Apr 16, 2026

Computer Vision AI: How Machines See and Shape Our World

Explore the transformative power of computer vision AI. This data-driven guide covers core concepts like CNNs, object detection, and real-world applications.

Beyond the Pixels: How AI Learns to See

The global computer vision technology market reached a significant $19.83 billion in 2024, a figure that underscores a fundamental shift in how we interact with the digital and physical worlds. According to recent analysis from Ultralytics, this market is not just growing; it's accelerating at a projected annual rate of 19.8%. This explosive growth is fueled by an insatiable demand for systems that can interpret the visual world with human-like acuity. Every day, billions of cameras-on smartphones, in cars, on factory floors, and orbiting our planet-capture an immeasurable torrent of visual data. This data, in its raw form, is just a collection of pixels. The core challenge, and the immense opportunity, lies in transforming this unstructured visual noise into structured, actionable intelligence.

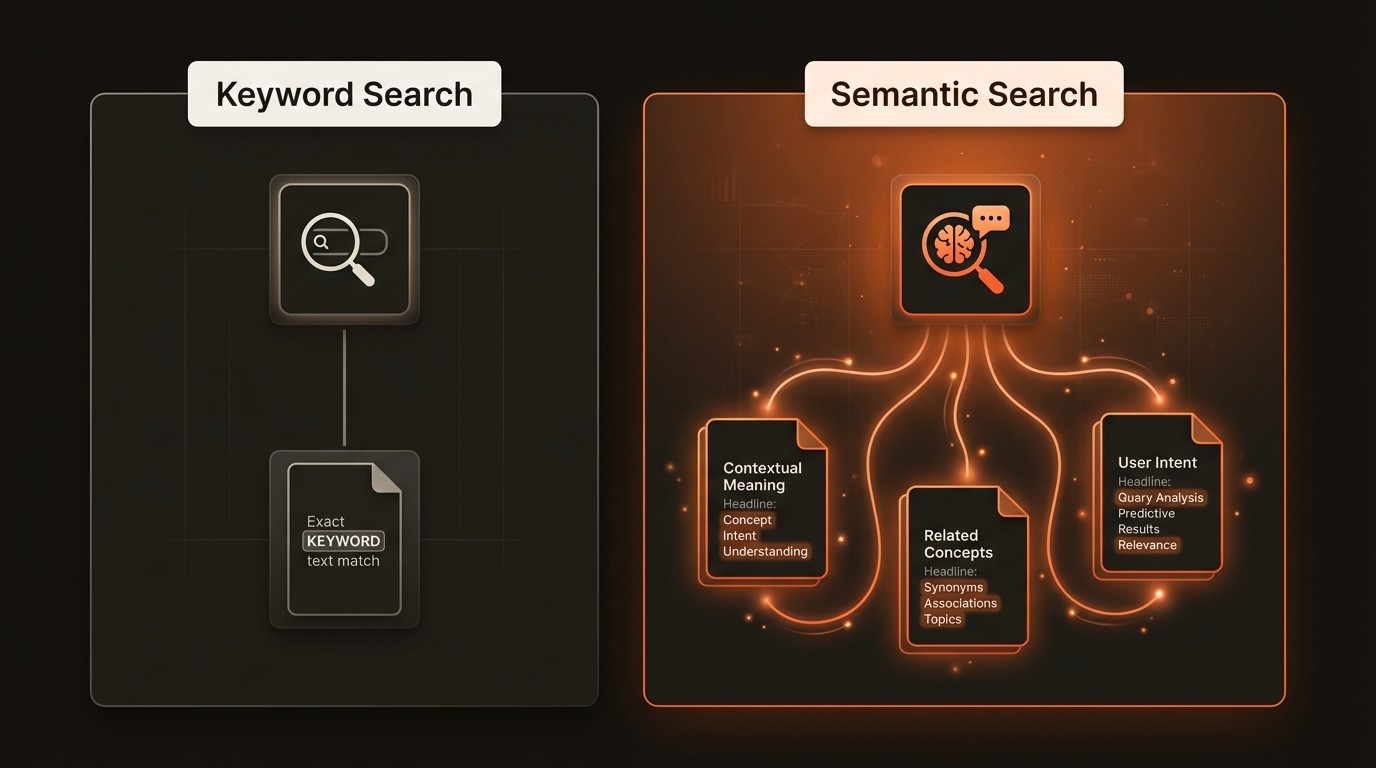

This is where computer vision AI comes into play. It is the scientific discipline focused on enabling machines to derive meaningful information from digital images, videos, and other visual inputs. Unlike simple image processing, which might adjust brightness or apply a filter, computer vision aims for genuine understanding. It seeks to replicate the complexity of human vision, allowing a machine to not only see an object but to recognize what it is, where it is, and what it is doing. This leap from perception to comprehension is what allows an autonomous vehicle to distinguish a pedestrian from a lamppost or a medical AI to identify malignant cells in a biopsy slide. The field is moving beyond academic research and into the core of modern industry, becoming an essential component of automation, analytics, and intelligent systems.

The implications of this technology are profound and far-reaching. We are building systems that can monitor critical infrastructure for defects, guide robotic surgeons with superhuman precision, and create entirely new forms of interactive entertainment. As the algorithms become more sophisticated and the hardware more powerful, the barrier to entry is lowering, allowing developers and businesses to tackle problems that were once the exclusive domain of science fiction. This article explores the foundational concepts that power modern computer vision, examines the real-world challenges it solves, and provides a practical roadmap for harnessing its capabilities. We will investigate the core technologies, from convolutional neural networks to semantic segmentation, and see how they are being applied to drive innovation across diverse sectors.

The Challenge of Unstructured Visual Data

While the potential of computer vision is immense, operationalizing it presents significant technical and logistical hurdles. The primary challenge stems from the nature of visual data itself-it is high-dimensional, unstructured, and context-dependent. Extracting reliable insights requires overcoming several key pain points that organizations face when attempting to deploy AI vision systems at scale. These challenges are not trivial; they represent fundamental barriers that can stall projects and limit the return on investment if not properly addressed from the outset. Understanding these problems is the first step toward building robust and effective solutions.

First, the sheer volume of visual data creates a problem of data overload and manual analysis. A single security camera can generate terabytes of footage in a month, and a modern factory might have hundreds of such cameras monitoring production lines. Manually reviewing this content is not just inefficient; it's impossible. This leads to a scenario where over 90% of captured video data is never analyzed, becoming "dark data" that consumes storage without providing value. The direct consequence is missed opportunities-a security threat goes unnoticed, a critical manufacturing defect is not caught in time, or a valuable customer behavior pattern is overlooked. The labor costs associated with manual review are prohibitive, and the process is too slow to be useful for real-time applications.

Second, even when manual analysis is feasible, it is plagued by the inaccuracy and subjectivity of human interpretation. Human analysts are susceptible to fatigue, distraction, and cognitive biases, which can lead to inconsistent and unreliable results. In a medical context, for example, the interpretation of an MRI scan can vary between radiologists, potentially affecting a patient's diagnosis. In quality control, what one inspector flags as a minor blemish, another might pass. This lack of standardization makes it difficult to enforce consistent quality standards and can introduce significant errors into critical processes. Machines, when properly trained, can apply the same criteria with perfect consistency, 24/7, eliminating the variability that undermines human-led analysis.

Third, many critical applications demand a level of real-time responsiveness that human-in-the-loop systems cannot achieve. An autonomous vehicle must identify and react to a pedestrian stepping into the road in milliseconds. A robotic arm in a logistics warehouse needs to instantly recognize and grasp the correct item from a moving conveyor belt. In these scenarios, the latency introduced by sending data to a cloud server for analysis, let alone waiting for a human decision, is unacceptable. This need for low-latency processing is driving the rapid growth of the AI-powered edge computing sector, which is expected to maintain an annual growth rate exceeding 20%. Without real-time capabilities, the application of computer vision is limited to offline, analytical tasks, excluding a vast range of automation and safety-critical use cases.

The Architectural Pillars of AI Vision

Modern computer vision is built upon a foundation of sophisticated machine learning models and techniques that have evolved dramatically over the past decade. At the heart of this revolution are deep learning architectures that can automatically learn hierarchical features from vast amounts of visual data. Understanding these core components is essential for anyone looking to build or deploy AI vision systems. These are not just abstract concepts; they are the practical tools that engineers use to solve complex visual understanding tasks, from simple classification to detailed scene interpretation.

Convolutional Neural Networks (CNNs): The Brains of the Operation

At the core of most modern computer vision systems is the Convolutional Neural Network (CNN). Inspired by the human visual cortex, a CNN is a type of deep neural network designed specifically for processing pixel data. It works by applying a series of filters (or kernels) to an input image, creating feature maps that highlight specific patterns like edges, corners, and textures. As the data passes through successive layers of the network, these simple features are combined to form more complex representations, such as shapes, object parts, and eventually, entire objects. This hierarchical feature extraction process allows the network to learn a robust and discriminative representation of the visual world automatically, without the need for manual feature engineering.

Object Detection: Finding and Classifying

While a CNN can classify an entire image, many applications require a more granular understanding. This is the role of object detection, a technique that involves identifying the location of specific objects within an image and classifying them. An object detection model outputs a set of bounding boxes, each with a corresponding class label (e.g., "car," "person") and a confidence score. This is achieved by combining a CNN backbone for feature extraction with additional network heads that propose potential object regions and then classify those regions. Popular architectures like YOLO (You Only Look Once) and Faster R-CNN have made real-time object detection a reality, enabling applications like traffic monitoring and automated surveillance.

Semantic Segmentation: Pixel-Level Understanding

For the most detailed scene understanding, we turn to semantic segmentation. Unlike object detection, which draws a box around an object, semantic segmentation classifies every single pixel in an image. The output is a segmentation map where each pixel is color-coded based on its class (e.g., all road pixels are purple, all building pixels are blue, all tree pixels are green). This provides an incredibly rich, pixel-perfect understanding of the scene's layout and composition. It is a critical technology for autonomous driving, where the vehicle needs to know the exact boundaries of the drivable road surface, and in medical imaging for precisely delineating the shape of a tumor. This granular data can be indexed by advanced platforms like VideoDB to enable highly specific queries, such as finding all video frames containing a person standing on a sidewalk next to a car.

Image Recognition: The Core Classification Task

Often used as a foundational component, image recognition (or image classification) is the task of assigning a single label to an entire image. For example, an image is classified as containing a "cat," a "landscape," or a "portrait." While simpler than detection or segmentation, it is a powerful tool for organizing large visual datasets and is often the first step in more complex analysis pipelines. The global image recognition market is a testament to its widespread adoption, expected to rise to $42.2 billion by the end of the year according to V7 Labs. This technology powers everything from photo-organizing apps to content moderation systems, providing a baseline level of understanding for visual content.

Computer Vision by the Numbers

Here's what the data reveals about the scale and trajectory of the computer vision market:

Metric | Key Figure | Industry Significance |

|---|---|---|

Global Computer Vision Market | **$19.83 billion** in 2024 | Reflects widespread adoption across industries and strong current demand. |

Projected Annual Market Growth | **19.8%** annually | Indicates sustained, high-speed growth and long-term investment in the technology. |

Image Recognition Market Size | **$42.2 billion** by year-end | Highlights the massive commercial value of core classification technologies. |

AI-Powered Edge Computing Growth | Exceeding 20% annually | Shows a critical shift towards real-time, on-device processing to reduce latency. |

Global Facial Recognition Market | **$13.4 billion** by 2028 | Demonstrates significant growth in identity verification and security applications. |

Generative AI Adoption Share | **76%** among AI trends | Signals the growing importance of synthetic data for training more robust models. |

From Raw Pixels to Actionable Intelligence

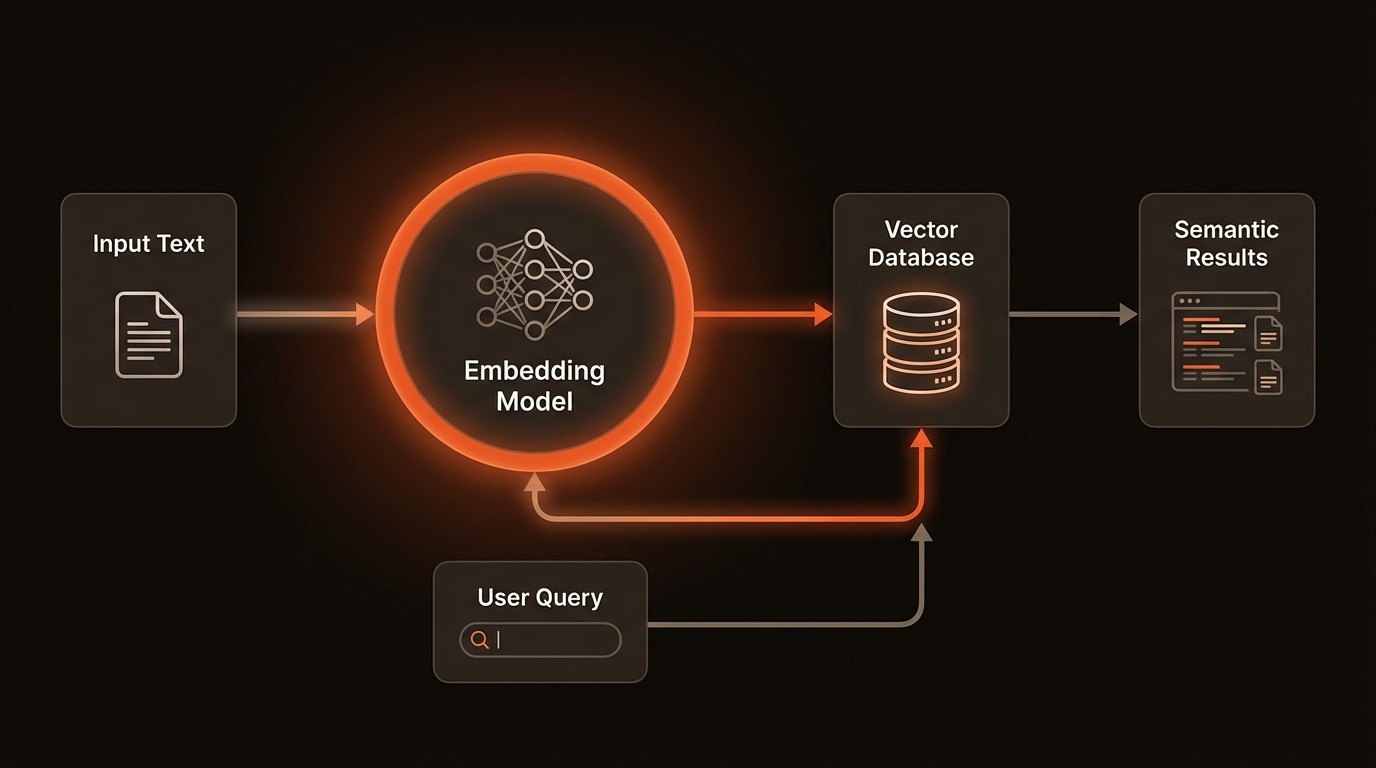

Implementing computer vision effectively is about building a pipeline that transforms raw visual data into structured, queryable, and actionable insights. This involves more than just training a model; it requires a robust infrastructure for data processing, metadata management, and real-time analysis. Modern solutions are increasingly focused on creating end-to-end systems that automate this entire workflow, enabling organizations to deploy AI vision at scale and integrate it seamlessly into their operations. These systems are designed to handle the complexities of visual data and deliver tangible business value.

Automated Feature Extraction and Indexing

One of the most powerful capabilities of modern computer vision is its ability to automatically extract rich, descriptive metadata from images and videos. Instead of relying on manual tags, AI models can identify and label objects, recognize faces, transcribe text, detect specific scenes, and even understand actions. This structured metadata becomes the key to unlocking the value of a visual archive. For example, a media organization can automatically catalog its entire library, making decades of footage searchable not just by title, but by the actual content within the video. A system like VideoDB is specifically designed to ingest this AI-generated metadata and index it for complex, high-speed queries, turning a passive video archive into an active, intelligent asset and reducing manual effort by over 95%.

Real-Time Analysis at the Edge

The demand for immediate insights has pushed processing from the centralized cloud to the network edge. Edge computing involves deploying AI models directly onto devices like smart cameras, drones, or on-premise servers. This architecture is critical for applications where latency is a major concern. A smart factory, for instance, can use cameras on an assembly line to run a defect detection model in real-time. When a faulty product is identified, the system can instantly trigger an alert or stop the conveyor belt, preventing the issue from propagating. This approach, which is driving the AI edge computing sector's growth of over 20% annually, also reduces bandwidth costs and enhances data privacy by keeping sensitive information on-site.

Generative AI for Data Augmentation and Synthesis

A significant bottleneck in developing high-performance computer vision models is the availability of large, diverse, and well-labeled training datasets. Generative AI offers a powerful solution to this problem. Models like Generative Adversarial Networks (GANs) or diffusion models can create realistic, synthetic images and video clips to augment existing datasets. This is particularly useful for training models to recognize rare events or dangerous scenarios that are difficult to capture in the real world. An autonomous vehicle developer can generate thousands of photorealistic simulations of accidents or unusual road hazards, creating a more robust and resilient perception system. This ability to synthetically generate data is a key reason why generative AI now accounts for a 76% share of usage among top AI trends.

Computer Vision in Practice

The theoretical capabilities of computer vision are impressive, but its true impact is realized through its practical application across various industries. From making our roads safer to improving healthcare outcomes, AI vision is being deployed to solve tangible problems and create new efficiencies. These real-world use cases demonstrate the technology's versatility and its ability to deliver measurable results when integrated thoughtfully into existing workflows and processes.

Autonomous Vehicles: Navigating the World

In the automotive industry, computer vision is the cornerstone of autonomous driving technology. Self-driving cars and advanced driver-assistance systems (ADAS) rely on a suite of cameras to perceive and understand their environment in real-time. This is not a simple task; it requires a multi-layered visual understanding.

Specifically, object detection models are used to identify and track other vehicles, pedestrians, cyclists, and traffic signs. Simultaneously, semantic segmentation provides a pixel-level map of the surroundings, clearly delineating drivable road surfaces, lane markings, sidewalks, and obstacles. As noted by Ultralytics, this capability is critical for safe navigation. The data from these systems feeds into the car's decision-making algorithms, enabling it to accelerate, brake, and steer safely. The measurable outcome is a reduction in human error, which is a factor in over 90% of traffic accidents, paving the way for a future with safer, more efficient transportation.

Healthcare: Enhancing Diagnostic Accuracy

Computer vision is transforming medical imaging, acting as a powerful tool for radiologists and clinicians. Medical scans such as MRIs, CT scans, and X-rays contain vast amounts of complex visual information that can be challenging for the human eye to interpret consistently.

AI models, trained on millions of annotated medical images, can analyze these scans to detect and highlight potential anomalies, such as tumors, lesions, or fractures. For example, a deep learning model can segment a brain tumor from an MRI scan with high precision, allowing doctors to measure its volume and plan treatment more effectively. This doesn't replace the radiologist but rather augments their expertise, acting as a tireless second reader. The result is improved diagnostic accuracy, a reduction in analysis time by up to 30-40% in some cases, and the potential for earlier detection of life-threatening diseases, leading to better patient outcomes.

Retail: Optimizing Store Operations and Experience

Brick-and-mortar retailers are leveraging computer vision to bring the data-driven insights of e-commerce into the physical store. By analyzing video feeds from in-store cameras, retailers can gain a deep understanding of customer behavior and optimize store operations.

Implementations include tracking foot traffic to identify popular zones and bottlenecks, allowing for improved store layouts. Shelf-monitoring systems can automatically detect when a product is out of stock and send an alert to staff, preventing lost sales. Some retailers are using vision systems to analyze customer demographics and dwell times at specific displays to measure the effectiveness of marketing campaigns. With the facial recognition market projected to reach $13.4 billion by 2028, frictionless checkout systems that identify customers and process payments automatically are also becoming more common. These applications lead to increased operational efficiency, better inventory management, and a more personalized and seamless customer experience.

A Practical Roadmap to AI Vision

Adopting computer vision technology can seem daunting, but a structured, phased approach can de-risk the process and ensure that initiatives are aligned with business objectives. The key is to start with a well-defined problem and iterate, building complexity and scale over time. This roadmap outlines five key steps for successfully integrating AI vision into your operations.

Define a High-Impact Use Case: Before writing any code or buying any hardware, identify a specific, measurable business problem that computer vision can solve. This could be reducing defects on a production line, automating a manual inspection process, or improving security monitoring. Quantify the current state by gathering baseline metrics, such as error rates or processing times. A clear definition of success will guide the entire project and make it easier to demonstrate ROI.

Data Collection and Preparation: High-quality data is the lifeblood of any machine learning system. Begin by collecting a representative dataset of images or videos that capture the full range of conditions your model will encounter in the real world. This includes different lighting, angles, and edge cases. This data must then be accurately labeled (or annotated) to train the model. This is often the most time-consuming part of the process, but its importance cannot be overstated, as data quality directly impacts model performance.

Model Selection and Fine-Tuning: You don't need to build a model from scratch. Leverage state-of-the-art, pre-trained models like YOLOv8 or ResNet and fine-tune them on your specific dataset. This process, known as transfer learning, requires significantly less data and computational resources than training a model from zero. Choose a model architecture that balances the required accuracy with the computational constraints of your deployment environment (e.g., a lightweight model for an edge device).

Integration and Deployment: Once the model is trained and validated, it must be integrated into a production workflow. This involves deploying it on the target hardware-be it a cloud server, an edge device, or an embedded system. For applications involving large volumes of video, this is the stage where you would integrate with a specialized video database like VideoDB. This allows you to efficiently store, index, and query the metadata generated by your model, making the insights accessible to downstream applications.

Monitor, Iterate, and Scale: A computer vision model is not a one-and-done project. Once deployed, its performance must be continuously monitored to detect concept drift-where the model's accuracy degrades as real-world conditions change. Establish a feedback loop to collect new data, particularly on instances where the model fails. Use this data to periodically retrain and redeploy the model, ensuring it remains accurate and reliable over time. This iterative process is key to long-term success and scaling the solution across the organization.

Common Questions About Computer Vision AI

Q: What is the difference between computer vision and image recognition?

A: Image recognition is a subset of computer vision. It focuses on identifying and categorizing an entire image with a single label, such as 'cat' or 'dog'. Computer vision is a broader field that encompasses not only recognition but also more complex tasks like object detection (locating multiple objects in an image), semantic segmentation (classifying every pixel), and motion analysis. Think of image recognition as identifying the main subject, while computer vision aims to understand the entire scene in detail.

Q: How much data is needed to train a computer vision model?

A: The amount of data required varies greatly depending on the complexity of the task and the approach used. Training a model from scratch can require millions of images. However, by using transfer learning-fine-tuning a pre-trained model-you can often achieve excellent results with just a few hundred or a few thousand labeled examples. The key is the quality and diversity of the data, not just the raw quantity.

Q: Can computer vision work in real-time?

A: Yes, real-time performance is a key focus of modern computer vision. Highly optimized models like the YOLO family can perform object detection on a live video stream at 30 frames per second or higher on appropriate hardware. Achieving real-time performance often involves a trade-off between model accuracy and speed, and it typically requires specialized hardware like GPUs or dedicated AI accelerators, especially for deployment on edge devices.

Q: What are the biggest challenges in computer vision today?

A: Despite significant progress, several challenges remain. Handling occlusions (where objects are partially hidden), variations in lighting and weather, and recognizing objects in cluttered scenes are ongoing areas of research. Another major challenge is the 'long tail' problem-training models to recognize rare objects or events for which there is very little training data. Finally, ensuring models are robust against adversarial attacks (subtle manipulations to an image designed to fool the model) is a critical concern for security applications.

Q: Is computer vision expensive to implement?

A: The cost can range from very low to very high. Using off-the-shelf APIs for simple tasks can be quite affordable. A custom, large-scale implementation will involve costs for data collection and labeling, model training (which can be computationally expensive), and deployment hardware. However, the return on investment from automation, improved quality control, and new data insights often far outweighs the initial setup costs, especially when the solution is targeted at a high-value business problem.

Key Takeaways

The computer vision market is experiencing rapid growth, projected to expand by 19.8% annually, driven by its adoption across major industries.

Core technologies like Convolutional Neural Networks (CNNs), object detection, and semantic segmentation are the building blocks of modern AI vision systems.

Real-world applications in autonomous vehicles, healthcare, and retail demonstrate the technology's ability to improve safety, accuracy, and efficiency.

The shift towards AI at the edge is critical for enabling real-time applications that require low latency and data privacy.

Successful implementation follows a structured path: defining a clear use case, preparing high-quality data, leveraging pre-trained models, and establishing a continuous monitoring and iteration loop.