Feb 19, 2026

Episodic Memory for AI Agents: Why Experiences Matter More Than Facts

AI agents need episodic memory to remember experiences, not just facts. Learn why video-based episodic recall enables grounded, evidence-backed agent responses.

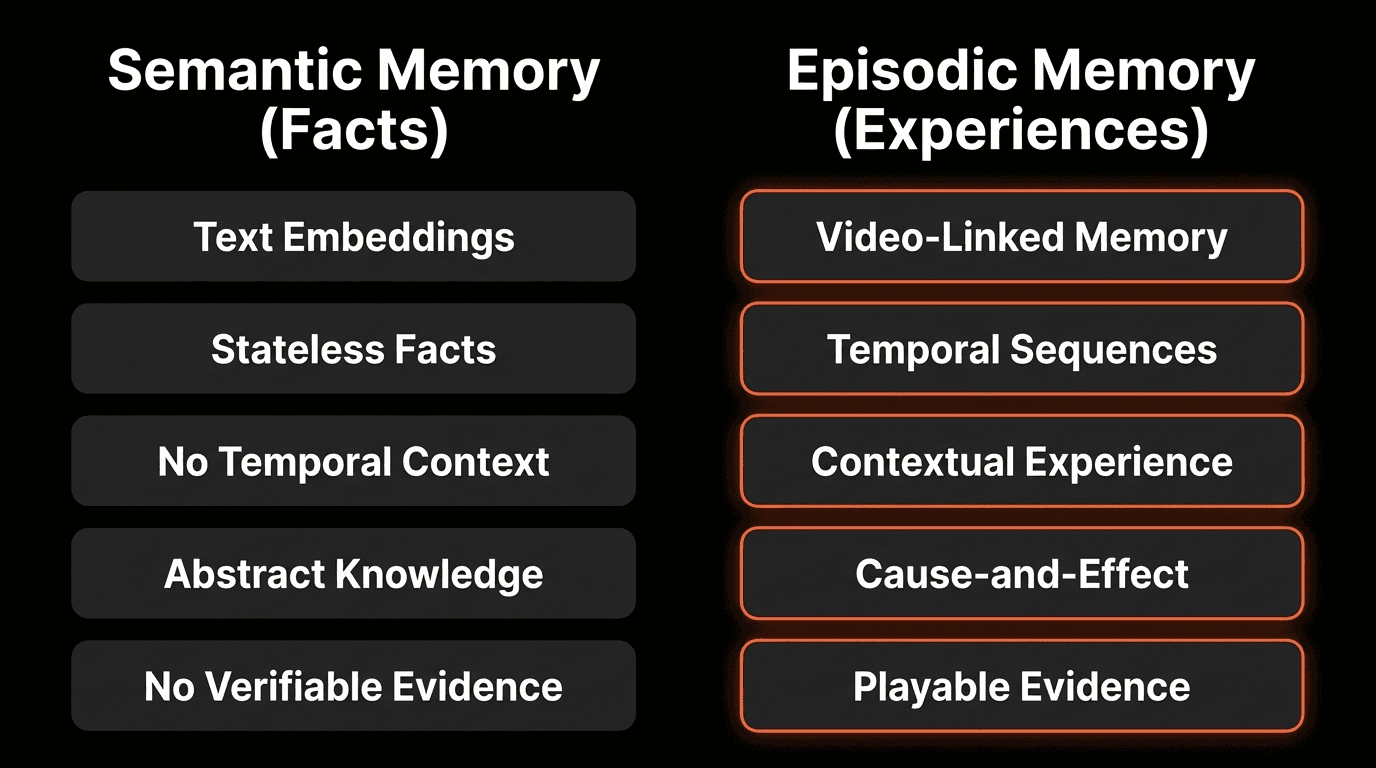

Two Types of Memory: Semantic vs Episodic

When you remember a meeting, you don’t recall a JSON object. You remember the moment: the room, the voice, the pause before someone made a critical point.

This distinction is fundamental to how human memory works. Cognitive neuroscience identifies two primary memory systems:

Semantic Memory: Timeless Facts

Semantic memory stores facts, concepts, and knowledge without temporal or experiential context:

Examples:

“The capital of France is Paris”

“Water boils at 100°C at sea level”

“Python is an object-oriented programming language”

“Our enterprise tier costs $299/month”

Characteristics:

Timeless and context-free

Declarative (can be stated as facts)

Decontextualized from original learning experience

Easy to store in databases and documents

Episodic Memory: Experienced Events

Episodic memory stores personally experienced events with temporal and contextual information:

Examples:

“I remember the meeting where we discussed the budget last Tuesday”

“That call where the client mentioned timeline concerns at 2:15 PM”

“The moment when Sarah showed the pricing slide and Tom paused before responding”

“When I was debugging that error and the stack trace appeared on screen”

Characteristics:

Time-stamped (when it happened)

Contextual (where, who was present, what else was happening)

Experiential (what I saw and heard)

Continuous (part of a sequence of events)

Why Episodic Memory Matters for AI

Most AI agent memory systems today are purely semantic. Vector databases store embeddings of text chunks. RAG (Retrieval-Augmented Generation) retrieves relevant documents. Tool outputs provide factual data.

But semantic memory can’t answer experiential questions.

The Query Test

Consider these common questions asked of AI agents:

| Query | Memory Type Required | Why Semantic Memory Succeeds or Fails |
|---|---|---|
| “What is our pricing model?” | Semantic | Succeeds: the answer can be retrieved from docs |
| “What did the client say about pricing last Tuesday?” | Episodic | Fails: needs the temporal context of a specific meeting |
| “How many people attended the meeting?” | Episodic | Fails: requires visual memory of the event |
| “What was on screen when they mentioned the deadline?” | Episodic | Fails: needs multimodal temporal context |
| “When did this error first appear?” | Episodic | Fails: requires a timeline of observed events |
| “Show me the moment the client expressed concern” | Episodic | Fails: needs to recall a specific experiential moment |
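The table implies a simple routing rule: questions about experienced events go to episodic memory, everything else to semantic memory. The sketch below illustrates that rule with a keyword heuristic. The cue list and the `route` function are hypothetical choices for illustration, not part of any particular product, and a heuristic like this would misroute many real queries; a production agent would more likely use a classifier or let the language model decide.

```python
import re

# Hypothetical cue phrases that suggest the user is asking about an experienced event.
EPISODIC_CUES = re.compile(
    r"\b(when|say|said|attended|moment|show me|on screen|first appear"
    r"|last (?:week|tuesday|meeting))\b",
    re.IGNORECASE,
)

def route(query: str) -> str:
    """Send queries about experienced events to episodic memory, the rest to semantic memory."""
    return "episodic" if EPISODIC_CUES.search(query) else "semantic"

queries = [
    "What is our pricing model?",
    "What did the client say about pricing last Tuesday?",
    "How many people attended the meeting?",
    "What was on screen when they mentioned the deadline?",
    "When did this error first appear?",
    "Show me the moment the client expressed concern",
]
for q in queries:
    print(f"{route(q):9} {q}")
```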

According to research from MIT’s Brain and Cognitive Sciences Department (2025), episodic queries comprise 60-70% of human information-seeking behavior in work contexts, yet current AI agents can only answer semantic queries effectively.

The Business Impact

Without Episodic Memory:

“The client mentioned pricing” → No verification, no context, potential hallucination

“I think we discussed this last week” → Uncertain, no evidence

“Someone raised concerns” → Who? When? What specifically?

With Episodic Memory:

“At 14:32 on Tuesday, client said ‘We need to revisit the $299 tier’ [play clip]” → Verifiable, specific, grounded

“Three times across two meetings: [timestamp 1], [timestamp 2], [timestamp 3]” → Precise, comprehensive

“Sarah at 10:15 AM: ‘This timeline is aggressive’ [play clip]” → Attributed, timestamped, playable

The future of reliable AI isn’t just better language models. It’s agents that can show you what they observed, when they observed it, and prove they’re not hallucinating.

— Perspective aligned with ideas shared by Dr. Josh Tenenbaum, Professor of Cognitive Science, MIT

Video as Natural Episodic Memory

Video recordings are inherently episodic structures. They possess exactly the characteristics that define episodic memory:

Four Episodic Properties of Video

1. Time-Indexed

Every frame has a precise timestamp

Events can be located temporally (“at 14:32”)

Sequence and causality are preserved

Example: “The error appeared at 10:23, 15 seconds after the user clicked submit”

2. Multi-Sensory

Visual information (what was shown)

Audio information (what was said)

Combined context (slides + speech in presentations)

Example: “While showing the pricing slide, the speaker mentioned competitive concerns”

3. Contextual

Shows the environment, not just isolated content

Captures non-verbal cues (pauses, tone, gestures)

Includes ambient context (who was present, where)

Example: “The three team leads were in the room when the deadline was set”

4. Continuous

Captures the flow of events over time

Shows before-and-after relationships

Preserves cause-and-effect sequences

Example: “The application crashed immediately after displaying the warning message”

When you record a meeting, customer call, training session, or screen capture, you’re creating episodic memory. The challenge is making it retrievable.

According to a Stanford Human-Computer Interaction study (2025), video-based episodic memory enables 4.3x faster information retrieval compared to text notes for experiential queries.

From Archives to Queryable Episodic Memory

Raw video recordings aren’t episodic memory in the useful sense. They’re just data. You can’t ask an MP4 file “what happened?”

The Traditional Archive Problem

Most organizations treat video recordings as archives, not memory:

Approach 1: Full Audio Transcription

Convert speech to text using ASR (Automatic Speech Recognition)

Store transcripts in searchable format

Problem: Loses all visual context, non-verbal cues, screen content

Example: Transcript says “this feature” but can’t show which feature was on screen

Approach 2: Frame Extraction & Analysis

Extract frames at intervals (e.g., 1 per second)

Run vision models on each frame

Problem: Expensive ($50-150/hour), loses temporal continuity between frames

Example: At sparser sampling rates, a brief key event can fall entirely between sampled frames; a 2-second moment can be missed when frames are taken every 5 seconds
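The sampling problem is simple arithmetic: an event is seen only if at least one sample timestamp falls inside its time window. The sketch below (a hypothetical helper, not part of any specific pipeline) checks this for a fixed sampling interval. It shows that 1 frame per second always lands inside an event lasting a second or more, while a 5-second interval can skip a 2-second event entirely.

```python
import math

def frames_in_event(start_s: float, end_s: float, interval_s: float) -> list[float]:
    """Return the sample times (multiples of interval_s) that fall inside [start_s, end_s)."""
    k = math.ceil(start_s / interval_s)  # index of the first sample at or after the event starts
    samples = []
    while k * interval_s < end_s:
        samples.append(k * interval_s)
        k += 1
    return samples

# A hypothetical 2-second event from 12.5 s to 14.5 s into the recording.
print(frames_in_event(12.5, 14.5, 1.0))  # [13.0, 14.0]: 1 fps catches it
print(frames_in_event(12.5, 14.5, 5.0))  # []: samples at 10 s and 15 s straddle it
```

Even when a frame does land inside the event, a single still image still loses what happened between frames, which is the continuity problem described above.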

Approach 3: Manual Note-Taking

Humans watch and document key moments

Store notes in wiki/docs

Problem: Doesn’t scale, subjective, incomplete

Example: Note-taker misses or misinterprets critical detail

Approach 4: Just Store It

Keep recordings in cloud storage

Hope someone can find something if needed

Problem: No queryability; finding anything requires watching the entire video at 1x speed

Example: “The discussion about pricing is somewhere in these 50 hours of footage”

None of these create true episodic memory. They create archives that require manual effort to access.

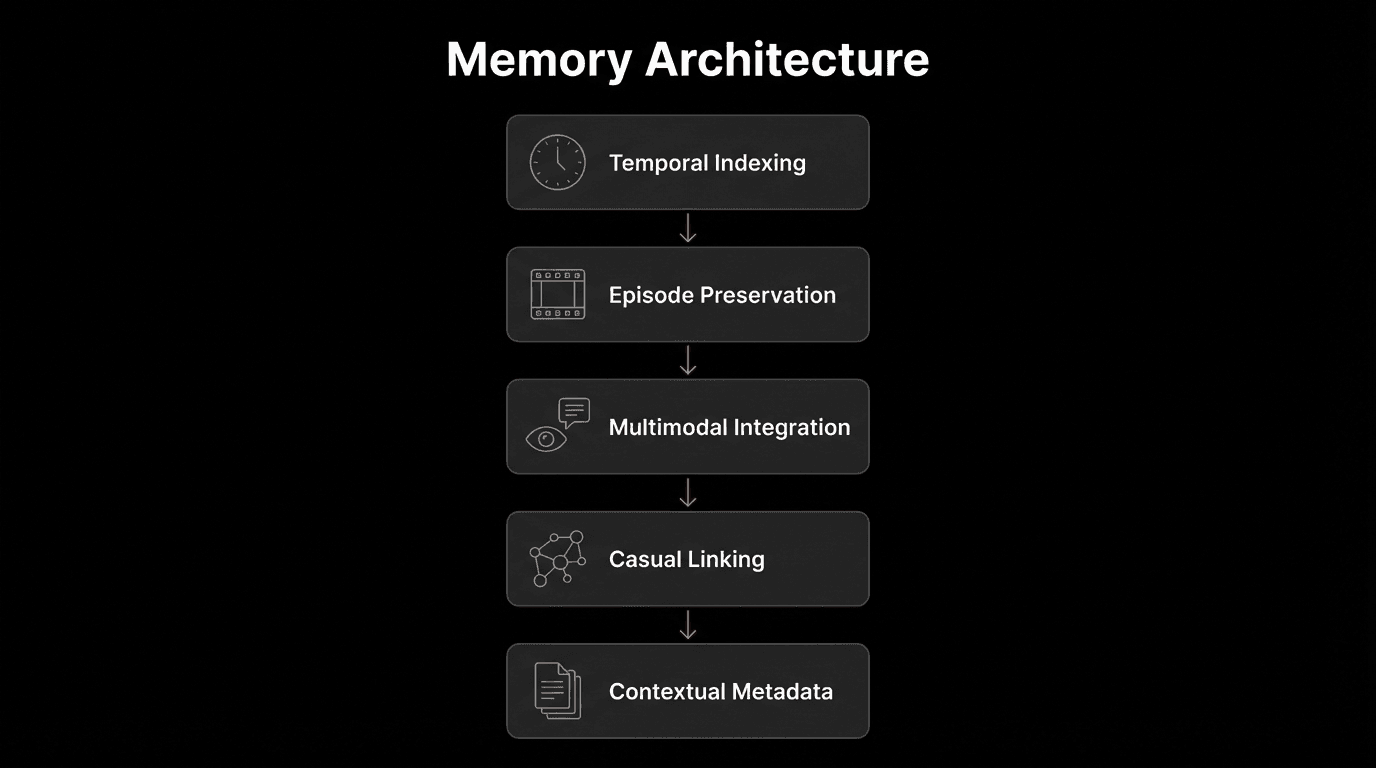

Indexed Episodic Memory: The Solution

The solution is to create searchable indexes that capture what happened and when:

What Makes This Episodic Memory:

Time-indexed: Every result has precise timestamps

Contextual: Semantic understanding of what happened

Multi-sensory: Combines audio (what was said) with visual (what was shown)

Queryable: Natural language search retrieves relevant moments

Verifiable: Links back to playable evidence

Continuous: Preserves temporal relationships

The index becomes the memory structure. It captures:

What happened (semantic content extracted from video/audio)

When it happened (precise timestamps)

Evidence (playable links to original recording)
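A minimal sketch of such an index follows, assuming each indexed moment carries a session ID, start and end offsets, a modality, the extracted text, and a link back to the clip. The `Moment` and `EpisodicIndex` names, the example.com URLs, and the keyword-overlap ranking are hypothetical; a real system would rank moments with embeddings or a video-understanding service rather than shared words.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class Moment:
    """One indexed moment: what happened, when it happened, and where to replay it."""
    session_id: str
    start_s: float   # offset into the recording, in seconds
    end_s: float
    modality: str    # "spoken" or "visual" (hypothetical labels)
    text: str        # transcript line or scene description
    clip_url: str    # link back to playable evidence (placeholder URL)

def tokens(text: str) -> set[str]:
    """Lowercase word tokens, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9$]+", text.lower()))

class EpisodicIndex:
    def __init__(self) -> None:
        self.moments: list[Moment] = []

    def add(self, moment: Moment) -> None:
        self.moments.append(moment)

    def search(self, query: str, top_k: int = 3) -> list[Moment]:
        """Rank moments by word overlap with the query (a stand-in for embedding search)."""
        terms = tokens(query)
        scored = [(len(terms & tokens(m.text)), m) for m in self.moments]
        hits = [(score, m) for score, m in scored if score > 0]
        # Highest overlap first; ties go to the earlier moment.
        hits.sort(key=lambda pair: (-pair[0], pair[1].start_s))
        return [m for _, m in hits[:top_k]]

index = EpisodicIndex()
index.add(Moment("tue-meeting", 872.0, 888.0, "spoken",
                 "This Q2 timeline is aggressive given our team's current capacity.",
                 "https://example.com/tue-meeting?t=872"))
index.add(Moment("tue-meeting", 860.0, 900.0, "visual",
                 "Slide titled 'Q2 launch timeline' with three milestones",
                 "https://example.com/tue-meeting?t=860"))
for m in index.search("aggressive timeline"):
    print(m.modality, m.start_s, m.text, m.clip_url)
```

Because every result carries its own offsets and clip link, the answer to a query is a set of replayable moments rather than a detached text snippet.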

Multi-Session Episodic Memory

Human episodic memory isn’t limited to single events. We remember patterns across multiple experiences: “Every time we’ve discussed pricing, there’s been pushback.”

AI agents need the same capability.

Cross-Session Recall

You can search across an entire collection of meetings and retrieve relevant moments across multiple sessions.

Example Output

The agent doesn’t just remember one meeting. It remembers all meetings and can surface patterns across time.
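Cross-session recall amounts to running the same query over every session in a collection and keeping each hit tied to its session and offset. The sketch below uses a hypothetical in-memory collection keyed by meeting date and plain substring matching; both are assumptions made to keep the example self-contained.

```python
from datetime import date

# Hypothetical collection: session date -> (offset in seconds, transcript line)
collection: dict[date, list[tuple[int, str]]] = {
    date(2026, 1, 15): [(125, "Can we revisit pricing for the pilot?"),
                        (900, "Next steps on onboarding")],
    date(2026, 1, 22): [(310, "Security review is scheduled for Friday")],
    date(2026, 1, 29): [(1475, "Pricing is still our main concern")],
}

def mmss(offset_s: int) -> str:
    """Format an offset in seconds as MM:SS."""
    minutes, seconds = divmod(offset_s, 60)
    return f"{minutes:02d}:{seconds:02d}"

def search_collection(term: str) -> list[tuple[str, str, str]]:
    """Return (session date, offset, line) for every mention of `term`, oldest session first."""
    hits = []
    for session_date in sorted(collection):
        for offset_s, line in collection[session_date]:
            if term.lower() in line.lower():
                hits.append((session_date.isoformat(), mmss(offset_s), line))
    return hits

for hit in search_collection("pricing"):
    print(hit)
# ('2026-01-15', '02:05', 'Can we revisit pricing for the pilot?')
# ('2026-01-29', '24:35', 'Pricing is still our main concern')
```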

Episodic Pattern Recognition

With multi-session memory, agents can identify:

Recurring topics: “Budget concerns mentioned in 5 of 7 client calls”

Evolving positions: “Client’s priority shifted from features to timeline”

Timeline of decisions: “First mentioned Jan 15, decided Jan 29, deadline set Feb 3”

Stakeholder patterns: “Sarah always raises security concerns during roadmap discussions”

This enables higher-order reasoning based on remembered experiences, not just isolated facts.
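Patterns such as “Budget concerns mentioned in 5 of 7 client calls” and “First mentioned Jan 15” reduce to counting which sessions contain a topic and when it first and last appeared. The sketch below assumes hypothetical transcripts keyed by call date and uses naive substring matching for topic detection; a real system would match topics semantically.

```python
from datetime import date
from typing import NamedTuple, Optional

class TopicPattern(NamedTuple):
    sessions_with_mention: int
    total_sessions: int
    first_mention: Optional[date]
    last_mention: Optional[date]

def topic_pattern(sessions: dict[date, list[str]], keyword: str) -> TopicPattern:
    """Count the sessions that mention `keyword` and record when it first and last came up."""
    kw = keyword.lower()
    dates = sorted(d for d, lines in sessions.items()
                   if any(kw in line.lower() for line in lines))
    return TopicPattern(len(dates), len(sessions),
                        dates[0] if dates else None,
                        dates[-1] if dates else None)

# Hypothetical transcripts from four client calls.
calls = {
    date(2026, 1, 8): ["Intro and goals", "Budget is tight this quarter"],
    date(2026, 1, 15): ["Demo of reporting features"],
    date(2026, 1, 22): ["Budget approval is pending"],
    date(2026, 1, 29): ["Timeline review", "We may cut budget for phase two"],
}
p = topic_pattern(calls, "budget")
print(f"Budget mentioned in {p.sessions_with_mention} of {p.total_sessions} calls, "
      f"first on {p.first_mention}, most recently on {p.last_mention}")
# Budget mentioned in 3 of 4 calls, first on 2026-01-08, most recently on 2026-01-29
```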

Ephemeral vs Persistent Episodic Memory

Not all perception should become permanent memory. Intelligent agents must be able to decide what to remember and what to discard based on context, privacy, and purpose.

Ephemeral Perception (No Storage)

Ephemeral perception allows agents to analyze data in real time without storing it.

Use Cases

Real-time event detection (security monitoring)

Privacy-sensitive contexts (personal screen capture)

Temporary sessions (one-time support calls)

Benefits

Privacy compliance (GDPR, CCPA)

Reduced storage costs

Real-time awareness without data-retention liability

Persistent Episodic Memory (Stored)

Persistent memory enables agents to retain experiences for long-term recall, analysis, and learning.

Use Cases

Meeting recordings (future reference)

Training content (reusable knowledge)

Compliance archives (regulatory needs)

Customer interactions (service improvement)

Benefits

Long-term organizational memory

Cross-session pattern analysis

Audit trails and compliance

Knowledge accumulation over time

Key Principle:

You control what your agent remembers based on privacy, compliance, and business requirements.
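One way to express that control is a retention policy consulted on every perception: the agent always reacts in real time, but writes to memory only when the policy says to persist. In the sketch below, the `Source` flags, the rule ordering (compliance first, then privacy, then business value), and the word-matching “detector” are all hypothetical assumptions, not a recommended compliance setup.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional

class Retention(Enum):
    EPHEMERAL = "ephemeral"    # analyze in real time, keep nothing
    PERSISTENT = "persistent"  # index for long-term recall

@dataclass(frozen=True)
class Source:
    name: str
    privacy_sensitive: bool = False
    compliance_required: bool = False
    long_term_value: bool = False

def retention_for(source: Source) -> Retention:
    """Hypothetical policy: compliance first, then privacy, then business value."""
    if source.compliance_required:
        return Retention.PERSISTENT
    if source.privacy_sensitive:
        return Retention.EPHEMERAL
    return Retention.PERSISTENT if source.long_term_value else Retention.EPHEMERAL

@dataclass
class Agent:
    memory: list[tuple[str, str]] = field(default_factory=list)

    def perceive(self, source: Source, observation: str) -> Optional[str]:
        """React in real time; write to memory only when the policy says to persist."""
        # Stand-in detector: flag any observation that contains the word "alert".
        alert = observation if "alert" in observation.lower() else None
        if retention_for(source) is Retention.PERSISTENT:
            self.memory.append((source.name, observation))
        return alert

agent = Agent()
agent.perceive(Source("personal-screen", privacy_sensitive=True), "Banking page open")
agent.perceive(Source("team-meeting", long_term_value=True), "Decided the Q2 launch date")
alert = agent.perceive(Source("security-camera"), "ALERT: door opened after hours")
print(alert)         # ALERT: door opened after hours
print(agent.memory)  # [('team-meeting', 'Decided the Q2 launch date')]
```

The security camera still raises its alert, but only the meeting observation survives in memory.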

Desktop Capture: Continuous Episodic Input

Desktop capture provides a continuous stream of experiential data that agents can perceive and index.

What the Agent Experiences Continuously

Screen content (everything visible)

Microphone input (spoken words)

System audio (notifications, calls, media)

The agent perceives the user’s digital environment in real time. With indexing enabled, these perceptions can become persistent episodic memory.

Continuous Context Example

User Activity Timeline

| Time | Event |
|---|---|
| 10:30 AM | Opens project documentation |
| 10:35 AM | Encounters terminal error |
| 10:37 AM | Searches Stack Overflow |
| 10:42 AM | Edits code file |
| 10:45 AM | Reruns code; error persists |

Later Interaction

User: “Remember when I was debugging that database connection error? What file was I looking at?”

Agent: “At 10:42 AM you were editing config/database.py. Here is what was on your screen: [playable clip]”
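Answering that question takes two lookups over the indexed session timeline: find when the error first appeared, then find the first file edit after it. “What was on screen at time T?” is a binary search for the latest event at or before T. The sketch below hard-codes the timeline above as a hypothetical list; the detail column (file names, error text) is illustrative, and `on_screen_at` and `first_after` are invented helper names.

```python
from bisect import bisect_right
from datetime import time
from typing import Optional

Event = tuple[time, str, str]  # (wall-clock time, what happened, detail)

# Hypothetical index of the session in the timeline above; details are illustrative.
timeline: list[Event] = [
    (time(10, 30), "Opens project documentation", "docs/README.md"),
    (time(10, 35), "Encounters terminal error", "database connection refused"),
    (time(10, 37), "Searches Stack Overflow", "browser"),
    (time(10, 42), "Edits code file", "config/database.py"),
    (time(10, 45), "Reruns code, error persists", "terminal"),
]

def on_screen_at(t: time) -> Optional[Event]:
    """Most recent event at or before t (binary search over the sorted timeline)."""
    i = bisect_right([e[0] for e in timeline], t)
    return timeline[i - 1] if i > 0 else None

def first_after(keyword: str, after: time) -> Optional[Event]:
    """First event strictly after `after` whose description contains `keyword`."""
    for event in timeline:
        if event[0] > after and keyword.lower() in event[1].lower():
            return event
    return None

error = first_after("error", time(0, 0))
edit = first_after("edits", error[0]) if error else None
if edit:
    print(f"At {edit[0].strftime('%I:%M %p')} you were editing {edit[2]}")
# At 10:42 AM you were editing config/database.py
```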

Strategic Insight

Ephemeral perception enables real-time intelligence without retention.

Persistent memory enables learning across time.

The most powerful AI agents combine both — deciding dynamically what should be remembered and what should remain momentary.

According to Anthropic’s 2025 Desktop AI study, agents with episodic memory of user sessions demonstrate 78% better task completion compared to context-less agents.

Grounded Answers Through Episodic Recall

The primary value of episodic memory is grounding agent responses in verifiable evidence.

The Hallucination Problem

Without Episodic Memory

User: "What did the client say about our timeline?"

Agent: "I believe the client mentioned concerns about the timeline being too aggressive in the last meeting."

Problems:

"I believe" = uncertainty signal

"Last meeting" = vague temporal reference

No verification possible

Potential hallucination

Evidence-Grounded Recall

With Episodic Memory

User: "What did the client say about our timeline?"

Agent: "At 14:32 in Tuesday's meeting, Sarah (client CTO) said:

'This Q2 timeline is aggressive given our team's current capacity.'

[Playable clip: 14:32–14:48]

Additionally, at 26:15, she mentioned:

'We need at least 3 weeks for internal testing before launch.'

[Playable clip: 26:15–26:29]"

Benefits:

Specific timestamps (14:32, 26:15)

Attribution (Sarah, client CTO)

Exact quotes (not paraphrased)

Playable verification (can watch the actual moments)

Lower hallucination risk (every claim is backed by evidence)
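The grounded response above can be produced by a composer that builds its answer only from retrieved moments and declines when none clear a relevance threshold, instead of guessing. In this sketch, the `Evidence` fields, the 0.5 threshold, the 0-to-1 score scale, and the fallback wording are hypothetical; the timestamps are offsets into the recording, matching the clip ranges above.

```python
from dataclasses import dataclass

@dataclass
class Evidence:
    speaker: str
    start_s: int   # offset into the recording, in seconds
    end_s: int
    quote: str
    score: float   # retrieval relevance in [0, 1] (hypothetical scale)

def mmss(seconds: int) -> str:
    """Format an offset in seconds as M:SS."""
    minutes, secs = divmod(seconds, 60)
    return f"{minutes}:{secs:02d}"

def grounded_answer(evidence: list[Evidence], min_score: float = 0.5) -> str:
    """Compose an answer only from retrieved moments; decline rather than guess."""
    supported = sorted((e for e in evidence if e.score >= min_score), key=lambda e: e.start_s)
    if not supported:
        return "I couldn't find a recorded moment that answers this."
    return "\n".join(
        f"At {mmss(e.start_s)}, {e.speaker} said: '{e.quote}' "
        f"[Playable clip: {mmss(e.start_s)}–{mmss(e.end_s)}]"
        for e in supported
    )

evidence = [
    Evidence("Sarah (client CTO)", 1575, 1589,
             "We need at least 3 weeks for internal testing before launch.", 0.82),
    Evidence("Sarah (client CTO)", 872, 888,
             "This Q2 timeline is aggressive given our team's current capacity.", 0.91),
    Evidence("Tom", 300, 310, "Let's grab lunch after this.", 0.12),
]
print(grounded_answer(evidence))
```

The low-scoring aside is dropped, the remaining quotes appear in recording order, and an empty retrieval produces an explicit “not found” instead of an “I believe…” guess.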

Trust Through Verification

The difference between these responses is trust. Episodic memory with playable evidence enables:

Verification: User can watch the clip to confirm

Attribution: Clear who said what

Context: See the full situation, not just extracted text

Confidence: Agent certainty based on observed evidence, not inference

Audit Trail: Complete record of what was actually said/shown

According to UC Berkeley’s AI Safety Lab (2025), verifiable episodic memory reduces user-perceived hallucination rates by 89% compared to text-only agent memory.

The Future of Memory-Enabled Agents

The AI agents being built today will:

Perceive Continuously

Desktop: Screen, microphone, camera

Meetings: Video, audio, screen shares

Monitoring: Security cameras, IoT sensors

Operations: Manufacturing feeds, customer calls

Index What They Perceive

Spoken content: Transcripts and semantic understanding

Visual content: Scene descriptions, object detection, OCR

Events: Predefined patterns and anomalies

Context: Who, what, when, where
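Combining spoken and visual indexes (for example, linking a remark to the slide on screen while it was said) comes down to interval overlap: two half-open time ranges overlap when each starts before the other ends. The sketch below pairs each spoken segment with the visual segments that overlap it. The `Segment` type and sample data are hypothetical, and the nested loop is quadratic; for long recordings a sorted sweep or an interval tree would scale better.

```python
from typing import NamedTuple

class Segment(NamedTuple):
    start_s: float
    end_s: float
    text: str

def overlaps(a: Segment, b: Segment) -> bool:
    """Half-open intervals [start, end) overlap when each starts before the other ends."""
    return a.start_s < b.end_s and b.start_s < a.end_s

def align(spoken: list[Segment], visual: list[Segment]) -> list[tuple[Segment, list[Segment]]]:
    """Pair each spoken segment with the visual segments on screen at the same time."""
    return [(s, [v for v in visual if overlaps(s, v)]) for s in spoken]

# Hypothetical transcript and slide segments from one meeting.
spoken = [
    Segment(860.0, 875.0, "Our competitors are undercutting us"),
    Segment(1570.0, 1590.0, "We need three weeks of testing"),
]
visual = [
    Segment(840.0, 880.0, "Slide: Pricing tiers ($299 enterprise)"),
    Segment(880.0, 1600.0, "Slide: Q2 launch timeline"),
]
for said, shown in align(spoken, visual):
    print(said.text, "->", [v.text for v in shown])
```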

Remember Across Sessions

Single session: “What happened at 2 PM in today’s meeting?”

Multi-session: “Every time pricing was discussed in Q4”

Pattern recognition: “Client concerns have escalated over 3 meetings”

Timeline reconstruction: “How did this decision evolve?”

Answer with Evidence

Timestamp precision: “At 14:32…”

Playable proof: [Watch clip]

Confidence scores: “95% confidence this is relevant”

Source attribution: “Sarah said…” not “I believe…”

This isn’t science fiction. The architecture exists today.

The shift from semantic-only to episodic-enabled agents is happening now, enabling AI systems that don’t just know facts: they remember experiences with verifiable proof.

FAQs

Q: What is episodic memory for AI agents?

A: Episodic memory allows AI agents to remember experienced events with temporal and contextual information, not just timeless facts. It enables agents to recall “what happened when” with specific timestamps, visual context, and playable evidence, similar to human episodic memory of personal experiences.

Q: How is episodic memory different from semantic memory?

A: Semantic memory stores facts without temporal context (“Paris is the capital of France”). Episodic memory stores experiences with time and context (“The client mentioned pricing concerns at 2:15 PM on Tuesday”). Current AI agents primarily have semantic memory through vector databases and RAG.

Q: Why can’t vector databases provide episodic memory?

A: Vector databases store text embeddings for semantic similarity search, but lack temporal indexing, multimodal context (video+audio), and links to original experiential evidence. They can retrieve “documents about pricing” but not “the moment at 14:32 when pricing was discussed with visual context.”

Q: How does video enable episodic memory?

A: Video is inherently episodic: it’s time-indexed (every frame has a timestamp), multi-sensory (visual+audio), contextual (shows environment), and continuous (captures event sequences). Indexed video creates queryable episodic memory that can be searched and verified through playback.

Q: What’s the difference between episodic memory and just storing video files?

A: Stored video files are archives, not memory: you can’t query them semantically. Episodic memory requires searchable indexes that understand what happened and when, enabling natural language queries like “show me budget discussions” with timestamped, playable results.

Q: Can episodic memory work across multiple sessions?

A: Yes. Multi-session episodic memory allows agents to search across all recordings in a collection, identifying patterns like “every time pricing was discussed” or tracking how topics evolved over multiple meetings. This enables higher-order reasoning about recurring themes and temporal patterns.

Q: What is ephemeral vs persistent episodic memory?

A: Ephemeral memory processes and detects events in real time without storage (e.g., security monitoring, privacy-sensitive contexts). Persistent memory stores episodic indexes for future recall (e.g., meeting archives, training content). Organizations control what agents remember based on privacy and business needs.

Q: How does episodic memory reduce AI hallucinations?

A: Episodic memory grounds agent responses in verifiable evidence. Instead of “I believe the client said…” agents respond with “At 14:32, client said [exact quote] [playable clip].” UC Berkeley research shows this reduces user-perceived hallucination rates by 89%.

Q: What are the privacy implications of episodic memory?

A: Organizations control what’s stored: ephemeral processing for sensitive contexts (real-time only, no storage), local processing for private data (never leaves device), and selective indexing (store only non-sensitive portions). Episodic memory systems should support privacy-first architecture.

Q: How does desktop capture create continuous episodic memory?

A: Desktop capture records screen, microphone, and system audio, creating a continuous stream of the user’s digital experience. When indexed, this becomes searchable episodic memory enabling queries like “what was I working on when the error occurred?” with exact timestamps and visual context.

Key Takeaways

The Memory Gap:

• Current AI agents have only semantic memory (facts from docs/databases)

• 60-70% of human work queries are episodic (“What happened when?”)

• Semantic memory can’t answer experiential questions with temporal context

Two Memory Systems:

• Semantic: “Our pricing is $299/month” (timeless fact)

• Episodic: “Client questioned pricing at 2:15 PM Tuesday [playable clip]” (experienced event)

• Humans have both; AI agents need both

Video as Episodic Structure:

• Time-indexed (precise timestamps)

• Multi-sensory (visual + audio)

• Contextual (shows environment, non-verbal cues)

• Continuous (captures event sequences and causality)

From Archives to Memory:

• Raw video files are archives (not queryable)

• Indexed video becomes episodic memory (semantically searchable)

• Natural language queries return timestamped, playable evidence

• Multi-session memory enables pattern recognition across recordings

Grounded Answers:

• Without episodic memory: “I believe…” (uncertain, unverifiable)

• With episodic memory: “At 14:32 [exact quote] [playable clip]” (verified, grounded)

• 89% reduction in perceived hallucinations through evidence-backed responses

The Future:

• Agents perceive continuously (desktop, meetings, cameras)

• Index what they perceive (spoken, visual, events)

• Remember across sessions (multi-meeting patterns)

• Answer with evidence (timestamp + playable proof)