Feb 19, 2026

From Video Files To Video Memory: The Infrastructure Shift Powering Intelligent Agents

Video sits locked in files, invisible to AI. Discover how transforming video into structured, queryable memory enables agents that can truly see, remember, and recall visual experiences.

The Hidden Problem with Video

Your organization probably has more video than you realize. Meeting recordings. Sales calls. Training sessions. Customer interactions. Security footage. Product demos. Hours and hours of rich, contextual information.

And almost none of it is usable by AI.

Video sits in storage as opaque files. To access its contents, you need to watch it manually, scrub through timelines, or hope that basic transcription captures enough. The actual visual information, the context, is locked away.

Today's AI can read your documents. It cannot see your videos.

This is not a minor gap. Video is the richest data your organization produces. It contains the complete context of human interaction: what was said, how it was said, who said it, and what was happening when they said it. Yet for AI systems, video remains a black box.

The shift from video files to video memory is the infrastructure change that unlocks this potential.

What "Video as Data" Actually Means

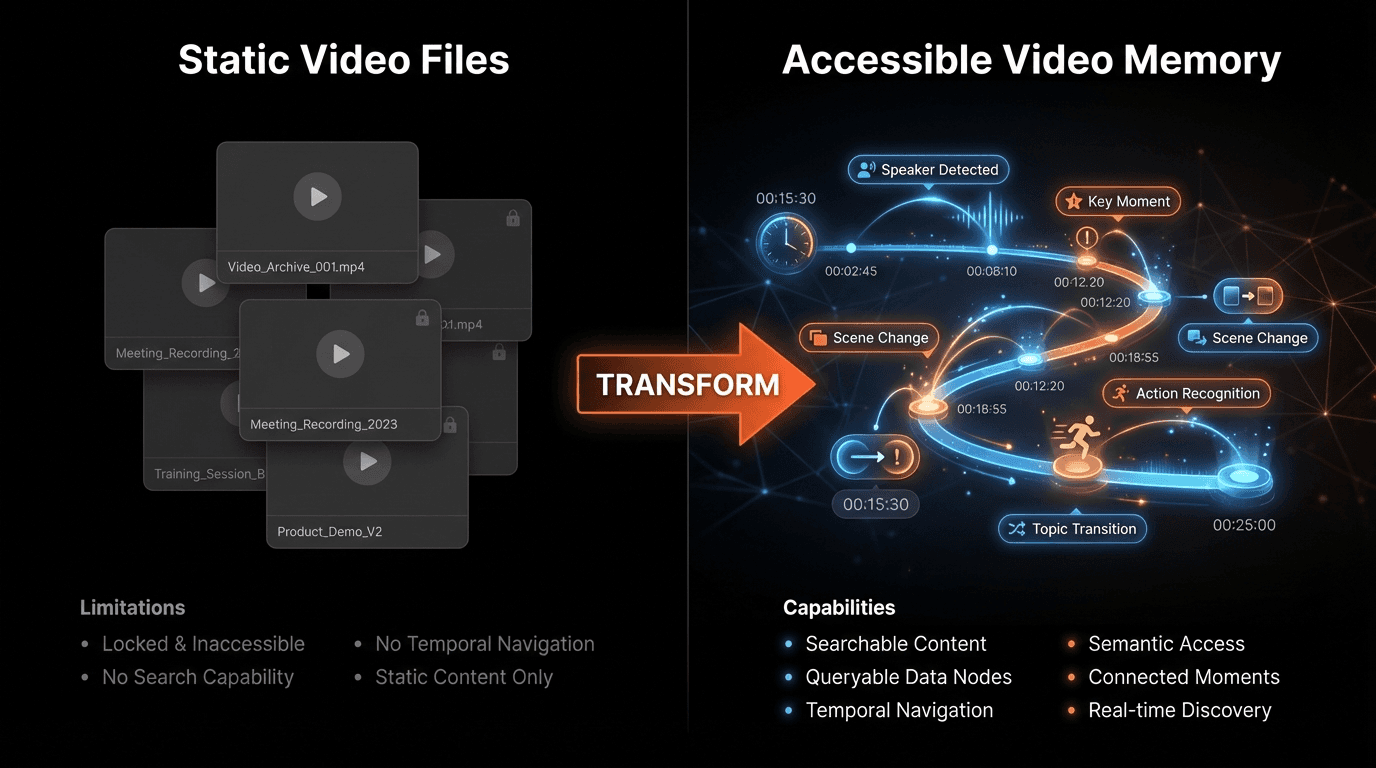

The current paradigm treats video as media: something to be stored, streamed, and watched. File systems hold MP4s. CDNs deliver streams. Players render frames. The video is an artifact, not information.

The emerging paradigm treats video as data: something to be indexed, queried, and reasoned over, just like text in a database.

| Video as Files | Video as Data (Memory) |
|---|---|
| Stored in file systems | Indexed in queryable databases |
| Accessed by timestamp only | Accessed by semantic meaning |
| Requires manual viewing | Enables automated understanding |
| Opaque to AI systems | Transparent to AI systems |
| Process entire files | Query specific moments |
| Expensive at scale | Efficient and selective |
| No temporal reasoning | Full time-indexed recall |
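A minimal Python sketch of the right-hand column: once video is indexed, the unit of storage is a time-bounded moment rather than a file, and a query returns moments. The `Moment` fields and the `find_moments` helper are hypothetical, not VideoDB's schema or API, and plain keyword matching stands in for real semantic search.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Moment:
    """One indexed, time-bounded slice of a video (hypothetical schema)."""
    video_id: str
    start_s: float  # segment start, in seconds from the beginning of the video
    end_s: float    # segment end, in seconds
    modality: str   # e.g. "speech", "visual", "on_screen_text"
    text: str       # transcript line, caption, or detected label


def find_moments(index: list[Moment], term: str) -> list[Moment]:
    """Return the moments whose text mentions `term` (case-insensitive),
    ordered by video and start time, instead of returning whole files."""
    needle = term.lower()
    hits = [m for m in index if needle in m.text.lower()]
    return sorted(hits, key=lambda m: (m.video_id, m.start_s))


index = [
    Moment("call-17", 312.0, 325.5, "speech", "We have budget constraints this quarter"),
    Moment("call-17", 40.0, 52.0, "speech", "Thanks for joining the demo"),
    Moment("call-09", 88.0, 96.0, "speech", "Budget approval is still pending"),
]

for m in find_moments(index, "budget"):
    print(m.video_id, m.start_s, m.end_s)  # a clip reference, not a file path
```

This prints `call-09 88.0 96.0` followed by `call-17 312.0 325.5`: two precise clips, rather than two whole recordings to scrub through.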

When video becomes data, AI agents can ask questions like:

"Show me the moment when the customer's expression changed during the pricing discussion"

"Find all instances where the speaker mentioned budget constraints"

"Compare the engagement levels across the three product demos"

"What happened in the five minutes before the system alert was triggered?"

These queries are impossible with video-as-files. They become natural with video-as-data.
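The last question is purely temporal. Once indexed events carry timestamps, "the five minutes before the alert" reduces to a range filter. The sketch below is minimal and assumes the index already holds time-stamped event descriptions. The `Event` type, the `window_before` helper, and the example events are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    t_s: float        # timestamp in seconds on the recording's timeline
    description: str  # what the index says happened at that time


def window_before(events: list[Event], anchor_s: float, window_s: float = 300.0) -> list[Event]:
    """Events in the half-open interval [anchor_s - window_s, anchor_s),
    oldest first. The default window is five minutes."""
    lo = anchor_s - window_s
    return sorted((e for e in events if lo <= e.t_s < anchor_s), key=lambda e: e.t_s)


events = [
    Event(1000.0, "door opened in server room"),
    Event(1450.0, "technician unplugs rack cable"),
    Event(1210.0, "badge scan at entrance"),
    Event(1500.0, "system alert triggered"),
    Event(1520.0, "alarm acknowledged"),
]

alert = next(e for e in events if e.description == "system alert triggered")
for e in window_before(events, alert.t_s):
    print(e.t_s, e.description)
```

Only the badge scan (1210 s) and the unplugged cable (1450 s) fall inside the five minutes before the alert. The event at 1000 s is too early, and the alert itself is excluded by the half-open interval.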

"The database revolution happened when we stopped treating text as documents and started treating text as queryable data. The same revolution is now happening with video. Organizations that make this shift will have capabilities their competitors cannot match."

— Perspective aligned with ideas shared by Satya Nadella, CEO of Microsoft

Why Video Memory Matters for AI Agents

AI agents are becoming the primary interface for enterprise intelligence. They answer questions, complete tasks, and make recommendations. Their effectiveness depends entirely on the information they can access.

The Context Problem

Most AI agents today operate with severe context limitations:

They can read documents but not see demonstrations

They can analyze transcripts but not observe expressions

They can process text but not understand video

This means agents are making decisions based on a fraction of available information. The richest context in your organization, video, remains invisible to them.

What Changes with Video Memory

When video becomes queryable memory, agents gain:

Visual grounding: Answers based on what actually happened, not just what was written

Temporal context: Understanding sequences, causes, and effects over time

Multimodal reasoning: Combining text, audio, and visual information

Episodic recall: Remembering specific experiences, not just facts

The Impact of Video Intelligence

Organizations with video-enabled AI see 156% improvement in insight accuracy (Gartner, 2025)

Decision-making speed increases 67% when agents can access video context (McKinsey)

Customer understanding improves 89% compared to transcript-only analysis (Forrester)

Training effectiveness increases 52% with video-based knowledge capture (Deloitte)

Video was forecast to account for 82% of all IP traffic by 2022 (Cisco Visual Networking Index)

The Technical Architecture of Video Memory

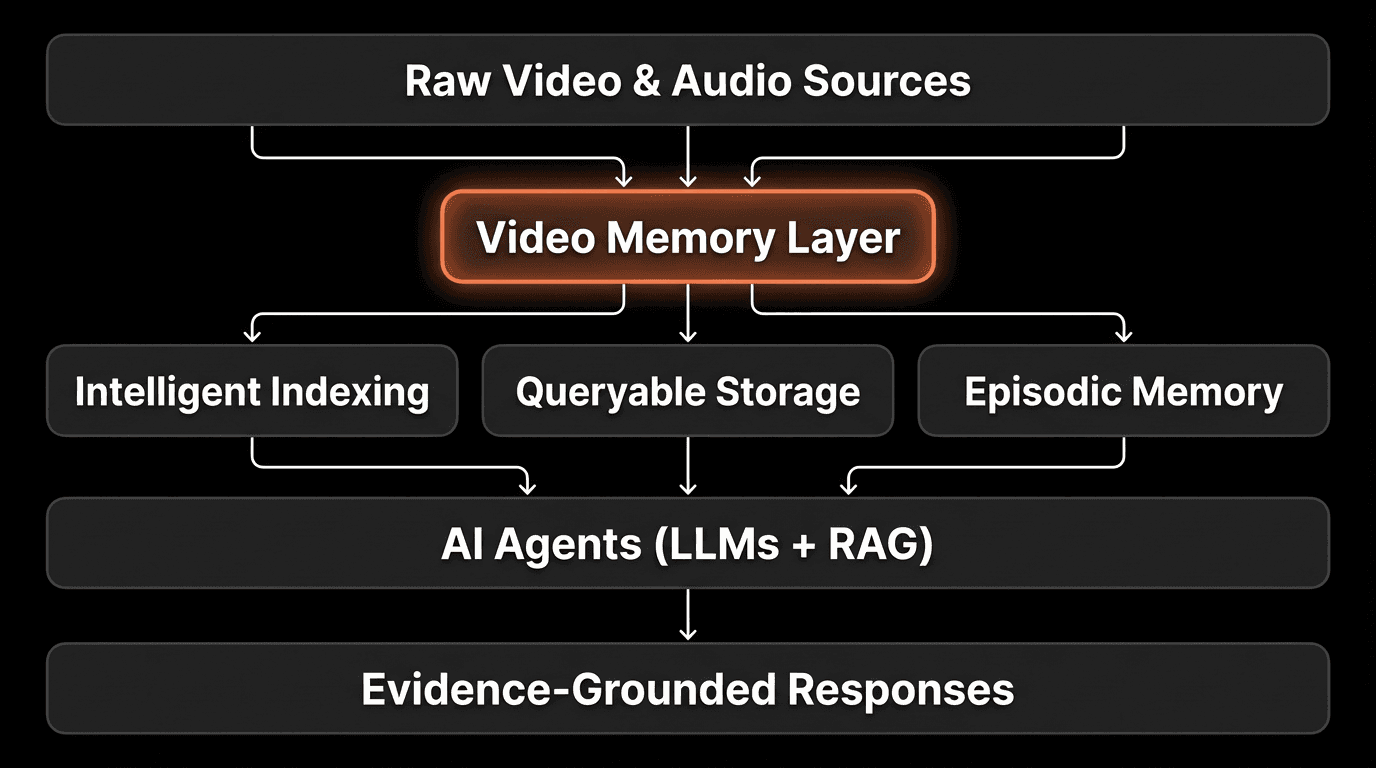

Transforming video files into video memory requires specialized infrastructure. This is not simply adding video to existing systems. It is a new architectural layer.

Component 1: Intelligent Indexing

Video must be analyzed and indexed at multiple levels:

Visual content: Objects, faces, actions, scenes, text on screen

Audio content: Speech, speaker identity, tone, music, sounds

Temporal markers: When events occur and how they relate in sequence

Semantic meaning: What the content is about, not just what it contains
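Multi-level indexing can be pictured this way. Each analyzer (object detection, speech recognition, on-screen text recognition) emits time-stamped labels, and the index merges them onto a single timeline. That lets you ask what was happening across all modalities at a given moment. This sketch assumes the analyzers have already run. The `Annotation` type, the helpers, and the labels are made up for illustration.

```python
from dataclasses import dataclass
from heapq import merge


@dataclass(frozen=True)
class Annotation:
    start_s: float
    end_s: float
    modality: str  # which analyzer produced the label
    label: str


def build_index(*streams: list[Annotation]) -> list[Annotation]:
    """Merge per-modality streams, each already sorted by start time,
    into one chronological index."""
    return list(merge(*streams, key=lambda a: a.start_s))


def at_time(index: list[Annotation], t_s: float) -> list[Annotation]:
    """Every annotation, from any modality, whose span [start_s, end_s) covers t_s."""
    return [a for a in index if a.start_s <= t_s < a.end_s]


visual = [
    Annotation(0.0, 30.0, "visual", "slide: Q3 pricing"),
    Annotation(30.0, 75.0, "visual", "face: presenter"),
]
speech = [
    Annotation(2.0, 14.0, "speech", "speaker A: here is the new pricing"),
    Annotation(14.0, 40.0, "speech", "speaker B: that is above our budget"),
]
on_screen = [Annotation(0.0, 30.0, "on_screen_text", "$49 per seat")]

index = build_index(visual, speech, on_screen)
for a in at_time(index, 20.0):
    print(a.modality, "|", a.label)
```

`heapq.merge` keeps the combined index sorted without re-sorting every stream. When two labels share a start time, they keep the order in which the streams were passed in.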

Component 2: Queryable Storage

Indexed video must be stored in a way that enables efficient retrieval:

Semantic search: Find content by meaning, not just keywords

Temporal queries: Retrieve before/after relationships

Cross-modal search: Query in text, retrieve in video

Efficient access: Return specific moments, not entire files
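Semantic search usually means comparing embedding vectors rather than matching words. The minimal sketch below uses cosine similarity. The three-dimensional vectors are hand-written stand-ins for what a learned embedding model would produce, and the segment IDs are hypothetical. The second-ranked segment never uses the word "budget". It ranks highly because its vector points in a similar direction to the query.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means same direction, 0.0 means orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query_vec, segments, k=2):
    """Rank (segment_id, vector) pairs by similarity to the query vector."""
    scored = sorted(
        ((cosine(query_vec, vec), seg_id) for seg_id, vec in segments),
        reverse=True,
    )
    return [(seg_id, round(score, 3)) for score, seg_id in scored[:k]]


# Toy 3-d "embeddings"; dimensions loosely mean [money, scheduling, product].
segments = [
    ("call-17@312s", [0.9, 0.1, 0.2]),  # "we can't stretch the spend this quarter"
    ("call-17@40s", [0.1, 0.2, 0.9]),   # walkthrough of the dashboard
    ("call-09@88s", [0.7, 0.6, 0.1]),   # "sign-off on the spend slips to next month"
]
query = [1.0, 0.0, 0.1]  # stand-in embedding of "budget constraints"

print(top_k(query, segments))
```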

Component 3: Agent Integration

Video memory must connect seamlessly to AI agents:

MCP (Model Context Protocol) compatibility for standardized access

Streaming support for real-time applications

Context formatting that LLMs can reason over

Efficient token usage, sending only relevant content

This is exactly what platforms like VideoDB provide: the infrastructure layer that transforms video from static files into queryable, intelligent memory for AI systems.
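The "context formatting" and "efficient token usage" points can be sketched as follows. Already-ranked moments are rendered as compact, timestamped lines, and formatting stops before a token budget is exceeded. This is not an MCP implementation. The `format_context` helper is hypothetical, and word counts serve as a rough proxy for the target model's tokenizer.

```python
def format_context(moments, max_tokens=60):
    """Render (video_id, start_s, text) tuples, already ranked by relevance,
    as timestamped lines for an LLM prompt, stopping before the budget is hit.

    Tokens are approximated by whitespace-separated words; a real system
    would count with the target model's tokenizer.
    """
    lines, used = [], 0
    for video_id, start_s, text in moments:
        mm, ss = divmod(int(start_s), 60)
        line = f"[{video_id} {mm:02d}:{ss:02d}] {text}"
        cost = len(line.split())
        if used + cost > max_tokens:
            break  # keep strict relevance order rather than back-filling
        lines.append(line)
        used += cost
    return "\n".join(lines)


moments = [
    ("call-17", 312.0, "Customer: we can't stretch the spend this quarter."),
    ("call-09", 88.0, "Approval for the spend slips to next month."),
    ("call-17", 40.0, "Walkthrough of the dashboard setup."),
]

print(format_context(moments, max_tokens=20))
```

With a 20-word budget, only the two most relevant moments are sent, timestamped as `05:12` and `01:28`. Stopping at the first overflow, rather than skipping it and trying smaller moments, keeps the prompt in relevance order.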

From Retrieval to Recall: What Makes It Memory

There is an important distinction between retrieval and memory. Retrieval finds content. Memory reconstructs experience.

Video Retrieval

Traditional video search finds clips that match a query. You search for "meeting about budget" and get a list of videos with budget-related content. You still need to watch them to understand what happened.

Video Memory

Video memory enables agents to recall experiences. The agent can answer "What concerns were raised about the budget in last week's meeting?" by accessing the specific moment, understanding the context, and synthesizing an answer without requiring you to watch anything.

This is the difference between a filing cabinet and a knowledgeable colleague. Both can find information. Only one can understand and explain it.

"The shift from search to memory is fundamental. Search requires the human to interpret results. Memory enables the system to interpret and respond. For AI agents to be truly useful, they need memory, not just retrieval."

— Perspective aligned with ideas shared by Dario Amodei, CEO of Anthropic

Practical Applications: Video Memory in Action

Sales Intelligence

The Problem: Sales teams have recordings of every call but cannot systematically learn from them. Reviewing calls takes hours. Insights remain anecdotal.

With Video Memory: AI agents answer questions like "Show me how top performers handle price objections" or "What do successful demos have in common?" The video library becomes an intelligence resource, not just an archive.

Customer Success

The Problem: Support interactions are recorded but siloed. When a customer escalates, the history is scattered across tickets and call logs.

With Video Memory: The agent recalls the entire customer journey: "This customer has had three support calls. In the first two, they expressed frustration with onboarding. In the last call, they mentioned considering alternatives." Context flows across interactions.
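At its core, the customer-journey example collects one customer's flagged moments across every indexed call and orders them by date. The sketch below is minimal and assumes each call has already been indexed and tagged with notable moments. How those tags are produced is out of scope. `CallMoment`, `journey`, and the data are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class CallMoment:
    customer_id: str
    call_date: date
    note: str  # what the index flagged in this call (hypothetical label)


def journey(moments: list[CallMoment], customer_id: str) -> list[CallMoment]:
    """One customer's flagged moments across all calls, oldest first."""
    mine = [m for m in moments if m.customer_id == customer_id]
    return sorted(mine, key=lambda m: m.call_date)


moments = [
    CallMoment("acme", date(2026, 1, 20), "mentioned considering alternatives"),
    CallMoment("globex", date(2026, 1, 5), "asked about SSO"),
    CallMoment("acme", date(2025, 12, 2), "frustrated with onboarding"),
    CallMoment("acme", date(2025, 12, 16), "frustrated with onboarding"),
]

for m in journey(moments, "acme"):
    print(m.call_date, m.note)
```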

Training and Enablement

The Problem: Training videos are watched once and forgotten. New hires cannot easily find answers to specific questions.

With Video Memory: The agent answers "How do I handle the setup for enterprise clients?" by finding and presenting the exact segment from training materials, with full visual context.

Meeting Intelligence

The Problem: Meetings are recorded but rarely reviewed. Decisions and commitments are lost.

With Video Memory: The agent tracks continuity across meetings: "Last week, the team committed to delivering the prototype by Friday. In this week's meeting, there was no update on that commitment." Organizational memory persists.
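Commitment tracking across meetings can be sketched by checking each commitment extracted from an earlier meeting against a later meeting's transcript. Here, plain substring matching on a hypothetical key phrase stands in for the semantic matching a real system would need. `open_commitments` and the sample data are illustrative only.

```python
def open_commitments(commitments: list[dict], later_transcript: str) -> list[dict]:
    """Commitments from an earlier meeting whose key phrase never appears
    (case-insensitive) in a later meeting's transcript."""
    later = later_transcript.lower()
    return [c for c in commitments if c["key"].lower() not in later]


last_week = [
    {"owner": "team", "what": "deliver the prototype by Friday", "key": "prototype"},
    {"owner": "Dana", "what": "send revised pricing", "key": "pricing"},
]
this_week = "Dana shared the revised pricing deck. We discussed hiring and the Q2 roadmap."

for c in open_commitments(last_week, this_week):
    print(f"No update on: {c['owner']} to {c['what']}")
```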

Business Outcomes

Sales teams with video memory shorten ramp time by 43% (Sales Enablement Society)

Customer retention improves 37% with full-context support interactions (Forrester)

Training costs decrease 52% when video becomes searchable knowledge (Brandon Hall Group)

Meeting follow-through improves 78% with AI-tracked commitments (Harvard Business Review)

5 Steps to Transform Video into Memory

1. Audit Your Video Assets

Identify where video is created and stored in your organization. Map the types (meetings, calls, training), volumes, and current access patterns. Most organizations discover they have far more valuable video than they realized.

2. Prioritize High-Value Use Cases

Start with video that has clear intelligence value: sales calls, customer interactions, or training content. These use cases demonstrate ROI quickly and build organizational confidence for expansion.

3. Implement Video Memory Infrastructure

Deploy a platform designed for video intelligence, such as VideoDB. The infrastructure should handle indexing, storage, querying, and agent integration as a unified system.

4. Connect to AI Agents

Integrate video memory with your AI systems using standard protocols. Existing agents gain the ability to see and recall video content without requiring custom development.

5. Expand and Iterate

As initial use cases prove value, extend video memory across the organization. The infrastructure scales, and the compound value of accumulated video intelligence grows over time.

Expert Perspectives

"We're at an inflection point. The organizations that figure out how to make video truly accessible to AI will have a decade of advantage. Video contains information that text never can. Making it queryable is the unlock."

— Perspective aligned with ideas shared by Andrew Ng, Founder of DeepLearning.AI

"AI memory is not about storage. It is about intelligent access. Video memory infrastructure solves the access problem, transforming archives into active intelligence resources."

— Perspective aligned with ideas shared by Fei-Fei Li, Professor at Stanford University

Conclusion: The Infrastructure Opportunity

Video is the richest data source most organizations possess. It captures the full context of human interaction in ways that text and structured data cannot. Yet for AI systems, video has remained invisible.

The shift from video files to video memory changes this fundamentally. When video becomes queryable data, AI agents gain:

Access to the complete context of organizational knowledge

The ability to recall specific experiences, not just retrieve documents

Visual and temporal understanding that text cannot provide

True memory that compounds in value over time

Platforms like VideoDB provide the infrastructure layer that makes this transformation possible. By treating video as structured, queryable data, they enable a new category of AI capability.

The question for organizations is not whether video intelligence matters. It is how quickly they can build the infrastructure to unlock it. The video is already there. The capability to understand it is now available. What remains is the decision to connect them.

FAQs

Q: How is this different from video transcription?

A: Transcription captures words. Video memory captures everything: visual content, facial expressions, gestures, context, timing, and the relationships between moments. It is the difference between reading a script and watching the performance.

Q: What about cost? Isn't video processing expensive?

A: Traditional frame-by-frame processing is expensive. Video memory infrastructure uses intelligent indexing and selective retrieval, processing moments rather than entire files. Costs are typically 85-90% lower than brute-force approaches, making video intelligence practical at scale.
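Frame sampling is one common way indexing avoids brute-force, frame-by-frame analysis. The arithmetic below compares analyzing every frame of a one-hour, 30 fps recording against sampling one frame every two seconds. Both rates are assumptions chosen for illustration. Visual analysis is also only one cost among several (speech processing, storage, query-time work), so this figure is not a derivation of the 85-90% estimate above.

```python
def frames_analyzed(duration_s: float, frames_per_s: float) -> int:
    """Number of frames a visual model would process at a given rate."""
    return round(duration_s * frames_per_s)


ONE_HOUR_S = 3600
every_frame = frames_analyzed(ONE_HOUR_S, 30)   # native 30 fps
sampled = frames_analyzed(ONE_HOUR_S, 0.5)      # one frame every 2 seconds
reduction = 1 - sampled / every_frame

print(f"{every_frame} vs {sampled} frames: {reduction:.1%} fewer")
```

This prints `108000 vs 1800 frames: 98.3% fewer`. The right sampling rate depends on how quickly the content changes: a slide-driven meeting tolerates sparse sampling, while fast-moving security footage may not.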

Q: How does privacy work with video memory?

A: Enterprise video memory platforms include role-based access controls, automatic PII detection and redaction, consent management, encrypted storage, audit logging, and compliance with GDPR, CCPA, and industry regulations. Privacy is built into the architecture.
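As a deliberately simple illustration of the redaction step applied to a transcript, here is a regex-based sketch. It does not reflect how any particular platform works. Regexes alone miss most PII (names, addresses, account numbers) and produce false positives (some dates match the phone pattern below). The patterns and the `redact` helper are hypothetical.

```python
import re

# Illustrative patterns only; production PII detection needs much broader
# coverage and usually combines rules with trained models.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses, then phone-like digit runs, with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


line = "Reach me at jane.doe@example.com or +1 (555) 010-2345 after the call."
print(redact(line))
```

This prints `Reach me at [EMAIL] or [PHONE] after the call.` Emails are redacted first so that digits inside an address cannot be mistaken for a phone number.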

Q: Can this work with existing video archives?

A: Yes. Video memory platforms can ingest existing recordings and index them for intelligent access. The value of your archive increases immediately because content that was difficult to find becomes searchable and queryable.

Q: How does this integrate with current AI tools?

A: Modern video memory platforms like VideoDB support standard protocols like MCP (Model Context Protocol), enabling integration with MCP-compatible LLM clients and agent frameworks. Your existing AI investments gain video capabilities through standardized connections.

Q: What types of video work best?

A: Any video with information value: meetings, sales calls, support interactions, training content, product demos, user research sessions, and more. The common thread is video that contains knowledge worth accessing more than once.

Key Takeaways

Video is trapped in files: Most organizational video is invisible to AI, locked in formats that only humans can access through manual viewing.

Video as data transforms capability: When video becomes queryable, agents can answer questions about visual content, not just text.

Memory differs from retrieval: True video memory enables AI to recall and reason over experiences, not just find matching clips.

The business impact is substantial: Organizations report 156% improvement in insight accuracy and 67% faster decision-making with video intelligence.

Infrastructure is the enabler: Purpose-built platforms like VideoDB provide the indexing, storage, and query capabilities that video memory requires.

Privacy is built in: Enterprise-grade video memory includes comprehensive access controls, encryption, and compliance features.

Integration is standardized: MCP and other protocols enable video memory to connect with existing AI systems.

The value compounds: Unlike static archives, video memory becomes more valuable as more content is indexed and more patterns are discovered.