Apr 17, 2026

Harmonizing AI: Mastering Synchronized Audio-Visual Content Generation

Explore AI-driven synchronized audio-visual content generation, focusing on techniques, applications, and challenges in creating cohesive multimodal experiences.

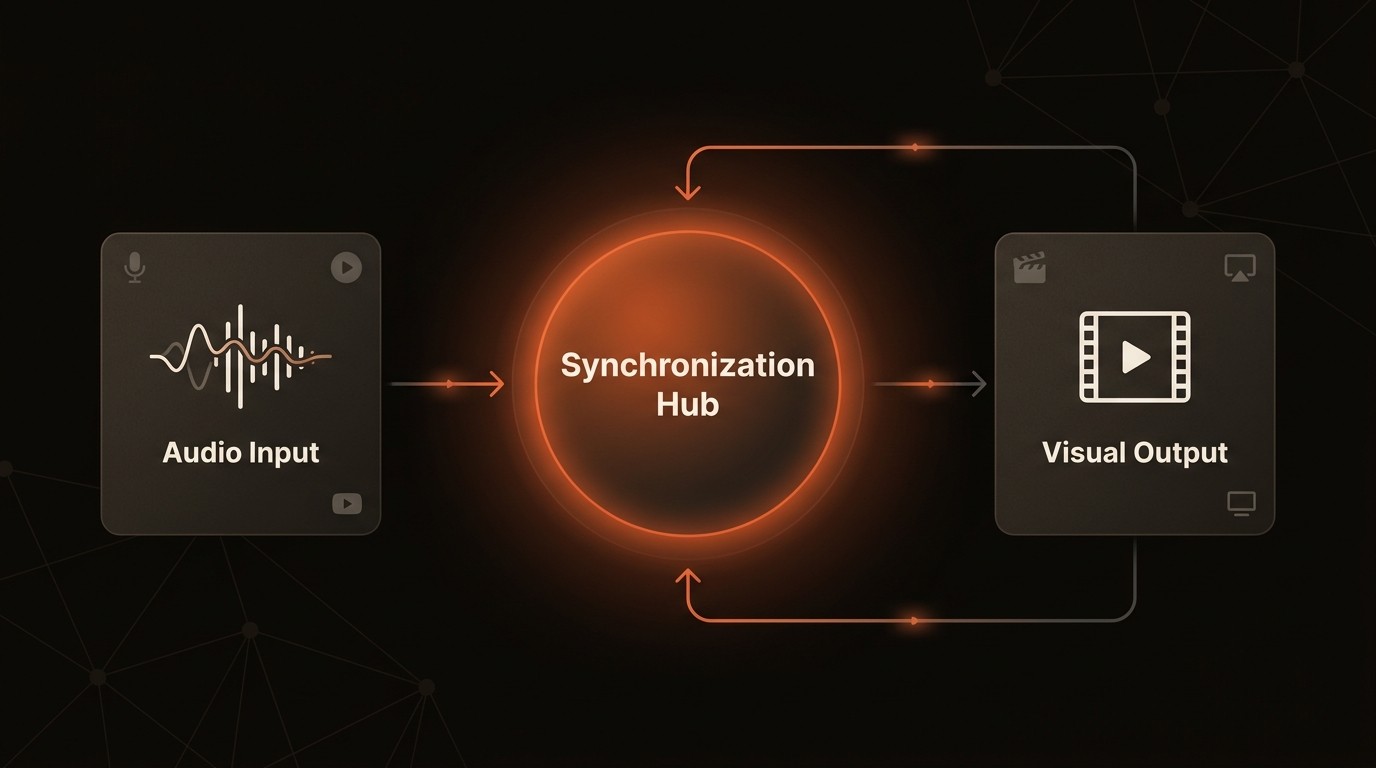

The Art of Synchronization: AI in Audio-Visual Content

Demand for AI content creation is projected to grow by 30% annually over the next five years, according to Gartner. The growth is driven by the need for more engaging and interactive experiences, particularly in film, gaming, and virtual reality. The core challenge is synchronizing audio and visual elements, which requires both precise temporal alignment and coherent content across the two streams.

The intricacies of synchronized audio-visual generation are not just technical hurdles but also creative challenges. Developers and researchers are tasked with ensuring that the generated content not only aligns temporally but also maintains semantic coherence. This is crucial for creating immersive experiences that captivate audiences and enhance user engagement.

Advances in deep learning have significantly improved the quality and coherence of generated audio-visual content. Challenges remain, however, from maintaining temporal consistency to ensuring that the audio and visual elements complement each other. The techniques and applications covered here show that the future of content creation is not only about automation but about harmonizing AI systems to produce cohesive multimodal experiences.

Challenges in Synchronized Audio-Visual Generation

One of the primary challenges in synchronized audio-visual generation is maintaining temporal consistency. This involves ensuring that audio and visual elements are perfectly aligned in time, which is crucial for creating a seamless user experience. For instance, in a virtual reality application, any lag between audio and visual cues can disrupt the user's immersion, leading to a less engaging experience.
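A minimal sketch of how such a lag could be measured, assuming both signals are available as 1-D NumPy arrays at the same sample rate (for example, a reference soundtrack and the audio captured at playback). The function name `estimate_lag` and the synthetic test signal are illustrative assumptions, not part of any specific tool:

```python
import numpy as np


def estimate_lag(reference: np.ndarray, delayed: np.ndarray) -> int:
    """Return the shift in samples that best aligns `delayed` with `reference`.

    A positive result means `delayed` starts later than `reference`.
    """
    ref = reference - reference.mean()
    dly = delayed - delayed.mean()
    # Full cross-correlation covers every possible relative shift.
    corr = np.correlate(dly, ref, mode="full")
    # Index len(ref) - 1 of the full output corresponds to zero lag.
    return int(np.argmax(corr)) - (len(ref) - 1)


# Synthetic check: noise delayed by 25 samples (hypothetical data).
rng = np.random.default_rng(0)
reference = rng.standard_normal(1000)
delayed = np.concatenate([np.zeros(25), reference[:-25]])

sample_rate = 48_000  # assumed sample rate in Hz
lag_samples = estimate_lag(reference, delayed)
lag_ms = 1000.0 * lag_samples / sample_rate
```

Dividing the lag by the sample rate converts it to time, which can then be compared against whatever tolerance the application sets for audio-visual offset.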

Another significant challenge is achieving semantic coherence between the modalities. This means that the audio and visual components must not only be synchronized in time but also convey a unified message. In film production, for example, a mismatch between the soundtrack and the visual narrative can lead to confusion and diminish the emotional impact of a scene.

The complexity of multimodal AI models also presents a challenge. These models must be capable of processing and synchronizing diverse data types, such as audio signals and video frames, in real time. This requires sophisticated algorithms and significant computational resources, which can be a barrier for smaller developers and researchers.

Finally, the integration of AI-driven tools into existing content creation workflows can be challenging. Many traditional workflows are not designed to accommodate the dynamic nature of AI-generated content, requiring significant adjustments and retraining of personnel. This can lead to increased costs and delays in production, impacting the overall efficiency of the content creation process.

Understanding the Technology Behind Synchronized Content

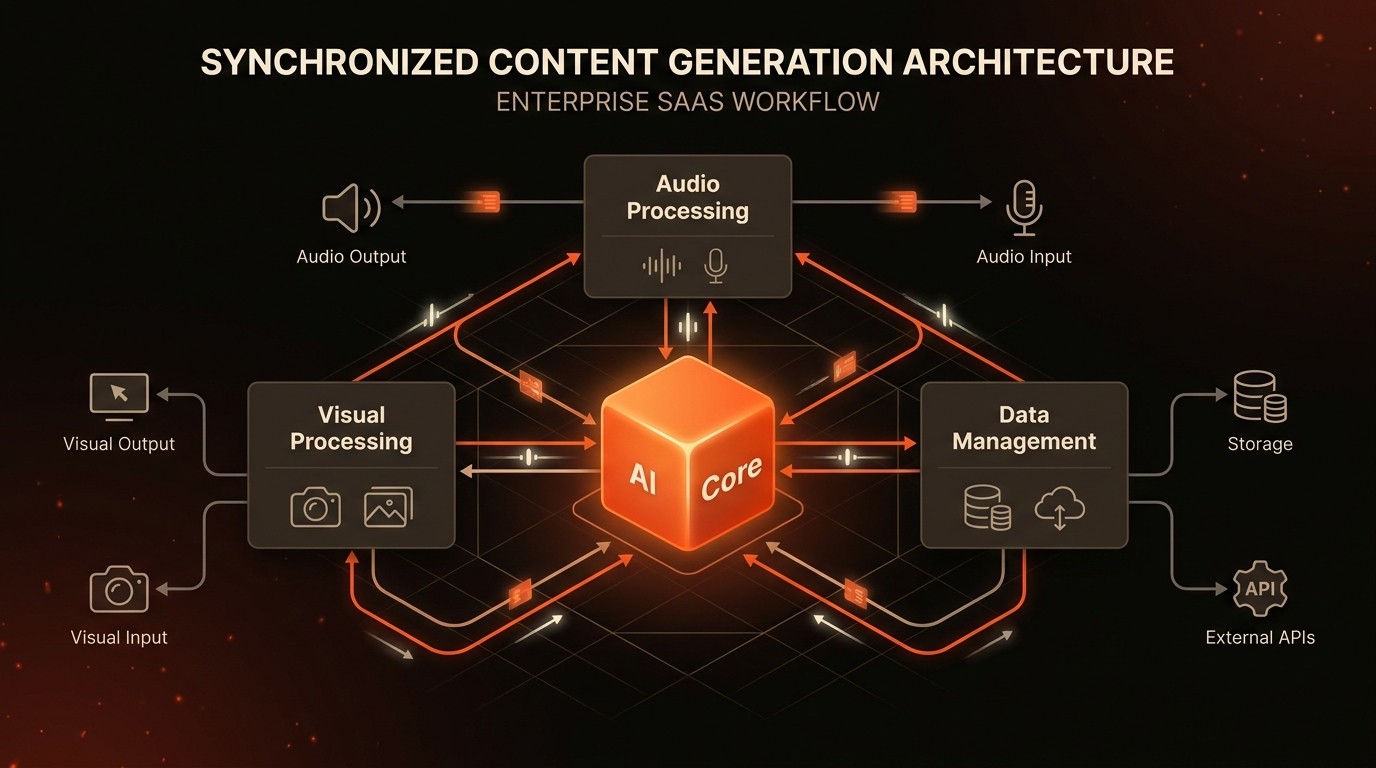

Deep Learning and Generative Models

Deep learning forms the backbone of synchronized audio-visual content generation. By leveraging neural networks, these models can learn complex patterns in data, enabling them to generate realistic audio and visual content. Generative models, such as GANs (Generative Adversarial Networks), are particularly effective in this domain, as they can create high-quality content by learning from vast datasets.

Multimodal Learning

Multimodal learning is a critical component of synchronized content generation. It involves training AI models to process and integrate multiple types of data, such as audio and visual inputs. This approach allows for more comprehensive understanding and generation of content, as the models can draw on information from multiple sources to create a cohesive output. For more on this, see Multimodal Learning.
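One common way to integrate two modalities is cross-attention, where features from one stream query features from the other. The sketch below is a bare scaled dot-product version in NumPy, assuming per-frame video and audio feature vectors of the same dimension already exist. The learned projection layers that a real model would include are omitted, and all names and shapes are illustrative:

```python
import numpy as np


def cross_modal_attention(video_q: np.ndarray,
                          audio_k: np.ndarray,
                          audio_v: np.ndarray):
    """Let each video frame attend over all audio frames.

    video_q: (n_video, d) query features
    audio_k: (n_audio, d) key features
    audio_v: (n_audio, d_v) value features
    Returns (attended, weights) with shapes (n_video, d_v) and (n_video, n_audio).
    """
    d = video_q.shape[-1]
    scores = video_q @ audio_k.T / np.sqrt(d)
    # Subtract the row maximum for a numerically stable softmax.
    scores = scores - scores.max(axis=1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)
    return weights @ audio_v, weights


# Toy features (random, for shape illustration only).
rng = np.random.default_rng(0)
video_features = rng.standard_normal((3, 4))   # 3 video frames
audio_features = rng.standard_normal((5, 4))   # 5 audio frames
attended, weights = cross_modal_attention(video_features,
                                          audio_features,
                                          audio_features)
```

Each row of `weights` is a probability distribution over audio frames, so it can be read as a soft alignment between a given video frame and the audio track.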

Audio Signal Processing

Audio signal processing is essential for generating synchronized audio content. This involves analyzing and manipulating audio signals to ensure they align with the visual elements. Techniques such as spectral analysis and waveform synthesis are commonly used to achieve this synchronization. For further details, refer to Audio Processing.
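As a rough illustration of spectral analysis, the sketch below computes a magnitude spectrogram with a short-time Fourier transform in NumPy. It assumes 48 kHz audio and 24 fps video, so a hop of 2000 samples yields one spectral frame per video frame. The function name and these parameter values are assumptions chosen for the example:

```python
import numpy as np


def magnitude_spectrogram(signal: np.ndarray,
                          frame_len: int = 2048,
                          hop: int = 2000) -> np.ndarray:
    """Return an (n_frames, frame_len // 2 + 1) array of STFT magnitudes."""
    if len(signal) < frame_len:
        raise ValueError("signal is shorter than one analysis frame")
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    # Slice overlapping frames and apply the window to reduce leakage.
    frames = np.stack([
        signal[i * hop: i * hop + frame_len] * window
        for i in range(n_frames)
    ])
    return np.abs(np.fft.rfft(frames, axis=1))


# One second of a 440 Hz tone at an assumed 48 kHz sample rate.
sample_rate = 48_000
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 440.0 * t)

spec = magnitude_spectrogram(tone)
# Convert the strongest frequency bin back to Hz.
peak_hz = np.argmax(spec.mean(axis=0)) * sample_rate / 2048
```

Tying the hop size to the video frame duration means row `i` of the spectrogram describes the audio that starts with video frame `i`, which makes per-frame comparison between the two streams straightforward.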

Role of VideoDB

VideoDB plays a crucial role in managing and indexing the vast amounts of data involved in synchronized content generation. By providing a robust database infrastructure, VideoDB enables efficient storage and retrieval of audio-visual data, facilitating the seamless integration of AI-driven tools into content creation workflows.

By the Numbers

Here's what the data reveals:

| Metric | Current State | Impact |
|---|---|---|
| AI content demand | 30% annual increase | Expanding market opportunities |
| Deep learning advancements | Significant improvements | Enhanced content quality |
| Use in film and gaming | Increasing automation | Streamlined production processes |
| User engagement in VR/AR | Enhanced by synchronization | Improved user experiences |
| Efficiency of multimodal models | Increasing | Better data processing |

Mastering the Art of Synchronization

Temporal Alignment Techniques

Achieving precise temporal alignment is crucial for synchronized audio-visual content. Two techniques are commonly used. Dynamic time warping finds the lowest-cost monotonic mapping between the time steps of two sequences, such as an audio feature track and a visual feature track. Cross-modal attention mechanisms let a model learn which audio frames correspond to which video frames. These methods allow timing to be adjusted as content is generated or edited, so that it remains cohesive as it evolves.
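To make dynamic time warping concrete, here is a minimal sketch of the classic algorithm for two 1-D feature sequences, using absolute difference as the local cost. This is the textbook quadratic-time formulation, which suits offline alignment; the function name and toy sequences are illustrative assumptions:

```python
import numpy as np


def dtw(a, b):
    """Align sequences a and b; return (total_cost, path).

    path is a list of (index_in_a, index_in_b) pairs from start to end.
    """
    n, m = len(a), len(b)
    cost = np.full((n + 1, m + 1), np.inf)
    cost[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            # Extend the cheapest of: match, step in a only, step in b only.
            cost[i, j] = d + min(cost[i - 1, j - 1],
                                 cost[i - 1, j],
                                 cost[i, j - 1])

    # Backtrack from the end to recover the warping path.
    path = []
    i, j = n, m
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        candidates = [
            (cost[i - 1, j - 1], i - 1, j - 1),
            (cost[i - 1, j], i - 1, j),
            (cost[i, j - 1], i, j - 1),
        ]
        _, i, j = min(candidates, key=lambda c: c[0])
    path.reverse()
    return float(cost[n, m]), path


# Toy example: b repeats its first value, as if one stream started late.
audio_track = [0.0, 1.0, 2.0, 3.0]
video_track = [0.0, 0.0, 1.0, 2.0, 3.0]
total_cost, path = dtw(audio_track, video_track)
```

In this toy case the path maps the first audio step to the first two video steps and then advances both together, which is how the algorithm absorbs a local timing difference without changing the order of events.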

Semantic Coherence Strategies

Maintaining semantic coherence involves ensuring that the audio and visual components convey a unified message. This can be achieved through techniques such as cross-modal embeddings, which allow the AI to understand and generate content that is semantically aligned. For example, in a gaming scenario, this ensures that sound effects and visual cues are perfectly matched, enhancing the player's immersion.
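A small sketch of how cross-modal embeddings could be compared, assuming some pair of encoders (not specified here) has already mapped video clips and audio clips into a shared vector space. The function names and toy vectors are hypothetical; only the cosine-similarity comparison itself is standard:

```python
import numpy as np


def cosine_similarity_matrix(video_emb: np.ndarray,
                             audio_emb: np.ndarray) -> np.ndarray:
    """Return an (n_video, n_audio) matrix of cosine similarities."""
    v = video_emb / np.linalg.norm(video_emb, axis=1, keepdims=True)
    a = audio_emb / np.linalg.norm(audio_emb, axis=1, keepdims=True)
    return v @ a.T


def best_audio_match(video_emb: np.ndarray,
                     audio_emb: np.ndarray) -> np.ndarray:
    """For each video clip, return the index of the most similar audio clip."""
    return np.argmax(cosine_similarity_matrix(video_emb, audio_emb), axis=1)


# Toy 2-D embeddings standing in for real encoder outputs.
video_embeddings = np.array([[1.0, 0.0],
                             [0.0, 1.0]])
audio_embeddings = np.array([[0.0, 2.0],
                             [3.0, 0.1]])
matches = best_audio_match(video_embeddings, audio_embeddings)
```

A low similarity between a clip and its paired audio can also serve as a simple flag that the two may not be semantically aligned and are worth reviewing.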

Integration with VideoDB

Integrating AI-driven tools with VideoDB can streamline the content creation process. By leveraging VideoDB's robust database infrastructure, developers can efficiently manage and retrieve audio-visual data, facilitating seamless synchronization. This integration reduces the time and effort required to produce high-quality content, allowing creators to focus on the creative aspects of their projects.

Practical Application Example

Consider a virtual reality training simulation for medical professionals. By using synchronized audio-visual content, the simulation can provide realistic scenarios that enhance learning outcomes. The precise alignment of audio cues with visual demonstrations ensures that trainees receive a comprehensive understanding of procedures, improving their skills and confidence.

In Practice: Real-World Applications

Film and Animation

In the film and animation industry, synchronized audio-visual content is revolutionizing the production process. By automating the synchronization of soundtracks with visual sequences, filmmakers can streamline post-production workflows. This not only reduces costs but also enhances the creative possibilities, allowing directors to experiment with more complex audio-visual narratives.

Gaming

The gaming industry is leveraging synchronized content to create more immersive experiences. By aligning sound effects and visual cues, developers can enhance the realism of their games, drawing players deeper into the virtual worlds. This synchronization is particularly crucial in fast-paced action games, where split-second timing can make the difference between victory and defeat.

Virtual and Augmented Reality

In virtual and augmented reality applications, synchronized audio-visual content is essential for creating engaging user experiences. By ensuring that audio cues are perfectly aligned with visual elements, developers can enhance the sense of presence and immersion. This is particularly important in training simulations, where realistic scenarios can significantly improve learning outcomes.

Industry Voices

Andrew Ng, Founder of Landing AI, has discussed the growing importance of multimodal AI in building more intuitive and interactive user experiences, particularly as systems integrate multiple data types such as text, audio, and visual inputs.

Fei-Fei Li, Professor at Stanford University, has emphasized the importance of advancing multimodal AI systems, where challenges such as maintaining temporal consistency and semantic coherence across modalities remain key research areas.

Getting Started with Synchronized Content Generation

To successfully implement synchronized audio-visual content generation, follow these steps:

Audit Current Workflows: Begin by identifying the most time-consuming processes in your current content creation workflows. Document processing times and error rates to establish baseline metrics for comparison after implementation.

Select Appropriate Tools: Choose AI-driven tools that are compatible with your existing infrastructure. Consider integrating VideoDB to manage and index your audio-visual data efficiently.

Train Multimodal Models: Invest in training multimodal AI models that can process and synchronize diverse data types. This will ensure that your content remains cohesive and engaging.

Test and Iterate: Conduct thorough testing to ensure that your synchronized content meets quality standards. Use feedback from users to make iterative improvements, enhancing the overall user experience.

Monitor and Optimize: Continuously monitor the performance of your synchronized content generation processes. Use analytics to identify areas for optimization, ensuring that your workflows remain efficient and effective.

FAQ

Q: What is the difference between traditional and AI-driven content creation?

A: Traditional content creation relies heavily on manual processes, which can be time-consuming and error-prone. AI-driven content creation automates many of these processes, which can shorten production times and improve the consistency of the output.

Q: How does synchronized audio-visual content enhance user engagement?

A: By ensuring that audio and visual elements are perfectly aligned, synchronized content creates a more immersive experience. This enhances user engagement by making interactions more intuitive and realistic.

Q: What are the key challenges in implementing synchronized content generation?

A: The main challenges include maintaining temporal consistency, achieving semantic coherence, and integrating AI-driven tools into existing workflows. Overcoming these challenges requires sophisticated algorithms and significant computational resources.

Q: How can VideoDB assist in synchronized content generation?

A: VideoDB provides a robust database infrastructure for managing and indexing audio-visual data. This facilitates the seamless integration of AI-driven tools, streamlining the content creation process.

Q: What industries benefit most from synchronized audio-visual content?

A: Industries such as film, gaming, and virtual reality benefit significantly from synchronized content. It enhances the quality and engagement of their products, leading to improved user experiences and outcomes.

Key Takeaways

AI content demand is projected to increase by 30% annually.

Deep learning advancements enhance content quality and coherence.

Multimodal AI models improve data processing and synchronization.

VideoDB facilitates efficient management of audio-visual data.

Synchronized content enhances user engagement in VR/AR applications.