Apr 17, 2026

Beyond Text: A Technical Guide to Multimodal RAG Systems

Explore multimodal RAG, the advanced AI technique integrating text, images, and video. A technical guide for AI engineers on its architecture and applications.

The Next Frontier: From Language Models to Perception Models

The generative AI landscape is moving at an unprecedented pace, but for all their fluency, Large Language Models (LLMs) have a fundamental limitation: they experience the world through a single sense-text. This textual constraint creates a gap between the model's understanding and our rich, multisensory reality. The multimodal AI market, projected to hit $4.5 billion by 2028 with a staggering 35% CAGR, signals a clear industry shift toward closing this gap. The demand is not just for AI that can write, but for AI that can see, listen, and comprehend information from a variety of formats, just as humans do. This is where the next evolution of information retrieval and generation is taking place.

Enter Multimodal Retrieval-Augmented Generation (RAG), a sophisticated architecture designed to give generative models the eyes and ears they've been missing. Instead of relying solely on its internal, static training data, a multimodal RAG system dynamically retrieves relevant information from a vast corpus of text, images, audio files, and even video clips before generating a response. This process grounds the model in real-time, factual, and multi-format data, drastically reducing hallucinations and increasing the relevance and accuracy of its outputs. It transforms the LLM from a pure language processor into a more comprehensive perception and reasoning engine.

For AI engineers and developers, this transition represents a significant architectural challenge and a massive opportunity. Building these systems requires moving beyond text-based vector search to a world of multimodal embeddings and cross-modal retrieval. It involves designing data pipelines that can handle the unique characteristics of unstructured image and video data, and orchestrating complex interactions between retrieval systems and generative models. Mastering this domain is no longer a niche specialty; it is becoming a core competency for anyone building next-generation AI applications that need to interact with the world in a truly meaningful way.

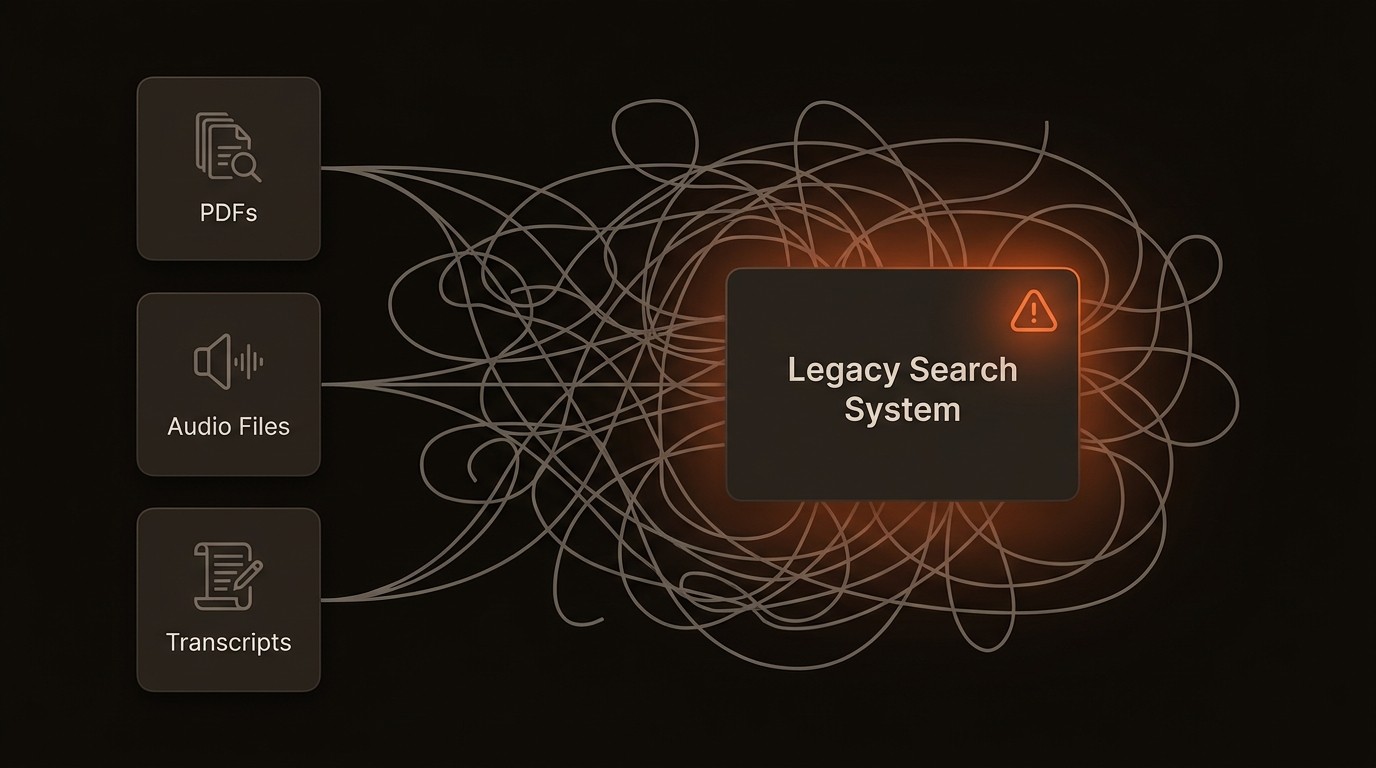

The Bottlenecks of a Text-Only World

While standard RAG has been effective at grounding LLMs in factual text, its single-modality focus creates significant bottlenecks in real-world enterprise environments. The data we rely on is inherently multimodal, and ignoring this reality leads to incomplete, inefficient, and often inaccurate AI systems. The consequences are not just theoretical; they manifest as tangible operational friction and missed opportunities. Relying on text-only systems in a multimedia world is like trying to understand a movie with your eyes closed-you get the dialogue, but you miss the entire visual context that gives it meaning.

One of the most critical limitations is contextual blindness to visual and auditory data. Consider an engineering team troubleshooting a hardware failure. The solution might be contained in a schematic diagram, a photo of a faulty component sent via a support ticket, or a video tutorial demonstrating the fix. A text-only RAG system is blind to this information. It can search through manuals and text-based reports, but it cannot "see" the diagram or "watch" the video. This forces engineers to manually bridge the gap, wasting valuable time searching across disparate, non-indexed visual asset repositories, slowing down resolution times and increasing operational costs.

Furthermore, this single-modality approach perpetuates data silos. An enterprise's knowledge base is a complex tapestry of documents, presentations, spreadsheets, video meetings, and code repositories. A text-based search might retrieve a project proposal but miss the crucial architectural diagram embedded within a slide deck or the verbal consensus reached in a recorded Zoom call. This failure to connect related information across formats means that the AI's understanding is fragmented. As a result, productivity suffers because employees cannot get a holistic view of a project or topic from a single query, forcing them to piece together information from multiple, disconnected sources.

Finally, the reliance on text alone leads to a shallow level of understanding. In fields like healthcare, a patient's story is told through both clinical notes (text) and medical imagery like X-rays or MRIs. A text-only system can analyze the notes but cannot correlate them with the visual evidence in the scans, missing critical diagnostic cues. Multimodal RAG systems, by contrast, can analyze medical images and patient records together to provide more accurate insights. This deeper, cross-modal analysis is essential for high-stakes applications where accuracy and completeness are non-negotiable. The inability to process and correlate multimodal data is a direct barrier to building truly intelligent and reliable AI.

The Architectural Blueprint of Multimodal RAG

To overcome the limitations of text-only systems, multimodal RAG introduces a more sophisticated architecture designed to process and synthesize information from diverse data types. At its core, the system works by creating a unified representation for all data, enabling seamless search and synthesis across different formats. This process relies on three key technological pillars: multimodal embeddings, cross-modal retrieval, and the final generative component. Understanding how these pieces fit together is crucial for any engineer looking to build these powerful systems.

Multimodal Embeddings: The Universal Language

The foundational technology of any multimodal system is the multimodal embedding model. These are sophisticated neural networks, such as Google's Gemini, trained to map data from different modalities-like a paragraph of text, a photograph, or a snippet of audio-into a shared high-dimensional vector space. In this space, the semantic meaning of the data is captured as a vector of numbers. The critical innovation is that semantically similar concepts, regardless of their original format, are positioned close to each other. For example, the text description "a golden retriever catching a frisbee in a park" will have a vector representation that is very close to the vector for an actual image of that scene. This creates a universal language that the AI can use to compare and relate disparate data types.

Cross-Modal Retrieval: Finding the Right Data

Once data is converted into these universal embeddings, the next step is retrieval. This is where cross-modal retrieval comes into play, powered by a specialized vector database. When a user issues a query (which can itself be multimodal, like an image and a text question), the system first converts the query into an embedding. It then uses the vector database to perform a similarity search across its entire index of multimodal vectors. Because of the shared embedding space, a text query can retrieve relevant images, an image query can find related documents, and so on. This is far more powerful than traditional keyword search, as it retrieves based on conceptual meaning rather than literal term matching. For instance, querying for "vehicle safety features" could retrieve text documents about airbags, as well as diagrams illustrating crumple zones.

The Generative Component: Synthesizing Insights

The final stage is generation. The top-ranked multimodal data retrieved from the vector database-a mix of text snippets, images, and other formats-is compiled into a rich context. This context is then passed along with the original query to a large language model. The LLM uses this grounded, multi-format information to synthesize a comprehensive and accurate answer. For example, if a user asks, "How do I replace the battery in this device?" and uploads a photo, the RAG system can retrieve a relevant text manual, a specific diagram, and a timestamped link to a video tutorial. The LLM then combines these pieces into a clear, step-by-step response that might include both text instructions and references to the retrieved visual aids. Models with large context windows, like Gemini Embedding 2 which supports up to 8192 input tokens, are particularly adept at handling this rich, combined context.

Here is a simplified representation of the workflow:

Multimodal AI by the Numbers

Here's what the data reveals about the rapid growth and technical capabilities shaping the multimodal RAG landscape:

Metric | Projection / Finding | Significance for Engineers |

|---|---|---|

RAG Market Growth | Projected to grow from USD 1.85B (2025) to USD 13.63B (2030) | Indicates massive investment and career opportunities in RAG-related skills. |

Multimodal AI Market Growth | Expected to reach $4.5 billion by 2028, growing at a 35% CAGR | Signals that multimodal capabilities are becoming a standard expectation, not a niche feature. |

System Accuracy | Multimodal RAG enhances accuracy and relevance over single-modality systems | Combining text and images provides more robust context, reducing model hallucination. |

LLM Context Capacity | Gemini Embedding 2 supports up to 8192 input tokens | Larger context windows allow for more retrieved multimodal evidence to be included in the prompt. |

Core Application | Systems can analyze medical images and patient records for insights | Demonstrates the high-stakes, real-world impact of fusing visual and textual data. |

Enterprise Productivity | Can retrieve documents, presentations, or diagrams to improve search | Directly addresses the pain point of siloed, multi-format enterprise knowledge. |

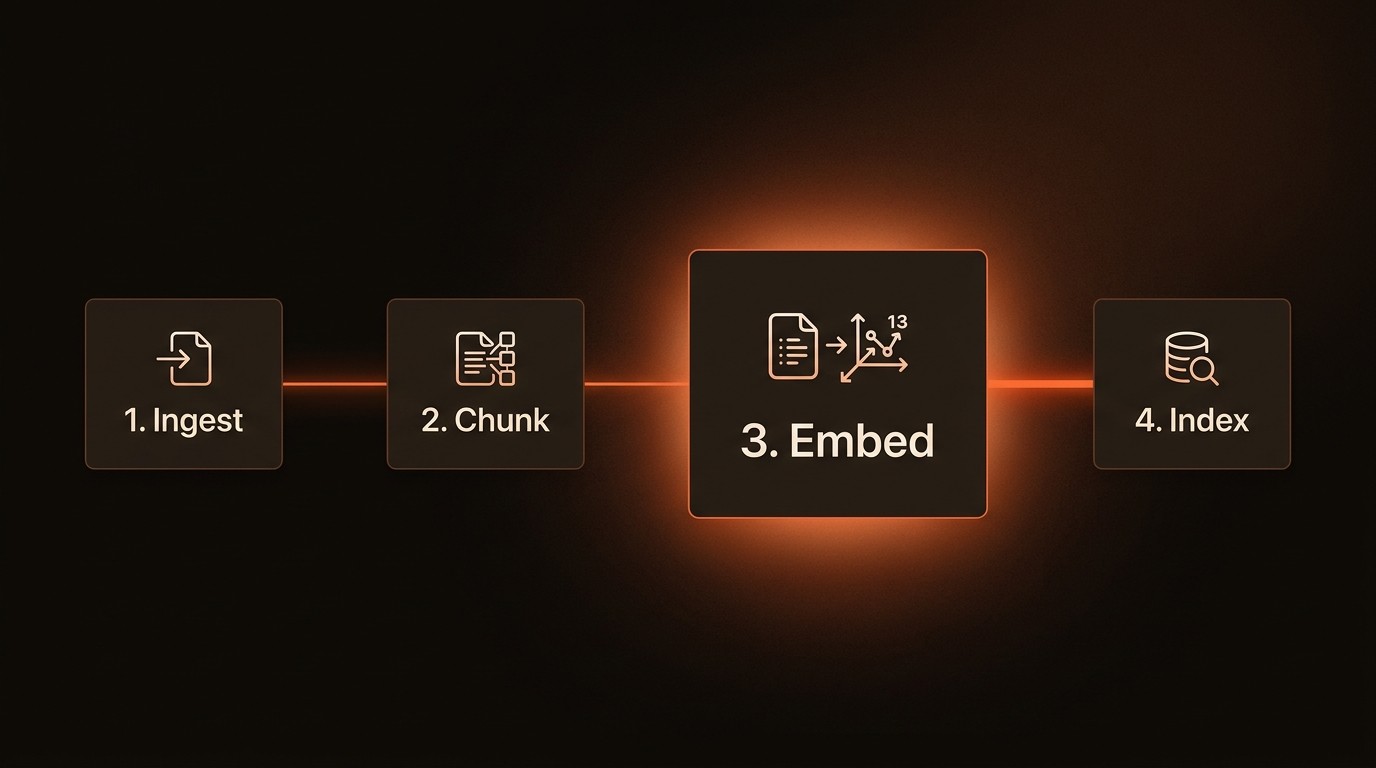

Building a Robust Multimodal RAG Pipeline

Architecting a production-grade multimodal RAG system requires a thoughtful approach that extends beyond simple vector similarity search. It involves creating a resilient data ingestion pipeline, implementing sophisticated retrieval logic, and carefully crafting the final prompt for the generative model. Success depends on mastering each stage of this complex workflow to ensure the final output is not only relevant but also accurate and reliable. This is where deep engineering expertise comes into play, transforming a promising concept into a functional and scalable application.

Unified Data Ingestion and Embedding

The first step is to process and embed your diverse corpus of data. This is more than just a one-off script; it's a continuous data pipeline. You need different pre-processing steps for each modality: text needs cleaning and chunking, images need resizing and normalization, and video requires frame extraction or audio transcription. For video content, a platform like VideoDB can be invaluable, handling the complex tasks of decoding, chunking, and preparing video streams for embedding. Once pre-processed, you feed the data into a multimodal embedding model to generate the vectors. The key challenge here is consistency and scalability. Your pipeline must be able to handle large volumes of data and be robust enough to manage different file formats and potential corruption without failing.

Advanced Retrieval Strategies

Simple vector search is a good start, but state-of-the-art systems use more advanced retrieval techniques. One powerful approach is hybrid search, which combines the semantic power of vector search with the precision of traditional keyword search. This is useful for queries containing specific identifiers like product SKUs or proper nouns. Another key technique is re-ranking. The initial retrieval from the vector database might return hundreds of potentially relevant results. A secondary, more computationally expensive re-ranking model can then analyze the top candidates in more detail to promote the most relevant items to the very top. This multi-stage process ensures both speed and high precision, which is critical for user-facing applications.

Contextual Fusion and Generation

How you present the retrieved multimodal data to the LLM is a critical and often overlooked step. Simply concatenating all the retrieved text and adding links to images is inefficient. As Amr Awadallah, founder & CEO of Vectara, noted, stuffing the context window with irrelevant or noisy information can cause the model to struggle. A better approach is contextual fusion. This involves summarizing retrieved text, generating captions for images, and creating a structured prompt that clearly delineates each piece of evidence. For example, you might format the context with clear markdown headers for each retrieved document or image, helping the LLM navigate the information more effectively. This stage is an art as much as a science and often requires significant iteration and prompt engineering to perfect.

A conceptual Python snippet for a multimodal query might look like this:

Multimodal RAG in Practice: From Healthcare to the Enterprise

The theoretical power of multimodal RAG translates into tangible value across numerous industries. By enabling AI to perceive and reason with a richer, more complete set of information, these systems are solving complex problems that were previously intractable for text-only models. The applications range from high-stakes medical diagnostics to enhancing everyday enterprise productivity, demonstrating the versatility and impact of this technology.

Advanced Medical Diagnostics

In healthcare, diagnostic accuracy is paramount. A radiologist's workflow often involves analyzing medical images, such as CT scans or X-rays, while cross-referencing the patient's electronic health record (EHR), which contains text-based clinical notes, lab results, and medical history. A multimodal RAG system can supercharge this process. When a physician queries about a specific patient's scan, the system can retrieve not only similar images from a medical archive but also relevant excerpts from the patient's history and the latest medical journals. The generative model can then synthesize this information to highlight potential areas of concern, suggest differential diagnoses, or cite recent research relevant to the visual findings, acting as a powerful assistant to the human expert.

Intelligent Enterprise Search

Modern enterprises run on a dizzying array of data formats: reports in PDFs, financial models in spreadsheets, strategy plans in slide decks, and project discussions in video recordings. Traditional enterprise search engines struggle to connect these dots. A multimodal RAG-powered search engine transforms this experience. An employee could ask, "What was our Q3 strategy for the 'Phoenix' project and show me the final design mockup?" The system would understand the query, retrieve the relevant section from the Q3 strategy presentation, pull key financial projections from an Excel file, and find the final JPEG mockup from a design folder. It would then present a synthesized answer combining all these elements, providing a complete picture in seconds instead of hours of manual searching.

Next-Generation Customer Support

Customer support is another area ripe for multimodal innovation. Imagine a customer trying to assemble a piece of furniture. They are stuck and frustrated. Instead of typing a long, confusing description of their problem, they simply take a photo of the half-assembled product and the confusing instruction step. They upload the image to a support chatbot and ask, "What am I doing wrong here?" The multimodal RAG system analyzes the image, identifies the components, retrieves the correct section of the assembly manual (including diagrams), and perhaps even finds a short video clip demonstrating that specific step. It then generates a response that includes clear text instructions, highlights the relevant part of the diagram, and provides a link to the video, resolving the issue quickly and accurately.

Industry Voices on the Multimodal Shift

Daniel Warfield, Senior Engineer at EyeLevel.ai, noted that Multimodal Retrieval-Augmented Generation has emerged as a unique approach to increase the efficiency and reliability of AI systems by incorporating various data types such as images, audio, and video, creating richer and more contextually accurate information retrieval and generation. (2024)

Jerry Liu, CEO and co-founder of LlamaIndex, highlighted in Towards AI the importance of understanding AI at different levels of abstraction, the critical role of data quality in RAG systems, and the unique ethical considerations that arise with multimodal AI. (2023)

Amr Awadallah, founder & CEO of Vectara, observed in the Turing Post that even the largest companies are still leveraging RAG despite larger context windows. He explained that if we just stuff a model's context window full of information, some of it irrelevant or noisy, the model actually struggles to perform well, reinforcing the need for precise retrieval. (2025)

Your First Five Steps to Implementing Multimodal RAG

Transitioning from concept to implementation requires a structured approach. Building a multimodal RAG system is a significant engineering effort, but by breaking it down into manageable steps, your team can build momentum and deliver value incrementally. The key is to start with a well-defined problem and iterate, rather than attempting to build an all-encompassing system from day one. Here is a practical roadmap to guide your first implementation.

Define a Focused, High-Value Use Case: Before writing any code, identify a specific business problem where multimodal data is a critical bottleneck. Don't try to build a general-purpose search engine. Instead, focus on a narrow task, such as a system for answering questions about product schematics or a tool for analyzing customer support tickets that include images. A narrow scope reduces complexity and makes it easier to measure success and demonstrate ROI.

Select Your Core Technology Stack: Your stack will consist of three main components: an embedding model, a vector database, and a generative model. Evaluate multimodal embedding models like Gemini or open-source alternatives based on their performance on your specific data types. Choose a vector database that is scalable and optimized for the high-dimensional vectors these models produce. Finally, select an LLM that has a large context window and strong instruction-following capabilities.

Build the Data Ingestion Pipeline: This is the foundational engineering work. Develop scripts and workflows to process your source data-text, images, audio, and video. This involves cleaning, chunking, and running the data through your chosen embedding model. For complex media like video, consider leveraging a specialized platform like VideoDB to handle the heavy lifting of decoding and frame extraction, allowing your team to focus on the core RAG logic.

Develop the Retrieval and Generation Logic: Start with a baseline retrieval strategy, such as k-Nearest Neighbor (k-NN) search in your vector database. Implement the logic that takes a user query, embeds it, retrieves the top-k results, and formats them into a prompt for the LLM. This is where you will spend most of your time experimenting. Test different prompting strategies to see how you can best present the multimodal context to the LLM to get the most accurate and helpful responses.

Test, Evaluate, and Iterate: Evaluation is critical. Develop a set of "golden" question-answer pairs based on your use case to serve as a benchmark. Measure the relevance of your retrieval results (precision and recall) and the quality of the final generated answers. Use this feedback to tune your system, whether that means adjusting your data chunking strategy, trying a different embedding model, or refining your prompts. Continuous iteration is the key to moving from a proof-of-concept to a production-ready system.

Common Questions About Multimodal RAG

Q: What is the main difference between multimodal RAG and standard text-based RAG?

A: The primary difference is the type of data they can handle. Standard RAG retrieves information solely from a text corpus to augment an LLM's context. Multimodal RAG expands this capability by ingesting, embedding, and retrieving from diverse data types including images, audio, and video, allowing the LLM to generate responses based on a much richer, more comprehensive understanding of the available information.

Q: How do multimodal embeddings actually work?

A: Multimodal embeddings are created by large neural networks trained on vast datasets containing paired data from different modalities (e.g., images and their text captions). Through this training, the model learns to create a shared "semantic space" where the vector for an image is mathematically close to the vector for its descriptive text. This allows for meaningful comparisons and retrieval across different data formats based on their underlying meaning, not just surface-level features.

Q: What are the biggest challenges in building a multimodal RAG system?

A: The top challenges are data quality, model selection, and system complexity. Ensuring high-quality, well-organized source data across all modalities is a significant undertaking. Selecting the right embedding and generative models that perform well on your specific domain can require extensive evaluation. Finally, the overall system architecture, from the ingestion pipeline to the retrieval and prompting logic, is inherently more complex than a text-only system.

Q: Is multimodal RAG expensive to implement?

A: The cost can vary significantly based on scale and approach. Key cost drivers include the computational expense of running embedding models (especially for large video files), the storage and query costs of a managed vector database, and the API costs for the generative LLM. However, starting with open-source models and a focused, small-scale use case can help manage initial costs, allowing teams to prove value before scaling up their investment.

Q: How does this technology affect the role of a vector database?

A: Multimodal RAG elevates the importance of the vector database from a simple storage layer to a critical component of the AI reasoning process. The database must not only store vectors efficiently but also perform ultra-fast, scalable similarity searches across billions of entries. Its performance directly impacts the retrieval speed and relevance of the entire RAG system, making it a core piece of infrastructure for any serious multimodal application.

Key Takeaways

The RAG market is projected to surge to USD 13.63 billion by 2030, with multimodal capabilities driving significant growth.

Multimodal RAG moves beyond text, integrating images, audio, and video to provide richer context and dramatically improve AI accuracy.

The core architecture relies on multimodal embeddings to create a universal language and a vector database for efficient cross-modal retrieval.

Real-world applications in healthcare, enterprise search, and customer support demonstrate the technology's tangible business impact.

Successful implementation requires a strategic approach focused on a specific use case, a robust data pipeline, and continuous iteration.