Apr 17, 2026

Unlock Insights: Mastering Semantic Video Search for Enhanced Understanding

Explore the power of semantic video search to unlock deep insights from your content. A technical guide for AI developers on moving beyond keywords to true video understanding.

The Unseen Library: Drowning in a Deluge of Video Data

By 2023, a staggering 82% of all internet traffic originated from video streaming and downloads, a figure projected to hold steady as video becomes the default medium for communication, entertainment, and knowledge transfer. This explosion has created a paradox of information. We have access to more visual data than ever before, yet our ability to navigate, understand, and extract value from it remains surprisingly primitive. The vast majority of this content sits in digital archives like an unindexed library-a collection of valuable stories and data points, effectively invisible and inaccessible to those who need them most. The core of the problem lies in how we search.

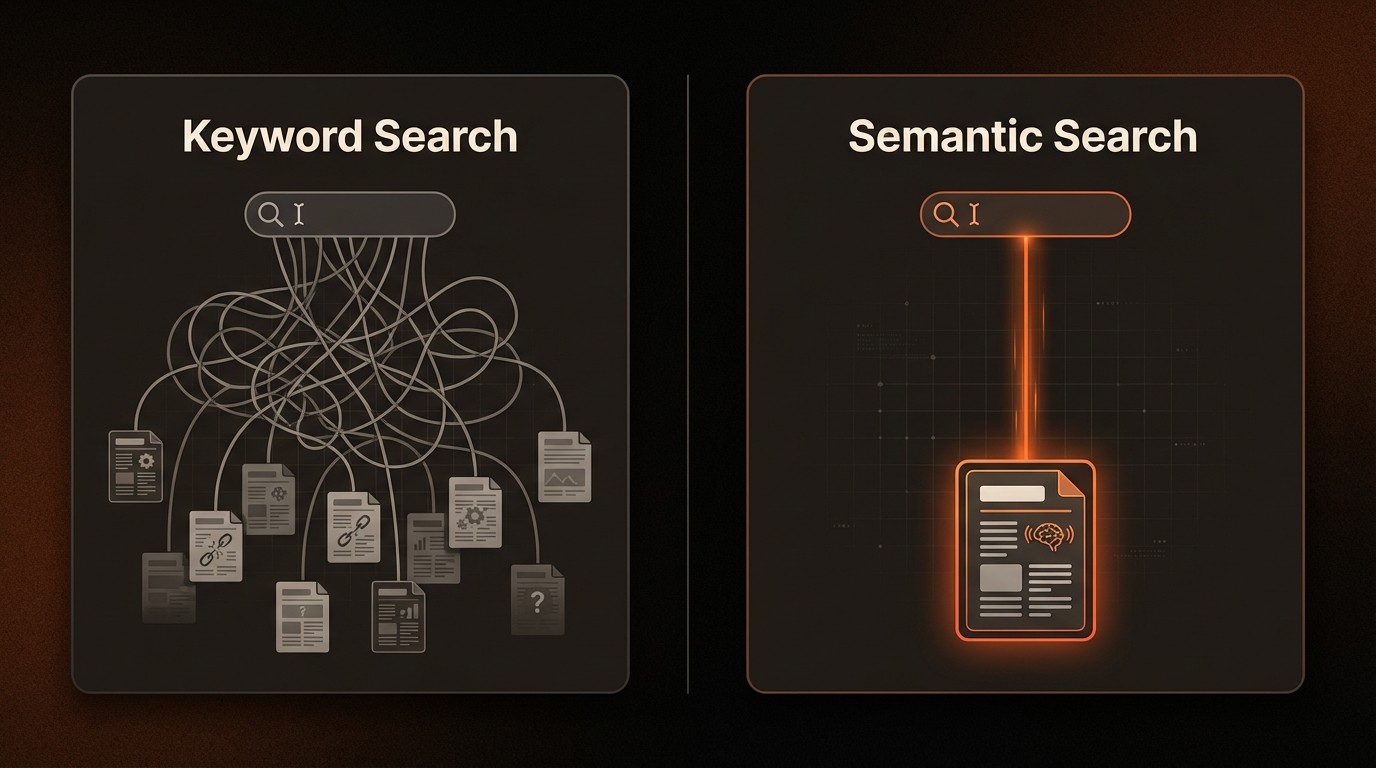

Traditional search methods, built on keywords and manually applied metadata, are fundamentally broken when applied to the fluid, context-rich nature of video. Imagine trying to find a specific three-second clip in a two-hour corporate town hall where a CTO explains a complex architectural diagram. A keyword search for "architecture" might return the entire video, leaving a developer to manually scrub through hours of footage. This is not just an inconvenience; it's a massive drain on productivity, a barrier to insight, and a significant source of missed opportunities. The inability to pinpoint specific moments, concepts, and actions within video content represents a critical bottleneck for nearly every industry.

The solution requires a paradigm shift from lexical matching to conceptual understanding. This is the domain of semantic video search, a transformative approach that leverages artificial intelligence to interpret the meaning and context within video content. Instead of searching for simple text strings, this technology allows users to search for ideas, objects, spoken phrases, and even abstract concepts. It enables you to ask your video library, "Show me every instance where our product logo appears next to a competitor's," or "Find the moment in our user interviews where a customer expressed frustration." This is the key to unlocking the true potential of the world's fastest-growing data source.

The High Cost of Context Blindness

The limitations of traditional video search extend far beyond simple user frustration, creating tangible operational and financial burdens for organizations. Relying on manual tagging and keyword-based systems introduces significant inefficiencies and risks that compound at scale. These systems are not just outdated; they are fundamentally ill-equipped for the complexity and volume of modern video, leading to a series of critical pain points that directly impact the bottom line and stifle innovation. Each challenge underscores the urgent need for a more intelligent, context-aware approach to video analysis.

First, the dependency on manual review and tagging is a severe operational drag. Internal analysis shows that semantic video analysis can reduce manual video review time by up to 70%. Without this automation, teams spend countless hours watching, logging, and tagging content-a process that is not only mind-numbingly tedious but also expensive, slow, and prone to human error. For a media company managing thousands of hours of new footage daily, this manual effort translates into delayed content delivery, increased labor costs, and a slow, reactive workflow that cannot keep pace with audience demand. The opportunity cost is immense, as skilled professionals are relegated to low-value tasks instead of creative or analytical work.

Second, keyword-based search suffers from inherent ambiguity and a lack of contextual awareness. As highlighted by Google's own research, semantic search significantly enhances accuracy by understanding user intent. A query for "shot on the green" could refer to a golf course, a film set's green screen, or a specific color. A keyword search engine has no way to disambiguate this without additional context, leading to a high volume of irrelevant results. This forces users to sift through noise, rephrase their queries multiple times, and often abandon their search altogether. For an e-learning platform, this means a student can't find the exact moment a professor explains a difficult concept, diminishing the platform's value.

Third, and perhaps most critically, traditional methods fail to capture unspoken or purely visual information. You cannot find a "tense negotiation" or a "successful product demonstration" if those exact words are not present in the title, description, or spoken dialogue. This vast layer of implicit information-body language, on-screen action, visual sentiment-is completely invisible to keyword-based systems. A compliance team reviewing security footage for suspicious behavior, for example, cannot search for "person acting nervously." They are forced back to manual, linear review, a process that is both inefficient and unreliable for detecting subtle but critical events.

Deconstructing Video: The Technology Behind Semantic Search

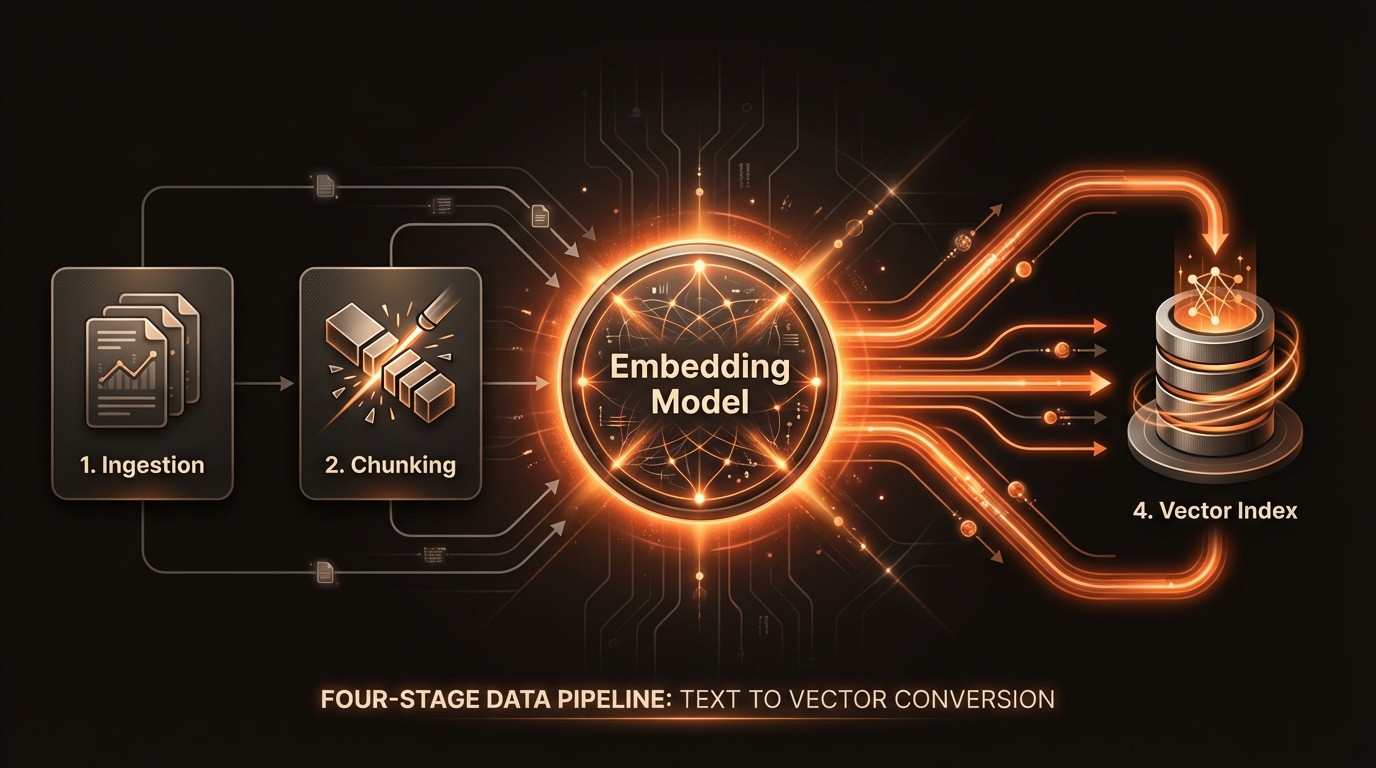

To move from matching words to understanding meaning, semantic search relies on a sophisticated pipeline of AI and data management technologies. This process transforms unstructured video data-a continuous stream of pixels and audio waves-into a structured, queryable format. It's a multi-layered approach that mimics human cognition, analyzing visual, auditory, and textual cues in concert to build a comprehensive understanding of the content. This foundational process is what enables a system like VideoDB to deliver precise, context-aware results from complex natural language queries.

From Pixels to Embeddings

The first step in understanding video is converting its raw data into a format that machine learning models can process. This is achieved by generating embeddings-dense numerical vector representations of the content. For video, this is a multimodal task. Computer vision models, such as Convolutional Neural Networks (CNNs) or Vision Transformers (ViTs), analyze individual frames or short clips to identify objects, scenes, and actions, encoding this visual information into a vector. Simultaneously, audio tracks are processed by speech-to-text models to generate transcripts, and these transcripts are then fed into Natural Language Processing (NLP) models like BERT to create text embeddings that capture semantic meaning. The result is a set of vectors for each segment of the video, representing what was seen and what was said.

The Vector Database: An Index of Meaning

Once these embeddings are generated, they need to be stored and indexed for efficient retrieval. This is the role of a specialized vector database. Unlike a traditional relational database that queries exact matches on structured data (like text or numbers), a vector database is optimized for similarity search. When a user submits a query-for example, "a person hiking in the mountains at sunset"-the query itself is converted into an embedding using the same AI models. The vector database then uses algorithms like Approximate Nearest Neighbor (ANN) to rapidly find the vectors in its index that are closest in multi-dimensional space to the query vector. This "closeness" corresponds to semantic similarity, allowing the system to find relevant clips even if they don't contain the exact keywords.

Multimodal Fusion for Holistic Understanding

The most advanced semantic search systems achieve superior accuracy through multimodal fusion. This technique involves combining the embeddings from different data streams-visuals, audio transcripts, and on-screen text recognized via Optical Character Recognition (OCR)-into a single, more powerful representation. By considering all these signals together, the system can build a much richer and more robust understanding of the content. For instance, the visual of a person speaking at a podium, combined with the transcribed audio of a financial report and the on-screen text of a slide showing "Q3 Earnings," allows the system to confidently identify the clip as a "quarterly earnings presentation." This holistic approach, managed within platforms like VideoDB, is what elevates search from a simple lookup to a true comprehension engine.

By the Numbers: The Impact of AI-Powered Video Analysis

Here's what the data reveals about the challenges and opportunities in managing and searching video content at scale:

Metric | Key Statistic | Implication for Businesses |

|---|---|---|

Global Internet Traffic | Video will account for over 80% of all internet traffic by 2025. | The volume of video data is exploding, making efficient search and analysis a critical necessity. |

Manual Review Inefficiency | Semantic analysis can cut manual video review time by up to 70%. | Automation frees up thousands of hours, reducing operational costs and accelerating workflows. |

Search Relevance | Semantic search improves accuracy by understanding user intent. | Higher-quality results lead to better user engagement and faster access to information. |

Customer Satisfaction | AI-powered search can lead to a 25% improvement in satisfaction. | An intuitive and effective search experience is a key driver of user retention and loyalty. |

Market Growth | The AI video analysis market is set to reach $25.9 billion by 2027. | The industry is rapidly adopting these technologies, creating a competitive advantage for early movers. |

Architecting an Intelligent Video Search Pipeline

Implementing a robust semantic search solution involves more than just deploying a single model; it requires architecting an end-to-end data pipeline that can ingest, process, index, and serve queries efficiently. This pipeline transforms raw video files into a rich, searchable knowledge base. By breaking down the process into distinct capability areas, engineering teams can build a scalable and powerful system that delivers tangible value, leveraging platforms like VideoDB to accelerate development and manage complexity.

Automated Multimodal Ingestion and Indexing

The foundation of any semantic search system is its ability to automatically extract and index information from multiple modalities. This ingestion pipeline is the workhorse of the system. As a video is uploaded, it's first broken down into manageable segments. Each segment then passes through a series of parallel AI models: a speech-to-text model transcribes the audio, an object detection model identifies items and their locations in each frame, an OCR model extracts any on-screen text, and a scene detection model identifies shot changes. The outputs from these models, along with the raw visual and audio data, are converted into vector embeddings. This process creates a dense, time-coded index where every second of the video is described by a rich set of semantic vectors. This automated approach eliminates the 70% time cost associated with manual logging.

Natural Language Query and Contextual Understanding

With a rich index in place, the next critical capability is the search interface itself. This layer must be able to translate a user's natural language query into a vector representation that can be used to search the index. When a user asks, "Show me our CEO's comments on international expansion from the last all-hands meeting," the system doesn't just look for those keywords. It uses a powerful language model to understand the entities ("CEO," "all-hands meeting"), the concepts ("international expansion"), and the intent. This query is converted into a multimodal search vector that looks for a combination of the CEO's face (from facial recognition), the relevant spoken phrases (from the text embeddings), and the context of the specific meeting. This leads to the highly relevant results that Gartner connects to a 25% boost in customer satisfaction.

Precise Moment Retrieval and Summarization

The final piece of the puzzle is delivering the results in a useful format. Instead of just returning a link to a 90-minute video, a true semantic search system performs precise moment retrieval. It returns the exact start and end timestamps of the relevant clips, allowing the user to jump directly to the information they need. For example, a search for "discussion about Q4 budget approval" in a two-hour meeting recording should return a 45-second clip from 1:12:30 to 1:13:15. Advanced systems can take this a step further by providing AI-generated summaries of the retrieved clips or even stitching multiple relevant moments together into a concise highlight reel. This capability is what truly unlocks productivity, turning vast video archives from passive storage into active, accessible knowledge repositories.

Semantic Search in Action: Industry Use Cases

The theoretical power of semantic video search becomes concrete when applied to real-world industry challenges. Across various sectors, organizations are leveraging this technology to transform workflows, enhance customer experiences, and uncover insights that were previously locked away in unstructured video data. These applications demonstrate a clear return on investment by saving time, improving accuracy, and enabling entirely new capabilities.

Media and Entertainment: Accelerating Content Discovery

In the media industry, production teams, journalists, and archivists constantly need to find specific footage within massive libraries. A documentary filmmaker might need to find every historical clip of a specific landmark from a certain decade, or a sports broadcaster might need to assemble a highlight reel of every goal scored by a particular player. Manually scrubbing through terabytes of footage is unfeasible. With semantic search, a producer can simply query, "Find all wide shots of the Eiffel Tower at night from the 1980s" or "Show me every successful three-point shot by Stephen Curry in the final two minutes of a game." The system can instantly retrieve these moments, reducing content discovery time from days to minutes and dramatically accelerating the creative process.

Corporate Learning and Knowledge Management: Unlocking Institutional Memory

Companies record countless hours of internal meetings, training sessions, webinars, and town halls. This content represents a vast repository of institutional knowledge, but it's rarely accessible after the fact. An employee trying to understand a past decision or a new hire needing to get up to speed on a project is left with little recourse. Semantic search turns this archive into a living knowledge base. A sales representative can search training videos for "best practices on handling pricing objections," and the system will point them to the exact 3-minute segment from an expert's presentation. This on-demand access to information improves employee onboarding, preserves knowledge, and ensures consistent messaging across the organization.

Public Safety and Smart Cities: Enhancing Situational Awareness

For law enforcement and public safety agencies, reviewing surveillance and body camera footage is a critical but time-consuming task. Manually reviewing hours of video to find a specific event or person of interest is a significant drain on resources. Semantic search provides a powerful investigative tool. An analyst could search city-wide camera feeds for "a red sedan running a stop sign near the main library between 2:00 PM and 3:00 PM" or "all individuals wearing a blue backpack in the transit station." This ability to rapidly filter and find relevant events across thousands of hours of footage enhances situational awareness, accelerates investigations, and improves overall public safety outcomes by allowing officers to focus on analysis rather than manual review.

Expert Insights on Visual AI

Industry leaders in artificial intelligence have consistently pointed toward the importance of understanding unstructured data as the next frontier. Andrew Ng, Founder of Landing AI, emphasized in Stanford Engineering that AI's ability to understand and analyze unstructured data like video is crucial for unlocking new levels of automation and efficiency across industries (2023). This perspective underscores the shift from data processing to data comprehension, where the value lies not in storing video but in interpreting its contents at scale.

Fei-Fei Li, a Professor at Stanford University and Co-Director of Stanford HAI, has highlighted the importance of developing AI systems with 'Spatial Intelligence'—the ability to understand the visual world in a human-like manner. Her foundational work (Nature, 2020) demonstrated this in healthcare environments, but her broader vision points to a future where semantic video search is as natural as a conversation.

Your Roadmap to Implementing Semantic Video Search

Adopting semantic video search is a strategic initiative that can deliver a significant competitive advantage. However, a successful implementation requires careful planning and a phased approach. By following a structured roadmap, you can de-risk the project, ensure alignment with business goals, and build a solution that scales effectively. This journey begins with defining the problem and ends with an iterative process of refinement and improvement.

Define Your Use Case and Success Metrics: Before writing a single line of code, clearly identify the primary business problem you aim to solve. Is it reducing content discovery time for your media team? Improving the user experience on your e-learning platform? Or enabling faster compliance reviews? Once the use case is defined, establish clear, quantifiable success metrics. This could be a target to reduce average search time by 50%, increase user engagement with video content by 20%, or decrease manual review hours by a specific amount. These metrics will guide your development and demonstrate ROI.

Data Audit and Preparation: Evaluate your existing video library. Assess the quality, formats, and existing metadata of your content. A successful semantic search system depends on clean, well-organized data. Develop a data ingestion strategy to handle new video content and a plan to process your existing archive. This stage involves setting up the necessary storage and defining the preprocessing steps, such as standardization of video formats and extraction of any existing metadata, to prepare the content for the analysis pipeline.

Select Your Technology Stack: The core of your solution will be the AI models and the underlying database. You'll need to decide whether to use pre-trained, off-the-shelf models for tasks like object detection and transcription or to fine-tune custom models on your specific domain data for higher accuracy. Critically, you must choose a vector database designed for high-throughput similarity search. A managed platform like VideoDB can abstract away much of this complexity, providing an integrated solution for video ingestion, embedding, indexing, and retrieval.

Build and Test the Indexing Pipeline: This is the primary engineering lift. Construct the automated pipeline that takes a video file as input and outputs a rich, searchable index in your vector database. Start with a core set of features, such as speech-to-text and basic object detection. Test the pipeline on a representative subset of your video library, validating that the metadata and embeddings are being generated correctly and that the index is being populated as expected. This is an iterative process of tuning models and optimizing data flow.

Develop the Search Interface and Iterate: With the backend pipeline in place, build a user-facing application that allows users to perform searches. Start with a simple interface and gather feedback from a pilot group of users. Use their feedback and search analytics to understand what's working and where the system is failing. Continuously iterate by refining your AI models, adding new features (like summarization or visual search), and improving the user experience. A successful semantic search system is not a one-time project but a continuously evolving product.

Frequently Asked Questions About Semantic Video Search

Q: What is the main difference between semantic search and keyword search for video?

A: The core difference is understanding versus matching. Keyword search finds videos containing exact text strings in their title or manual tags. Semantic video search understands the user's intent and the context of the video's content, allowing it to find relevant moments based on concepts, objects, and spoken phrases, even if the exact keywords are never used. It searches for meaning, not just words.

Q: How does semantic search handle different languages or accents in video audio?

A: Modern speech-to-text models are trained on vast, diverse datasets, enabling them to accurately transcribe audio from a wide variety of languages, dialects, and accents. For a semantic search system, this means it can create an accurate text-based index from global video content. Furthermore, multilingual language models can often understand queries in one language and find results in another, breaking down language barriers in content discovery.

Q: Is this technology expensive to implement? What are the main cost drivers?

A: Implementation costs can vary significantly based on the approach. The main drivers are GPU compute resources for running AI models during the indexing process, data storage costs for the videos and the vector index, and engineering effort. Using a managed platform like VideoDB can often reduce the total cost of ownership by handling the infrastructure, scaling, and complex model integrations, shifting the cost from a large upfront capital expenditure to a more predictable operational expense.

Q: Can semantic search understand abstract concepts like "a happy moment" or "a tense negotiation"?

A: Yes, this is one of its most powerful capabilities. By training on vast amounts of labeled data, advanced models can learn to recognize the visual and auditory cues associated with abstract concepts. For example, a model can learn that smiles, laughter, and upbeat music often correlate with a "happy moment," while crossed arms, stern facial expressions, and a low tone of voice can indicate a "tense negotiation." This allows for searching beyond concrete objects and text.

Q: How does a platform like VideoDB simplify the implementation process?

A: VideoDB simplifies implementation by providing an integrated, end-to-end solution specifically for video understanding. It abstracts away the complexity of setting up and managing a multimodal AI pipeline, a vector database, and scalable infrastructure. Instead of building these components from scratch, developers can use a simple API to upload videos, have them automatically indexed, and perform complex semantic searches, reducing development time from months to weeks.

Key Takeaways

Beyond Keywords: Semantic search moves beyond simple text matching to understand the intent behind a query and the context within video content.

Unlocking Value: The majority of video data is unstructured and unsearchable; semantic analysis unlocks this value by making it discoverable.

Efficiency Gains: Automating video analysis can reduce manual review time by up to 70%, freeing up resources and accelerating workflows.

Multimodal is Key: The most accurate systems combine insights from visuals, audio, and text to build a holistic understanding of video.

Enhanced Experience: Providing a powerful, intuitive search experience is a proven way to increase user satisfaction by as much as 25%.