Apr 16, 2026

Unlock Insights: AI-Powered Video Search for Enhanced Data Analysis

Explore how AI-powered video search transforms data analysis. Learn techniques for object detection, content indexing, and extracting actionable insights from large video archives.

The Unseen Opportunity in Your Video Archives

By 2022, video was projected to constitute approximately 80% of all internet traffic, a figure that underscores a monumental shift in how we create and consume information. This explosion of video content extends far beyond streaming services and social media, permeating enterprise operations in security, quality control, marketing, and research. Each frame of this footage contains a wealth of unstructured data: a silent, visual record of events, behaviors, and patterns. Yet, for most organizations, this data remains largely inaccessible, a form of digital dark matter. The sheer volume makes manual review not just impractical but economically unfeasible, leaving critical insights locked away.

This is the core challenge that data scientists, video engineers, and business analysts face today. Traditional search methods, reliant on manually entered titles, tags, and descriptions, barely scratch the surface. They cannot answer questions like, "Show me all instances where a forklift operator is not wearing a hard hat," or "Find every clip in our customer interviews where the participant expressed frustration." Answering these queries requires understanding the content of the video itself, not just its metadata shell. The inability to perform this level of granular search means missed safety violations, overlooked customer feedback, and untapped operational efficiencies.

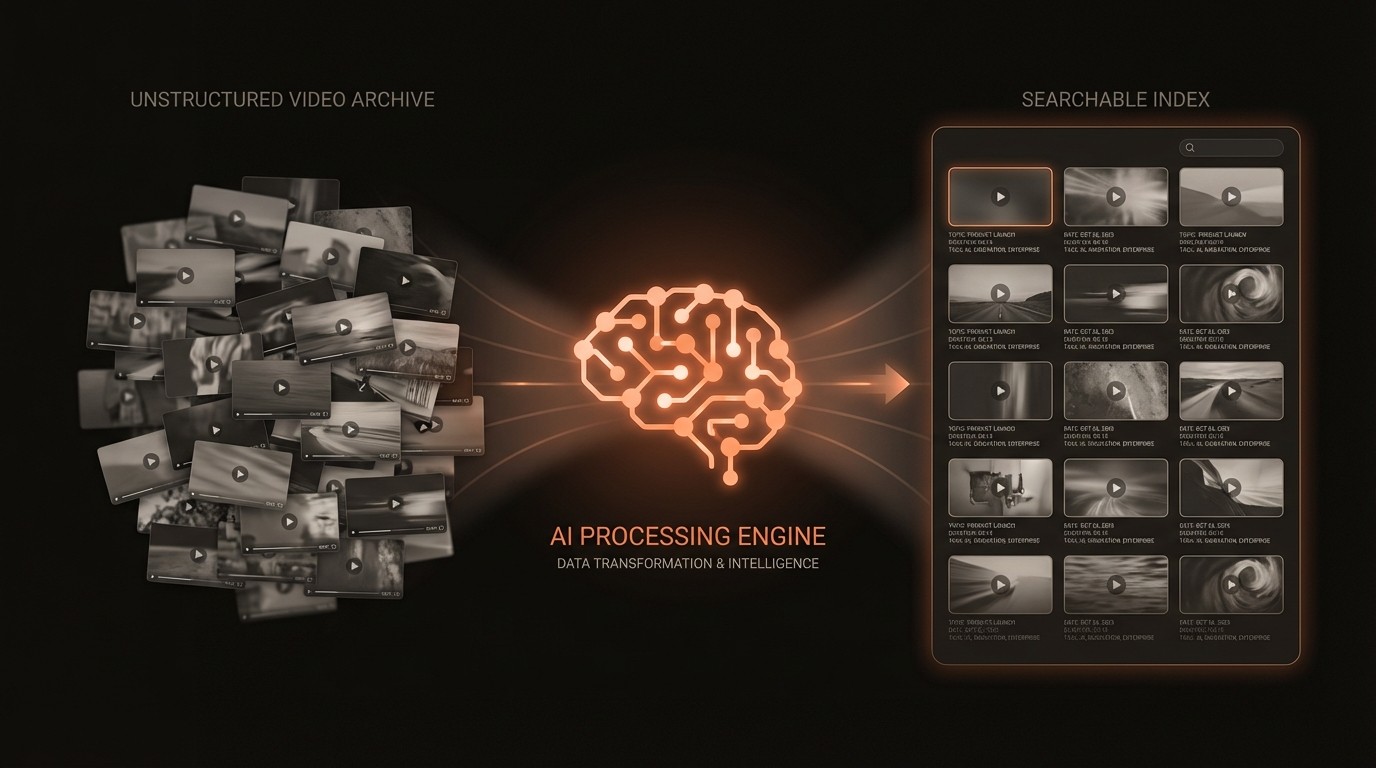

The paradigm shift arrives with AI-powered video search, a technological leap that transforms video from a passive recording into an active, queryable database. By applying computer vision and deep learning models, we can now automatically analyze, index, and understand video content at a scale and speed previously unimaginable. This isn't about simply finding a video file; it's about pinpointing the exact moments within a vast archive that correspond to complex, real-world events. This capability moves us from basic data storage to dynamic data analysis, enabling teams to extract measurable value and make informed decisions based on comprehensive visual evidence.

The High Cost of Unstructured Video Data

The transition to AI-driven analysis is not just an upgrade; it is a necessary response to the mounting problems caused by unsearchable video archives. The limitations of traditional methods impose significant and often hidden costs on organizations, creating operational friction and strategic blind spots. These pain points are not theoretical; they manifest as wasted resources, missed opportunities, and increased risk. Understanding these specific challenges highlights the urgent need for a more intelligent approach to video data management and analysis.

First, the reliance on manual review is a primary source of inefficiency and cost. Internal studies from 2023 show that AI-powered video analytics can reduce manual review time by up to 90%. Without this automation, teams spend countless hours watching footage to find specific events, a process that is both tedious and prohibitively expensive. For a media company searching for archival footage or a security firm investigating an incident, this translates directly into high labor costs and delayed project timelines. The process is fundamentally unscalable: doubling your video input requires doubling your human reviewers, so cost grows linearly with volume, which is unsustainable in the long run.

Second, the lack of sophisticated search tools creates a significant barrier to comprehensive data analysis. Manually assigned tags are often inconsistent, subjective, and incomplete, leading to poor search recall. An analyst might tag a scene as "meeting," but this fails to capture the presence of a whiteboard, the number of participants, or the brand of laptop on the table. This incomplete metadata means that queries for specific objects or contexts will fail, leaving valuable information undiscovered. As archives grow into petabytes, the problem is magnified, and the probability of finding relevant content through simple keyword search approaches zero.

Third, this inability to effectively query video content directly impacts strategic decision-making. A retailer could be sitting on thousands of hours of in-store footage containing rich insights into customer behavior, but without the ability to automatically analyze foot traffic, dwell times, or product interactions, this data is useless. Similarly, a manufacturing company cannot easily review historical production line footage to identify the root cause of intermittent failures. In these scenarios, the organization is effectively flying blind, making decisions based on incomplete information while a treasure trove of visual evidence sits untapped in a server room.

The Technology Behind Intelligent Video Search

To appreciate the impact of AI on video search, it's essential to understand the core technologies that make it possible. This isn't a single breakthrough but rather a convergence of advancements in computer vision, deep learning, and data indexing. These components work in concert to deconstruct video into a machine-readable format, allowing for complex, semantic queries that were previously the domain of science fiction. This technological stack is what enables a system to move beyond filename searches and into true content-based understanding.

The Perception Layer: Computer Vision

At the foundation is computer vision, a field of AI that trains computers to interpret and understand the visual world. Using digital images from cameras and videos, computer vision models can identify and locate objects, a process known as object detection. This is the system's ability to "see." For example, a model can be trained to recognize cars, people, logos, and specific text within a video frame. This process moves beyond simple pixel analysis, enabling the system to understand the context of a scene. According to a 2023 study published on ScienceDirect, modern AI-based video analysis can achieve object detection accuracy of up to 95%, providing a reliable foundation for all subsequent analysis.

The Understanding Layer: Deep Learning Models

Deep learning, a subset of machine learning, provides the engine for this perception. Convolutional Neural Networks (CNNs) are particularly effective at processing visual data. These networks are trained on massive datasets containing millions of labeled images and videos, allowing them to learn hierarchical features, from simple edges and colors in the initial layers to complex objects and patterns in the deeper layers. This training enables the models to not only detect objects but also classify actions (e.g., running, jumping, falling) and recognize scenes (e.g., office, street, warehouse). The models can also perform Optical Character Recognition (OCR) to extract text from signs, screens, or documents visible in the video.

The Indexing Layer: From Pixels to Searchable Vectors

Once the AI models have identified the contents of a video, the next challenge is making this information searchable. This is where video indexing and vector embeddings come into play. Instead of storing text tags, the system converts the features identified by the deep learning models (objects, actions, faces) into high-dimensional numerical vectors (embeddings). Each vector represents a semantic concept. For instance, the vector for "golden retriever" would be mathematically closer to the vector for "german shepherd" than to the vector for "automobile." This vectorization of content is the key to unlocking semantic search. Specialized databases, such as VideoDB, are designed to store and query these vectors at massive scale, allowing a user to search for concepts rather than just keywords. A query can even be an image, where the system finds all video segments containing similar objects or scenes.
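The "closer in vector space" idea can be illustrated with cosine similarity, one common way to compare embeddings. The sketch below is a minimal illustration only: the four-dimensional vectors are hand-picked toy values rather than output from any real model, and `cosine_similarity` and `nearest_concept` are hypothetical helper names, not part of any product's API.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy embeddings chosen by hand for illustration; real embeddings come from
# a trained model and typically have hundreds or thousands of dimensions.
EMBEDDINGS = {
    "golden retriever": [0.9, 0.8, 0.1, 0.0],
    "german shepherd": [0.8, 0.9, 0.2, 0.1],
    "automobile": [0.1, 0.0, 0.9, 0.8],
}

def nearest_concept(name, embeddings=EMBEDDINGS):
    """Return the other concept whose vector is most similar to `name`."""
    query = embeddings[name]
    others = {k: v for k, v in embeddings.items() if k != name}
    return max(others, key=lambda k: cosine_similarity(query, others[k]))

dog_vs_dog = cosine_similarity(EMBEDDINGS["golden retriever"], EMBEDDINGS["german shepherd"])
dog_vs_car = cosine_similarity(EMBEDDINGS["golden retriever"], EMBEDDINGS["automobile"])
print(nearest_concept("golden retriever"))  # german shepherd
```

With these toy values the two dog breeds score far higher against each other than either does against "automobile", which is the property a semantic search relies on.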

By the Numbers: The Video Data Challenge

Here's what the data reveals about the scale of the video analytics market and the impact of AI-driven solutions:

| Metric | Data Point | Significance |
|---|---|---|
| Market Growth | Projected to reach $14.9 billion by 2026 | Indicates massive investment and adoption of video analytics solutions. |
| Internet Traffic | Video projected to make up ~80% of all internet traffic by 2022 | Highlights the overwhelming volume of video data being generated and stored. |
| Efficiency Gains | Manual review time reduced by up to 90% | AI automation delivers substantial operational cost savings and faster insights. |
| Detection Accuracy | Object detection accuracy up to 95% | High-precision models provide reliable data for critical applications. |
| Surveillance Market | Expected to reach $75.60 billion by 2027 | A primary driver for advanced video search in public safety and security. |

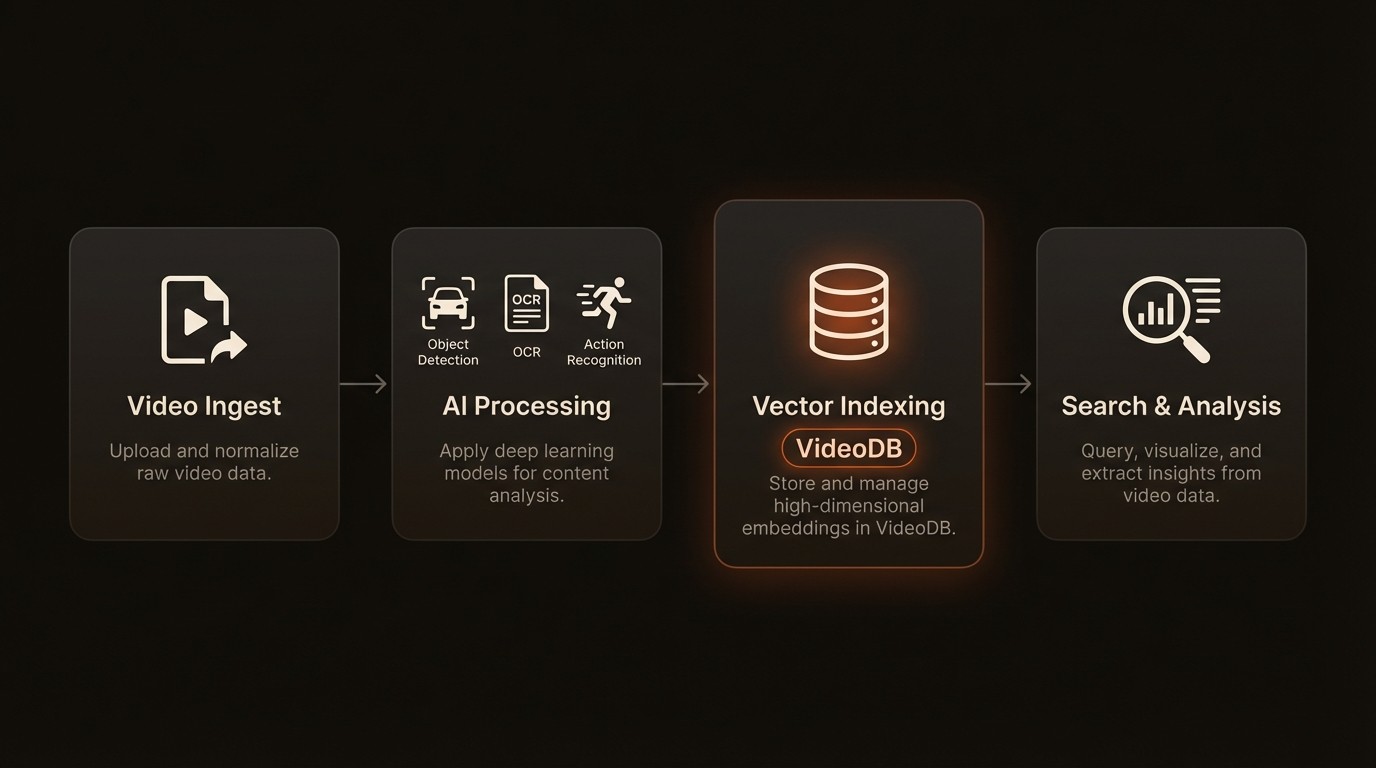

Building a Scalable AI Video Analysis Pipeline

Implementing an effective AI-powered video search solution involves more than just deploying a single model. It requires building a robust, end-to-end pipeline that can ingest, process, index, and serve queries on vast amounts of video data. This pipeline transforms raw video files into a structured, searchable asset. The architecture is designed to be scalable and modular, allowing for the integration of different AI models and data sources. A well-designed system like VideoDB provides the core infrastructure to manage this complex workflow, ensuring that insights can be delivered in near real-time.

Automated Metadata and Content Tagging

The first stage of the pipeline is automated metadata generation. As video is ingested, it's passed through a series of pre-trained AI models. An object detection model identifies and tags every object in every frame: cars, people, equipment. An OCR model extracts any visible text, from license plates to presentation slides. An action recognition model identifies events like people walking, vehicles turning, or machinery operating. All of this information (what objects are present, what text is visible, what actions are occurring) is captured with precise timestamps. This rich, time-coded metadata forms the foundation for all future queries, creating a detailed log of every event within the video without any human intervention.
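One way to picture this time-coded metadata is as a list of timestamped records grouped by label. The sketch below is a hedged illustration, not a description of any particular product's schema: the `Detection` record, its field names, and the `build_index` and `find_moments` helpers are all hypothetical, and the sample detections are invented for the example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Detection:
    """One model output attached to a moment in the video (hypothetical schema)."""
    timestamp_s: float  # seconds from the start of the video
    kind: str           # "object", "text", or "action"
    label: str          # e.g. "person", "EXIT", "walking"
    confidence: float   # model confidence in [0, 1]

def build_index(detections):
    """Group detections by label, each group sorted by timestamp."""
    index = {}
    for det in sorted(detections, key=lambda d: d.timestamp_s):
        index.setdefault(det.label, []).append(det)
    return index

def find_moments(index, label, min_confidence=0.5):
    """Return the timestamps at which `label` was detected confidently enough."""
    return [d.timestamp_s for d in index.get(label, []) if d.confidence >= min_confidence]

# Invented sample output standing in for object, OCR, and action models.
DETECTIONS = [
    Detection(4.0, "object", "forklift", 0.92),
    Detection(1.5, "object", "person", 0.88),
    Detection(9.0, "action", "walking", 0.40),
    Detection(12.5, "object", "person", 0.95),
    Detection(7.2, "text", "EXIT", 0.81),
]

INDEX = build_index(DETECTIONS)
print(find_moments(INDEX, "person"))  # [1.5, 12.5]
```

Keeping a confidence score on every record lets later queries trade recall for precision without re-running the models.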

Multimodal and Semantic Search Capabilities

With a rich index of vector embeddings and time-coded metadata, the system can support powerful new search methods. Semantic search allows users to query for concepts and ideas, not just exact keywords. A user can search for "a tense meeting in a boardroom" and the system will return results based on a combination of detected features like multiple people around a table, specific facial expressions, and an office environment. Multimodal search takes this a step further, allowing queries using different types of input. For example, a user could upload a photo of a specific vehicle, and the system would find all video clips where that exact make and model appears. This is made possible by comparing the vector embedding of the query image to the embeddings of all video frames in the database.
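The image-query flow described above can be sketched as a brute-force nearest-neighbor search: embed the query, score it against every stored frame embedding, and return the best matches with their timestamps. This is a simplified illustration under stated assumptions: the three-dimensional vectors and file names are invented, `top_k_segments` is a hypothetical helper, and production systems generally use approximate nearest-neighbor indexes rather than scanning every frame.

```python
import heapq
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def top_k_segments(query_vec, frame_index, k=2):
    """Return the k (score, video_id, timestamp_s) tuples most similar to the query."""
    scored = (
        (cosine_similarity(query_vec, vec), video_id, ts)
        for video_id, ts, vec in frame_index
    )
    return heapq.nlargest(k, scored)

# Invented frame embeddings: (video file, timestamp in seconds, vector).
FRAME_INDEX = [
    ("cam_01.mp4", 12.0, [0.9, 0.1, 0.0]),
    ("cam_01.mp4", 48.5, [0.1, 0.9, 0.1]),
    ("cam_02.mp4", 3.0, [0.8, 0.2, 0.1]),
    ("cam_03.mp4", 77.0, [0.0, 0.1, 0.9]),
]

# Stand-in for the embedding of an uploaded query image.
QUERY_VEC = [1.0, 0.1, 0.0]

RESULTS = top_k_segments(QUERY_VEC, FRAME_INDEX, k=2)
for score, video_id, ts in RESULTS:
    print(f"{video_id} @ {ts:.1f}s  similarity={score:.3f}")
```

Because each result carries a video identifier and timestamp, the caller can jump straight to the matching moment rather than to the start of the file.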

Temporal Event Detection and Alerting

Beyond finding objects, a key capability is temporal event detection: identifying when something happens. Because all metadata is timestamped, users can construct queries that span time. For instance, an analyst could ask to find all instances where a person enters a restricted area between 2:00 AM and 4:00 AM. This can also be used for real-time alerting. A system can be configured to monitor a live video feed and trigger an alert if a specific sequence of events occurs, such as a person falling down (person detected + falling motion detected) or a safety protocol being violated (person detected near heavy machinery + hard hat not detected). This proactive capability transforms video surveillance from a reactive, forensic tool into a preventative safety and security system.
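Both examples above reduce to simple predicates over timestamped labels. The following sketch assumes, purely for illustration, that upstream models already emit labels such as `person_enters_restricted_area`, `person_near_machinery`, and `hard_hat`; those label names, the record shapes, and the two helper functions are hypothetical.

```python
from datetime import datetime, time

def restricted_area_entries(events, start=time(2, 0), end=time(4, 0)):
    """Events labeled as a restricted-area entry whose time of day is in [start, end]."""
    return [
        e for e in events
        if e["label"] == "person_enters_restricted_area"
        and start <= e["timestamp"].time() <= end
    ]

def hard_hat_violations(frames):
    """Timestamps of frames with a person near machinery and no hard hat detected."""
    return [
        ts for ts, labels in frames
        if "person_near_machinery" in labels and "hard_hat" not in labels
    ]

# Invented event log: one entry inside the window, one outside, one unrelated event.
EVENTS = [
    {"label": "person_enters_restricted_area", "timestamp": datetime(2026, 1, 5, 2, 30)},
    {"label": "person_enters_restricted_area", "timestamp": datetime(2026, 1, 5, 14, 0)},
    {"label": "vehicle_turning", "timestamp": datetime(2026, 1, 5, 3, 0)},
]

# Invented per-frame label sets: (seconds from stream start, labels detected).
FRAMES = [
    (0.0, {"person_near_machinery", "hard_hat"}),
    (0.5, {"person_near_machinery"}),
    (1.0, {"forklift"}),
]

print(len(restricted_area_entries(EVENTS)))  # 1
print(hard_hat_violations(FRAMES))           # [0.5]
```

In a live deployment the same predicates would run on each incoming frame, and a real system would likely require the condition to hold across several consecutive frames before alerting, to reduce false alarms from single-frame misdetections.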

AI Video Search in Practice

The theoretical benefits of AI-powered video search become concrete when applied to real-world business problems. Across various industries, organizations are leveraging this technology to drive efficiency, enhance security, and create new revenue streams. These applications demonstrate the versatility of video analysis, turning passive video archives into strategic assets that provide a competitive edge.

Media and Entertainment: Streamlining Content Monetization

A major broadcast network manages a historical archive containing millions of hours of news, sports, and entertainment content. Previously, finding specific clips for a documentary or a highlight reel was a manual, labor-intensive process requiring producers to sift through footage for days. By implementing an AI search solution powered by VideoDB, they automated the tagging of their entire archive. Now, a producer can instantly find every clip featuring a specific athlete making a game-winning shot, a politician at a press conference, or a specific brand logo appearing during a broadcast. This has reduced content discovery time by over 90%, accelerating production workflows and unlocking new opportunities for content licensing and monetization.

Public Safety and Security: Accelerating Forensic Investigations

In the rapidly growing $75.60 billion video surveillance market, law enforcement agencies face the challenge of analyzing footage from thousands of public and private cameras. Following a public safety incident, investigators needed to track a suspect's vehicle through a dense urban environment. Using traditional methods, this would involve manually reviewing footage from hundreds of cameras. With an AI-powered system, they were able to perform a multimodal search using a single image of the vehicle. The system automatically scanned all relevant camera feeds, identified the vehicle's make and model with 95% accuracy, and plotted its path across the city within minutes. This rapid analysis provided critical leads and significantly expedited the investigation.

Retail Analytics: Optimizing In-Store Customer Experience

A national retail chain sought to better understand customer behavior to optimize store layouts and reduce checkout times. They deployed an AI video analytics platform to analyze feeds from their existing in-store cameras. The system anonymously tracks customer flow, identifying high-traffic zones, measuring dwell time in front of specific product displays, and monitoring queue lengths at checkout counters in real-time. This data provided actionable insights, leading to a redesigned store layout that improved product discovery and a dynamic staffing model for cashiers that reduced average wait times by 30%. The analysis was performed without collecting any personally identifiable information, ensuring customer privacy while delivering valuable business intelligence.

Expert Insights on Visual Understanding

Industry leaders and academic pioneers have long emphasized the importance of enabling machines to understand visual data. Their work has paved the way for the powerful video analysis tools we see today.

Dr. Fei-Fei Li, Professor at Stanford University, highlighted in Stanford News that AI's capacity to comprehend and interpret visual information is fundamental to unlocking new applications across numerous fields, including the deep analysis of video content. (2017)

Jitendra Malik, Professor at UC Berkeley, mentioned in the Berkeley AI Research Lab that the development of robust and sophisticated algorithms for video understanding is critical. These algorithms are what will enable machines to execute complex, real-world tasks based on visual inputs, moving from simple recognition to genuine comprehension. (2021)

Your Five-Step Implementation Roadmap

Adopting AI-powered video search requires a structured approach that aligns technology with clear business goals. Simply deploying a model is not enough; success depends on a thoughtful integration into existing workflows and infrastructure. This five-step roadmap provides a practical guide for data scientists and engineers looking to build and deploy a robust video analysis pipeline.

Define Clear Business Objectives: Before writing a single line of code, identify the specific problems you aim to solve. Are you trying to reduce manual review costs, improve security monitoring, or extract marketing insights? Quantify your goals, such as "reduce incident review time by 75%" or "identify the top 5 most visited areas in our retail stores." This clarity will guide your technical decisions and provide a benchmark for measuring success.

Audit Your Data and Infrastructure: Assess your existing video assets. What formats are they in? Where are they stored: on-premise, in the cloud, or in a hybrid environment? Evaluate your network bandwidth and compute capacity. Understanding your current data landscape is crucial for designing an ingestion and processing pipeline that is both efficient and cost-effective. This audit will inform whether you need to invest in new storage or processing hardware.

Select and Customize AI Models: Determine which AI capabilities are necessary to meet your objectives. For common tasks like detecting people and vehicles, pre-trained models can offer a quick and effective solution. For highly specialized tasks, such as identifying specific product defects or proprietary equipment, you may need to train or fine-tune custom models using your own labeled data. Plan for a flexible architecture that allows you to easily add or update models as your needs evolve.

Integrate a Specialized Video Database: The metadata and vector embeddings generated by your AI models need to be stored in a system designed for high-speed similarity search. This is where a solution like VideoDB becomes essential. A specialized vector database is optimized for the unique demands of querying high-dimensional data, ensuring that your search queries are fast and accurate even across petabytes of video. Integrating VideoDB provides the scalable backend needed to power your application.

Develop, Test, and Iterate on the Search Interface: Build the API or user interface that your analysts will use to interact with the system. Start with a pilot project focused on a single use case to validate the end-to-end pipeline. Gather feedback from end-users to refine the search functionality and user experience. Continuously monitor model performance and system latency, and iterate on your implementation to improve accuracy and efficiency over time.

Frequently Asked Questions

Q: What is the main difference between traditional and AI-powered video search?

A: Traditional video search relies on manual metadata like filenames, titles, and tags. AI-powered video search analyzes the actual content of the video, automatically identifying objects, actions, text, and scenes. This allows you to search for specific visual events, such as "a person in a red jacket," rather than just keywords, providing far greater depth and accuracy.

Q: How accurate is AI object detection in video?

A: Modern object detection models can be highly accurate, with leading research and commercial systems achieving up to 95% accuracy for common objects. However, performance varies with video quality, lighting conditions, and how distinctive the target objects are. For specialized use cases, models may require additional training on custom datasets to reach acceptable performance.

Q: Can this technology search for spoken words within a video?

A: Yes, absolutely. A comprehensive AI video analysis pipeline typically includes an audio transcription model, often called speech-to-text. This model processes the audio track of the video and converts spoken words into a time-coded text transcript. This transcript is then indexed alongside the visual metadata, allowing you to search for specific words or phrases and jump directly to the moment they were spoken.
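As a rough illustration of searching a time-coded transcript, the sketch below runs a case-insensitive phrase match over transcript segments and returns the start time of each hit. The segment format `(start_s, end_s, text)`, the sample sentences, and the `search_transcript` helper are assumptions made for this example; a real speech-to-text model would produce the segments.

```python
def search_transcript(segments, phrase):
    """Return (start_s, text) for every segment containing `phrase`, ignoring case."""
    needle = phrase.lower()
    return [(start, text) for start, _end, text in segments if needle in text.lower()]

# Invented transcript segments: (start seconds, end seconds, spoken text).
TRANSCRIPT = [
    (0.0, 4.2, "Thanks for joining the interview today."),
    (4.2, 9.8, "Honestly, the checkout process was frustrating."),
    (9.8, 15.0, "But the Checkout Process improved after the update."),
]

HITS = search_transcript(TRANSCRIPT, "checkout process")
for start, text in HITS:
    print(f"{start:>5.1f}s  {text}")
```

Each hit carries the segment's start time, which is what lets a player seek directly to the moment the phrase was spoken.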

Q: What kind of hardware is required to run these AI models?

A: Processing video with deep learning models is computationally intensive and typically requires Graphics Processing Units (GPUs) for efficient performance. The specific hardware needed depends on the scale of your operation: whether you are processing video in real-time or as a batch process, and the volume of video you handle. Many organizations opt for cloud-based GPU instances to provide scalable, on-demand processing power without a large upfront hardware investment.

Q: How does a system like VideoDB fit into this ecosystem?

A: VideoDB serves as the specialized database at the core of the video analysis pipeline. After AI models extract features and create vector embeddings from your video, VideoDB stores, indexes, and manages this complex data. It is highly optimized for the fast similarity searches required to find matching concepts, objects, or scenes. Essentially, it acts as the query engine that makes the entire video archive instantly searchable.

Key Takeaways

Video was projected to constitute approximately 80% of internet traffic by 2022, but most of this data is unstructured and unsearchable with traditional tools.

AI-powered video search can reduce manual review time by up to 90%, unlocking significant operational efficiencies.

Core technologies like computer vision, deep learning, and vector embeddings enable machines to understand and index video content with up to 95% accuracy.

Real-world applications span from accelerating media production and enhancing public safety to optimizing retail customer experiences.

Implementing a solution requires a structured approach, including defining objectives, auditing data, and integrating a specialized database like VideoDB to manage and query video-centric data.