Apr 17, 2026

Unveiling the Transformer: A Deep Dive into its Architecture

Explore the Transformer architecture, a cornerstone of AI, dissecting its components from attention mechanisms to feed-forward networks.

The Power of Transformers in AI

In 2017, the paper "Attention Is All You Need" introduced the Transformer architecture, a pivotal moment for artificial intelligence and for natural language processing (NLP) in particular. The architecture has since achieved state-of-the-art results across many NLP tasks, changing how machines understand and generate human language. The core challenge Transformers address is capturing long-range dependencies in sequential data, which earlier recurrent models struggled with because information had to pass through every intermediate step. Using the attention mechanism, a Transformer weighs the importance of each part of the input sequence against the others, allowing more nuanced, context-aware processing.

The significance of Transformers extends beyond NLP. Their versatility has been demonstrated in computer vision tasks, showcasing their adaptability across different domains. This adaptability is largely due to the architecture's reliance on self-attention, which enables the model to attend to different parts of the input sequence simultaneously. This capability is crucial for tasks that require understanding complex relationships within data, such as image recognition and language translation.

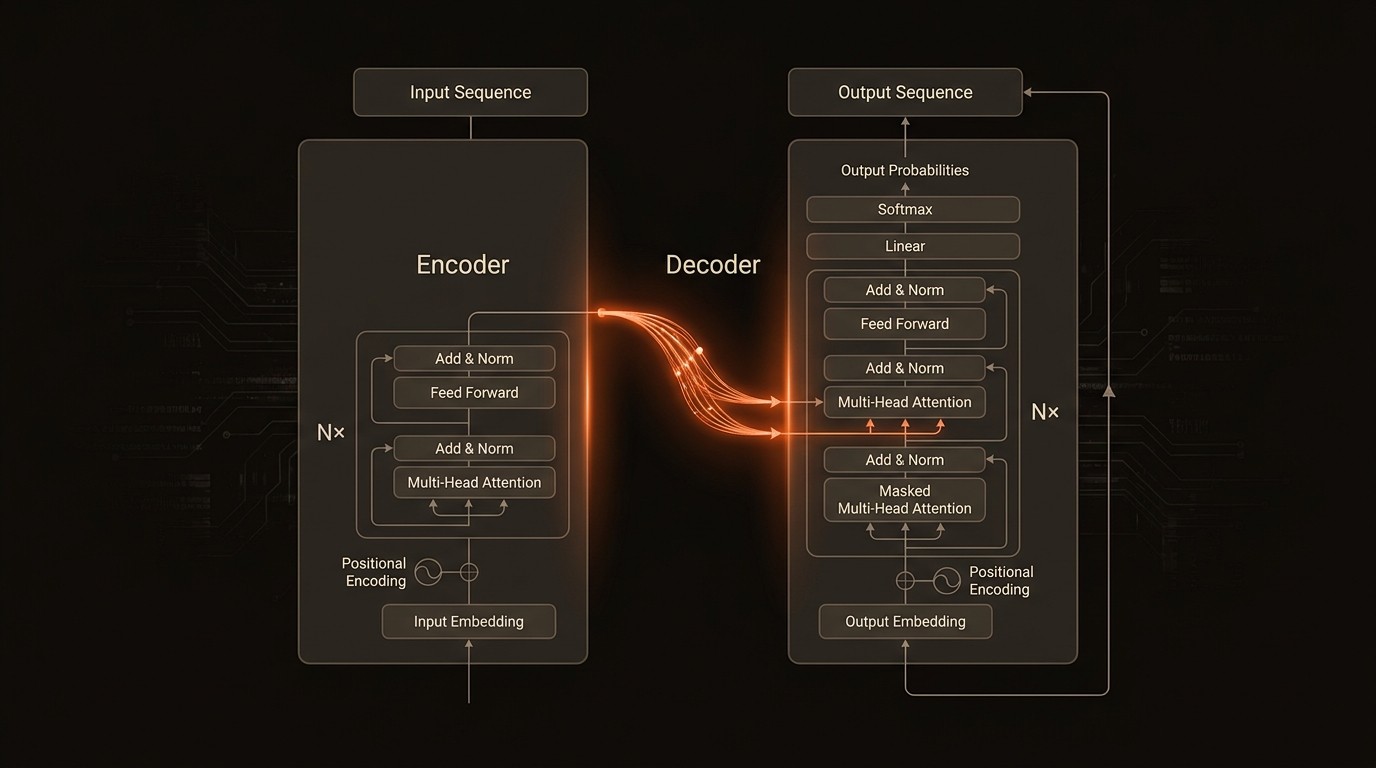

Despite their success, understanding the inner workings of Transformers can be daunting. The architecture consists of an encoder and a decoder, each with multiple layers, and relies heavily on self-attention to compute weighted representations of input sequences. This blog post aims to demystify the Transformer architecture, providing a comprehensive exploration of its components and functionalities. By dissecting key elements such as attention mechanisms and feed-forward networks, we hope to offer AI enthusiasts, developers, and researchers a deeper understanding of this groundbreaking technology.

Challenges in Understanding Transformer Architecture

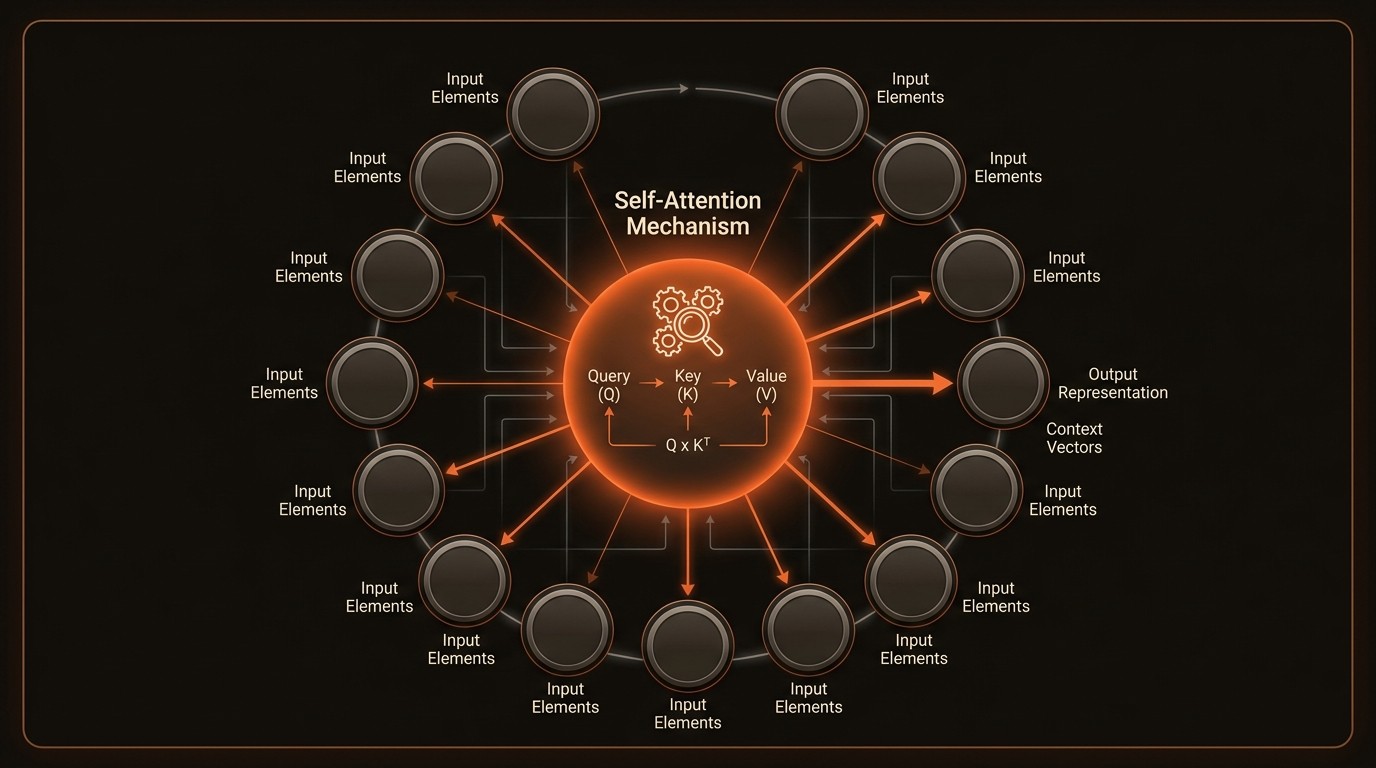

One of the primary challenges in understanding the Transformer architecture is its complexity. The architecture's reliance on self-attention mechanisms can be difficult to grasp, especially for those new to the field of deep learning. Self-attention allows the model to focus on different parts of the input sequence, computing a weighted representation that captures the importance of each element. This mechanism is crucial for capturing long-range dependencies, but its mathematical underpinnings can be intimidating for beginners.

Another pain point is the sheer volume of data required to train Transformer models effectively. These models are data-hungry: large models are typically pre-trained on vast text corpora, and task-specific fine-tuning may still need labeled examples. This requirement can be a significant barrier for smaller organizations or individual researchers without access to large datasets, and it can limit the diversity of applications and innovations explored with Transformers.

The computational resources needed to train and deploy Transformer models also pose a challenge. These models are computationally intensive, requiring powerful hardware and significant energy consumption. This can lead to increased costs and environmental impact, raising concerns about the sustainability of deploying such models at scale. Organizations must weigh the benefits of using Transformers against these resource demands.
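One reason for the cost is that standard self-attention compares every position with every other position, so the number of attention scores grows with the square of the sequence length. The short sketch below only does that arithmetic; the function name and the head and layer counts are illustrative assumptions, and optimized implementations may avoid storing the full score matrix.

```python
def attention_score_entries(seq_len, num_heads=1, num_layers=1):
    """Count the pairwise attention scores computed for one sequence.

    Each head in each layer scores every position against every other
    position, giving seq_len * seq_len entries per head per layer.
    """
    return seq_len * seq_len * num_heads * num_layers


# Doubling the sequence length quadruples the number of scores.
for n in (512, 1024, 2048):
    print(n, attention_score_entries(n))

# Illustrative configuration (assumed values, not taken from the article).
print(attention_score_entries(1024, num_heads=8, num_layers=6))
```

This is why long inputs are disproportionately expensive: the work per layer rises much faster than the input length does.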

Finally, the interpretability of Transformer models remains a concern. While they excel at capturing complex patterns in data, understanding how they arrive at specific decisions can be challenging. This lack of transparency can be problematic in applications where explainability is crucial, such as healthcare or finance. Researchers are actively working on methods to improve the interpretability of these models, but it remains an ongoing challenge.

Understanding the Core Components of Transformers

To fully appreciate the capabilities of the Transformer architecture, it's essential to understand its core components and how they function together. This section will delve into the key concepts that underpin this revolutionary model.

Self-Attention Mechanism

The self-attention mechanism is at the heart of the Transformer architecture. It allows the model to weigh the importance of different parts of the input sequence, enabling it to focus on relevant information while ignoring less critical data. This mechanism is crucial for capturing long-range dependencies, as it enables the model to consider the entire input sequence when making predictions. By computing a weighted representation of the input, self-attention facilitates more accurate and context-aware processing.
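To make the weighting concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It assumes a single attention head, uses nested lists in place of tensors, and uses identity matrices as the query, key, and value projections so the numbers stay readable; the helper names are illustrative, and in a trained model those projections are learned.

```python
import math


def softmax(row):
    """Turn a list of scores into weights that sum to 1."""
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    return [list(col) for col in zip(*m)]


def self_attention(x, w_q, w_k, w_v):
    """Scaled dot-product self-attention over x (one row per token)."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    scores = matmul(q, transpose(k))  # every token scored against every token
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights  # weighted mix of the value vectors


# Toy input: 3 tokens with 2 features each.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
output, weights = self_attention(x, identity, identity, identity)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one token attends to every token in the sequence, including itself, and each row sums to 1. Because every token is scored against every other token in a single step, distant positions are as reachable as adjacent ones.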

Encoder-Decoder Structure

The original Transformer model consists of an encoder and a decoder, each a stack of six identical layers. The encoder processes the input sequence, generating a set of attention-based representations. These representations are then passed to the decoder, which generates the output sequence one token at a time. This structure is particularly effective for tasks such as language translation, where the model must convert an input sequence in one language to an output sequence in another.
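The control flow between the two halves can be sketched without any real model. In the toy example below, `toy_encoder` and `toy_decoder_step` are hypothetical stand-ins (they merely upper-case and copy tokens) so that the loop itself is visible: the encoder runs once over the whole input, and the decoder is then called repeatedly, each time seeing the encoder output plus what it has generated so far.

```python
def toy_encoder(source_tokens):
    """Stand-in for the encoder stack: one 'representation' per source token."""
    return [tok.upper() for tok in source_tokens]


def toy_decoder_step(memory, generated):
    """Stand-in for the decoder: choose the next output token.

    A real decoder would attend over `memory` and `generated`; this toy
    version copies the next memory entry and then signals end of sequence.
    """
    position = len(generated)
    return memory[position] if position < len(memory) else "<eos>"


def translate(source_tokens, max_len=10):
    memory = toy_encoder(source_tokens)  # encoder runs once over the input
    generated = []
    while len(generated) < max_len:
        next_token = toy_decoder_step(memory, generated)
        if next_token == "<eos>":
            break
        generated.append(next_token)  # fed back in on the next step
    return generated


print(translate(["guten", "morgen"]))  # prints ['GUTEN', 'MORGEN']
```

Only the loop structure carries over to a real system; the stand-in functions would be replaced by the trained encoder and decoder stacks.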

Feed-Forward Networks

Within each layer of the encoder and decoder, the Transformer architecture incorporates a feed-forward network. Each network applies two linear transformations with a non-linear activation (ReLU in the original model) between them to the attention-based representations, enhancing the model's ability to capture complex patterns in the data. The same network is applied independently at each position of the sequence, so all positions can be processed in parallel.
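A minimal sketch of such a position-wise feed-forward network follows, assuming ReLU as the activation and tiny hand-picked weights (model width 2, hidden width 4) purely for illustration. The point to notice is that the same weights are applied to each position separately, which is what makes the computation easy to parallelize.

```python
def relu(v):
    return [max(0.0, x) for x in v]


def linear(v, weight, bias):
    """Compute v @ weight + bias for a single vector v.

    `weight` is stored as rows of shape (inputs, outputs).
    """
    return [sum(x * w for x, w in zip(v, col)) + b
            for col, b in zip(zip(*weight), bias)]


def feed_forward(v, w1, b1, w2, b2):
    """Linear -> ReLU -> linear, applied to one position."""
    return linear(relu(linear(v, w1, b1)), w2, b2)


def position_wise_ffn(sequence, w1, b1, w2, b2):
    """Apply the same network to every position independently."""
    return [feed_forward(v, w1, b1, w2, b2) for v in sequence]


# Illustrative weights, chosen by hand rather than learned.
w1 = [[1.0, -1.0, 0.5, 0.0], [0.0, 1.0, -0.5, 1.0]]
b1 = [0.0, 0.0, 0.0, 0.0]
w2 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
b2 = [0.0, 0.0]

sequence = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
ffn_output = position_wise_ffn(sequence, w1, b1, w2, b2)
print(ffn_output)
```

The first and third positions hold the same vector and therefore produce the same output. The feed-forward step does not mix information across positions; that job belongs to attention.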

Positional Encoding

Transformers do not inherently understand the order of elements in a sequence, as they lack the step-by-step structure of recurrent models. To address this, positional encoding injects information about the position of each element into the input representations, typically by adding a position-dependent vector to each input embedding. The original model uses fixed sinusoidal functions for this, while other variants learn the position vectors. This encoding allows the model to differentiate between elements based on their position in the sequence, enabling it to capture the sequential nature of the data.
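As an illustration, the sketch below builds the sinusoidal positional encoding used in the original Transformer paper and adds it to a toy sequence of embeddings. The function names and the tiny dimensions are assumptions made for readability; learned position embeddings are a common alternative.

```python
import math


def positional_encoding(seq_len, d_model):
    """Sinusoidal table: sine on even dimensions, cosine on odd ones."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Each pair of dimensions (2k, 2k+1) shares one wavelength.
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


def add_positions(embeddings):
    """Add the position vector to each token embedding."""
    pe = positional_encoding(len(embeddings), len(embeddings[0]))
    return [[e + p for e, p in zip(emb, pos)]
            for emb, pos in zip(embeddings, pe)]


# Two identical token embeddings at different positions.
embeddings = [[1.0, 1.0, 1.0, 1.0], [1.0, 1.0, 1.0, 1.0]]
encoded = add_positions(embeddings)
for row in encoded:
    print([round(x, 3) for x in row])
```

Although both tokens start with the same embedding, the two rows differ after the encoding is added, which is how the model can tell the first occurrence of a token from the second.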

By the Numbers

Here's what the data reveals:

| Metric | Current State | Impact |
|---|---|---|
| State-of-the-art NLP results | Achieved by Transformers | Enhanced language understanding |
| Attention Mechanism | Core to Transformers | Improved context awareness |
| Long-range dependencies | Captured effectively | Better sequential data processing |
| Encoder-Decoder Layers | Multiple layers used | Increased model complexity |
| Versatility | Applied in vision tasks | Broadened application scope |

Exploring the Capabilities of Transformers

Attention Mechanisms

The attention mechanism is a cornerstone of the Transformer architecture, enabling the model to weigh the importance of different parts of the input sequence. This capability is crucial for tasks that require understanding complex relationships within data, such as language translation and image recognition. By computing a weighted representation of the input, the attention mechanism allows the model to focus on relevant information while ignoring less critical data. This results in more accurate and context-aware processing, enhancing the model's overall performance.

Encoder-Decoder Structure

The encoder-decoder structure suits sequence-to-sequence tasks such as language translation. As described above, the encoder turns the input sequence into attention-based representations, and the decoder consumes them to generate the output sequence. Because the output is generated rather than aligned position by position with the input, a sentence in one language can be mapped to a sentence in another even when the two differ in length and word order.

In Practice

Natural Language Processing

In the field of natural language processing, Transformers have revolutionized tasks such as language translation and sentiment analysis. For instance, Google Translate utilizes Transformer models to convert text from one language to another with remarkable accuracy. By leveraging the attention mechanism, these models can capture the nuances of language, resulting in translations that are both contextually relevant and grammatically correct. The impact is significant, with improved translation quality and faster processing times.

Computer Vision

Transformers have also been adapted for use in computer vision tasks, demonstrating their versatility across different domains. In image recognition, for example, Transformers can analyze visual data to identify objects and patterns within images. This capability is particularly valuable in industries such as healthcare, where accurate image analysis can aid in diagnosing medical conditions. The result is enhanced diagnostic accuracy and improved patient outcomes.

Speech Recognition

In the realm of speech recognition, Transformers have been employed to improve the accuracy and efficiency of voice-to-text conversion. By capturing long-range dependencies in audio data, these models can better understand spoken language, resulting in more accurate transcriptions. This technology is widely used in applications such as virtual assistants and automated transcription services, where precise speech recognition is essential for delivering a seamless user experience.

Getting Started with Transformers

Implementing Transformer models in your projects can be a rewarding endeavor, but it requires careful planning and execution. Here are five steps to help you get started:

Understand the Basics: Familiarize yourself with the core concepts of Transformer architecture, including self-attention, encoder-decoder structure, and feed-forward networks. This foundational knowledge will be crucial as you begin to implement these models in your projects.

Select the Right Tools: Choose the appropriate tools and frameworks for your project. Libraries such as TensorFlow and PyTorch offer robust support for implementing Transformer models, providing pre-built components and resources to streamline the development process.

Gather and Prepare Data: Collect and preprocess the data needed to train your Transformer model. Ensure that your dataset is large and diverse enough to capture the nuances of the task at hand. Data augmentation techniques can also be employed to enhance the quality and variety of your training data.

Train and Fine-Tune the Model: Train your Transformer model using the prepared data, adjusting hyperparameters and fine-tuning the model to achieve optimal performance. This step may require significant computational resources, so be prepared to allocate the necessary hardware and time.

Evaluate and Deploy: Once your model is trained, evaluate its performance using relevant metrics and benchmarks. Make any necessary adjustments to improve accuracy and efficiency before deploying the model in a production environment. Consider using VideoDB as an implementation option to streamline the deployment process.
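As one concrete example of the hyperparameter adjustment mentioned in the training step above, the original Transformer paper varied the learning rate with a warmup schedule: it rises linearly for the first few thousand steps and then decays with the inverse square root of the step number. The sketch below reproduces that formula; the function name is illustrative, the defaults (`d_model=512`, `warmup_steps=4000`) are the values reported in that paper, and other training setups may use different schedules.

```python
def transformer_lr(step, d_model=512, warmup_steps=4000):
    """Learning rate at a given training step (steps start at 1).

    lr = d_model^-0.5 * min(step^-0.5, step * warmup_steps^-1.5)
    """
    if step < 1:
        raise ValueError("step must be at least 1")
    return d_model ** -0.5 * min(step ** -0.5, step * warmup_steps ** -1.5)


# The rate climbs during warmup, peaks at warmup_steps, then decays.
for step in (1, 1000, 4000, 16000, 64000):
    print(step, transformer_lr(step))
```

The warmup phase keeps early updates small while the model's parameters are still close to their random initialization, which tends to make training more stable.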

FAQ

Q: What is the difference between Transformers and traditional neural networks?

A: Transformers use self-attention to weigh every position in the input sequence against every other position. Earlier sequence models work differently: recurrent neural networks process tokens one at a time, and convolutional networks see only a fixed local window at each layer. This lets Transformers capture long-range dependencies more effectively, resulting in improved performance on tasks such as language translation and image recognition.

Q: How do Transformers handle sequential data?

A: Transformers use self-attention and positional encoding to process sequential data. Self-attention allows the model to focus on relevant parts of the input sequence, while positional encoding provides information about the order of elements. This combination enables Transformers to capture complex patterns in sequential data.

Q: What are the computational requirements for training Transformer models?

A: Training Transformer models requires significant computational resources, including powerful hardware and large amounts of data. These models are computationally intensive, necessitating the use of GPUs or TPUs to achieve optimal performance. The resource demands can lead to increased costs and environmental impact.

Q: Can Transformers be used for tasks other than NLP?

A: Yes, Transformers have been adapted for use in various domains beyond NLP, including computer vision and speech recognition. Their versatility and ability to capture complex patterns make them suitable for a wide range of applications, from image analysis to voice-to-text conversion.

Q: How can I improve the interpretability of Transformer models?

A: Improving the interpretability of Transformer models is an ongoing area of research. Techniques such as attention visualization and model distillation can help provide insights into how these models make decisions. Additionally, incorporating explainability tools and frameworks can enhance transparency and trust in the model's outputs.

Key Takeaways

Transformers have revolutionized NLP with state-of-the-art results.

Self-attention is crucial for capturing long-range dependencies.

Encoder-decoder structure facilitates tasks like language translation.

Versatility of Transformers extends to computer vision and beyond.

Implementation requires significant computational resources.