Feb 19, 2026

Why Video Infrastructure Was Built for Playback, Not AI Perception

For 70 years, video infrastructure has been optimized for human playback. Here is why YouTube, Zoom, and enterprise video systems can’t support AI perception, and what needs to change.

The Playback Paradigm: 70 Years of Infrastructure

YouTube. Netflix. Zoom. Twitch. TikTok. The entire video industry was built around one fundamental assumption:

Video exists to put pixels on human eyeballs.

From the first television broadcasts in the 1940s to modern 4K streaming, every innovation in video infrastructure has optimized for the same goal: deliver frames to a human viewer who will watch them sequentially from start to finish.

The Playback Model

The entire video technology stack is built around a simple linear flow: encode → distribute → decode → display.

Every component optimizes for sequential playback:

Codecs (H.264, H.265, AV1):

Minimize bandwidth through compression

Optimize for sequential frame decoding

Prioritize visual quality for human perception

Not designed for: Random access or content queries

CDNs (Content Delivery Networks):

Cache popular content globally

Reduce latency for playback start

Handle millions of concurrent viewers

Not designed for: Semantic search or content analysis

Video Players (YouTube, VLC, etc.):

Buffer upcoming frames

Render at consistent framerate (24/30/60 fps)

Provide timeline scrubbing

Not designed for: Answering “what” questions about content

Streaming Protocols (HLS, DASH):

Adapt quality to network conditions

Handle live and on-demand playback

Minimize buffering interruptions

Not designed for: Content understanding or event detection
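To make the codec point above concrete: inter-frame codecs store most frames as differences from earlier frames, so reaching an arbitrary frame means decoding forward from the nearest preceding keyframe. The sketch below counts that work under simplifying assumptions that are not from the original article: a fixed keyframe interval, closed groups of pictures, and no B-frame reordering. The function name and the 2-second keyframe interval are illustrative.

```python
def frames_to_decode(target_frame: int, keyframe_interval: int) -> int:
    """Frames that must be decoded to display `target_frame` (0-indexed).

    Assumes a keyframe every `keyframe_interval` frames and that each
    non-keyframe depends only on frames since the previous keyframe.
    """
    if target_frame < 0 or keyframe_interval <= 0:
        raise ValueError("target_frame must be >= 0 and keyframe_interval > 0")
    # Offset of the target inside its group of pictures, plus the keyframe itself.
    return target_frame % keyframe_interval + 1


# Hypothetical stream: 30 fps with a keyframe every 2 seconds (60 frames).
FPS = 30
KEYFRAME_INTERVAL = 2 * FPS

# Seeking to 10:00 lands exactly on a keyframe, so one frame is decoded.
on_keyframe = frames_to_decode(10 * 60 * FPS, KEYFRAME_INTERVAL)
# Seeking one frame earlier means decoding the whole preceding group.
before_keyframe = frames_to_decode(10 * 60 * FPS - 1, KEYFRAME_INTERVAL)
```

Either way, the decoder is asked for a position in time, never for content.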

According to Netflix’s 2025 infrastructure report, its CDN architecture processes 15 petabytes daily, optimized purely for playback delivery, with zero investment in semantic content understanding.

“We built the internet’s video infrastructure to solve one problem: getting pixels to screens fast. We never imagined a future where machines would need to understand what those pixels mean.”

— Perspective aligned with ideas shared by Mark Levoy, Former VP of Engineering, Google

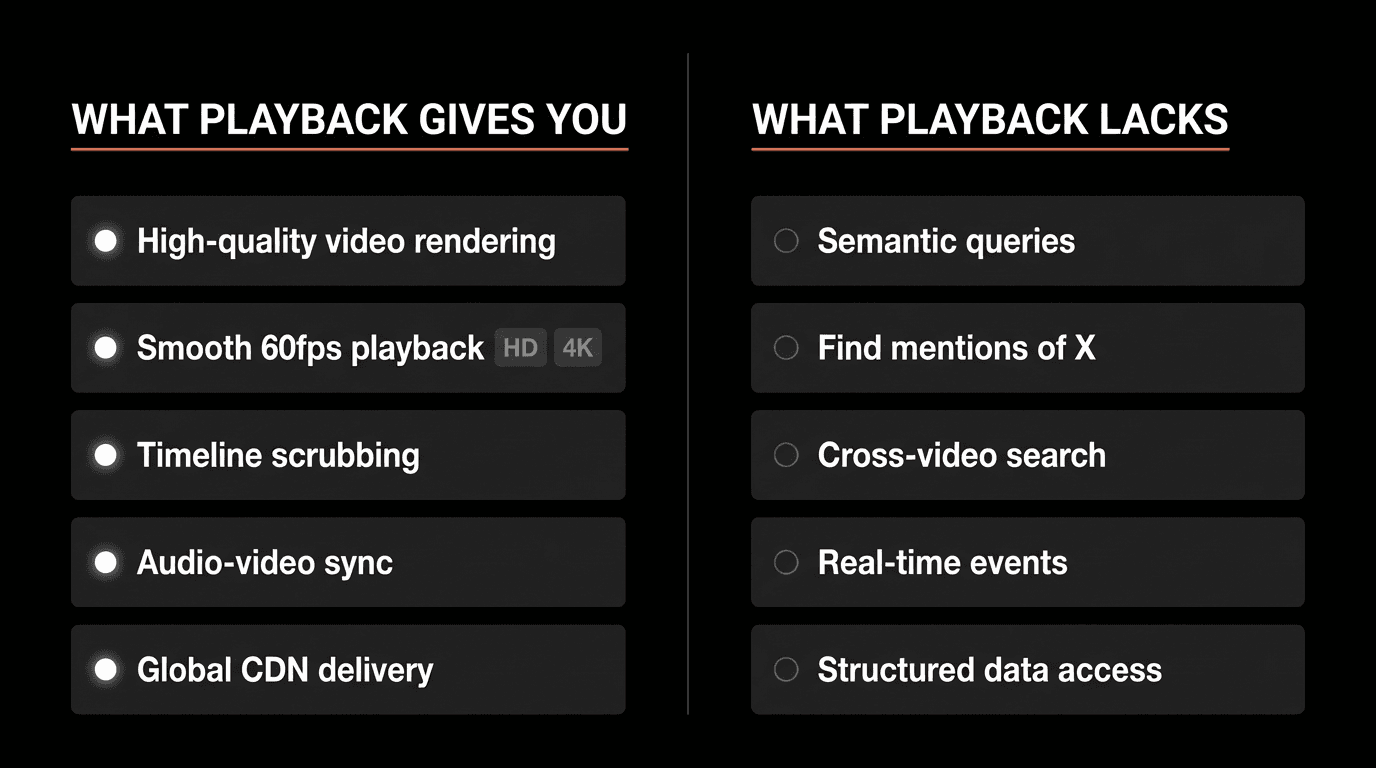

What Playback Infrastructure Provides

When you press play on a YouTube video, here’s what happens:

The Playback Experience

Step 1: CDN delivers compressed video chunks to your device

Step 2: Device decodes frames in real-time (H.264/H.265 decoding)

Step 3: Frames render at target framerate (24/30/60 fps)

Step 4: Audio streams synchronize with video

Step 5: You scrub the timeline to navigate or skip

This system works brilliantly for entertainment, education, and communication. Billions of people watch billions of hours daily.

But notice what it fundamentally doesn’t provide:

No way to query content semantically

No structured access to “what happened”

No timestamp-level retrieval by meaning

No semantic understanding

No event detection capability

No cross-video search

The video just… plays.

The Entertainment Success, AI Failure

For human viewers, playback infrastructure is a triumph:

YouTube viewers watch 1 billion hours of video daily

Netflix streams 4K HDR with minimal buffering

Zoom handles 300 million daily meeting participants

TikTok delivers endless content with <1 second load times

For AI agents, it’s fundamentally broken:

Cannot search inside videos semantically

Cannot extract structured data from content

Cannot detect events in real-time

Cannot query across video archives

What AI Perception Actually Needs

AI agents don’t watch videos. They interrogate them.

The Query-First Paradigm

Instead of “play from 10:00”, agents ask questions such as “What happened at 2pm?” or “Find the product demo.”
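In code, the contrast might look like the following sketch. It uses hypothetical types that do not come from any real player or search API: a playback command addresses a position in one file, while a perception query addresses meaning across many.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlaybackCommand:
    """Addresses a position: which file, and how many seconds in."""
    video_id: str
    seek_seconds: float


@dataclass(frozen=True)
class PerceptionQuery:
    """Addresses meaning: what to look for, and where to look."""
    text: str
    video_ids: tuple[str, ...] = ()  # empty tuple = search the whole archive
    max_results: int = 10


def parse_timestamp(stamp: str) -> float:
    """Convert 'MM:SS' or 'HH:MM:SS' into seconds."""
    seconds = 0.0
    for part in stamp.split(":"):
        seconds = seconds * 60 + float(part)
    return seconds


# "Play from 10:00" needs a human who already knows where to look.
play = PlaybackCommand(video_id="meeting-042", seek_seconds=parse_timestamp("10:00"))

# The agent's version names the content and leaves the position open.
ask = PerceptionQuery(text="When was the budget discussed?")
```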

Five Perception Requirements

1. Random Access by Content

Jump directly to relevant moments

No sequential scanning required

Semantic understanding of “what’s inside”

2. Natural Language Queries

“Show me safety violations”

“Find the product demo”

“When was the budget discussed?”

3. Instant Results

<200ms query latency across millions of hours

Real-time search, not batch processing

Scale to thousands of concurrent queries

4. Timestamped Evidence

Every answer links to exact video moment

Playable for verification

Confidence scores for reliability

5. Real-Time Event Detection

Process live streams as they happen

Alert on predefined patterns

<1 second detection latency

According to research from Stanford’s Vision Lab (2025), perception-enabled systems require 50-100x lower latency than playback systems for equivalent user value in AI applications.
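Of the five requirements above, timestamped evidence is the easiest to pin down in code. The sketch below shows one possible shape for a timestamped, confidence-scored result. The `Evidence` type, its field names, and the 0.7 threshold are assumptions for illustration, not part of any existing system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    video_id: str
    start_s: float      # where the matching moment begins, in seconds
    end_s: float        # where it ends
    confidence: float   # 0.0 to 1.0, as reported by the (hypothetical) index

    def label(self) -> str:
        """Human-readable pointer of the form '<video_id> @ HH:MM:SS'."""
        total = int(self.start_s)
        hours, rest = divmod(total, 3600)
        minutes, seconds = divmod(rest, 60)
        return f"{self.video_id} @ {hours:02d}:{minutes:02d}:{seconds:02d}"


def reliable(results: list[Evidence], min_confidence: float = 0.7) -> list[Evidence]:
    """Drop low-confidence hits and rank the rest, most confident first."""
    kept = [r for r in results if r.confidence >= min_confidence]
    return sorted(kept, key=lambda r: r.confidence, reverse=True)


hits = [
    Evidence("cam-7", 845.0, 860.0, 0.91),
    Evidence("cam-2", 120.0, 131.5, 0.42),
    Evidence("cam-7", 3605.0, 3612.0, 0.78),
]
top = reliable(hits)
```

Keeping the start and end offsets on every result is what makes an answer playable: a reviewer can jump straight to the clip instead of trusting the score.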

The Platform Gaps: YouTube, Zoom, and Enterprise

Major video platforms excel at playback but fail completely at perception.

The YouTube Gap

YouTube is the world’s largest video library with 800 million videos and 500 hours uploaded every minute. Yet you cannot ask:

Questions YouTube Can’t Answer:

“What videos in my library mention competitor pricing?”

“Show me every product demo featuring Feature X”

“Find all mentions of ‘machine learning’ across my channel’s videos”

“When did this person appear in any of our recordings?”

What YouTube Provides:

Search titles and descriptions (text metadata)

Search automatically generated subtitles (text transcription)

Visual similarity (thumbnails)

What YouTube Lacks:

Semantic video content search

Visual scene understanding

Cross-video pattern detection

Queryable visual elements

YouTube has the content, but it has no semantic layer: there is no way to query what’s actually inside the videos beyond what’s been manually described or automatically transcribed.

The Zoom Gap

Zoom processes 3.3 trillion meeting minutes annually (Zoom FY2025 report). Yet you cannot ask:

Questions Zoom Can’t Answer:

“What were the action items from yesterday’s call?”

“Show me the moment the client expressed pricing concerns”

“When was the slide about Q4 projections shown?”

“Find all meetings where the product manager mentioned the deadline”

What Zoom Provides:

Cloud recordings (MP4 files)

Audio transcription (text)

Chat logs (text)

What Zoom Lacks:

Searchable screen share content

Visual slide understanding

Sentiment detection from video

Action item extraction from visual context

Zoom recordings are opaque blobs waiting for humans to watch them at 1x speed.

The Enterprise Gap

Enterprise video is even more problematic. Organizations capture:

Security footage (24/7 camera feeds)

Training recordings (employee onboarding, compliance)

Customer service calls (support and sales)

Manufacturing feeds (quality control, safety)

Facility monitoring (IoT cameras, sensors)

All captured. None queryable.

According to Gartner’s 2025 Enterprise Video Survey, enterprises generate 2.5 million hours of video daily but can search only 3% effectively.

The Manual Review Problem

The standard enterprise workflow when something happens:

Day 1: Incident occurs

Day 2: Manager requests recording

Day 3: IT locates the 24-hour footage

Day 4: Human watches it at 1x speed (24 hours = 24 hours of review)

Day 5: They manually note timestamps of relevant moments

Day 7: Summary report delivered

Result:

7-day response time

Requires dedicated human hours

Doesn’t scale beyond isolated incidents

Completely incompatible with AI automation

“We have 10,000 hours of training videos and no way to find the 5 minutes explaining the specific process an employee needs right now. So they watch random videos hoping to find it, or we re-record the same content.”

— Perspective aligned with ideas shared by Learning & Development Director, Fortune 500 Manufacturing

Perception-First Architecture

What if video infrastructure were rebuilt from the ground up for perception instead of playback?

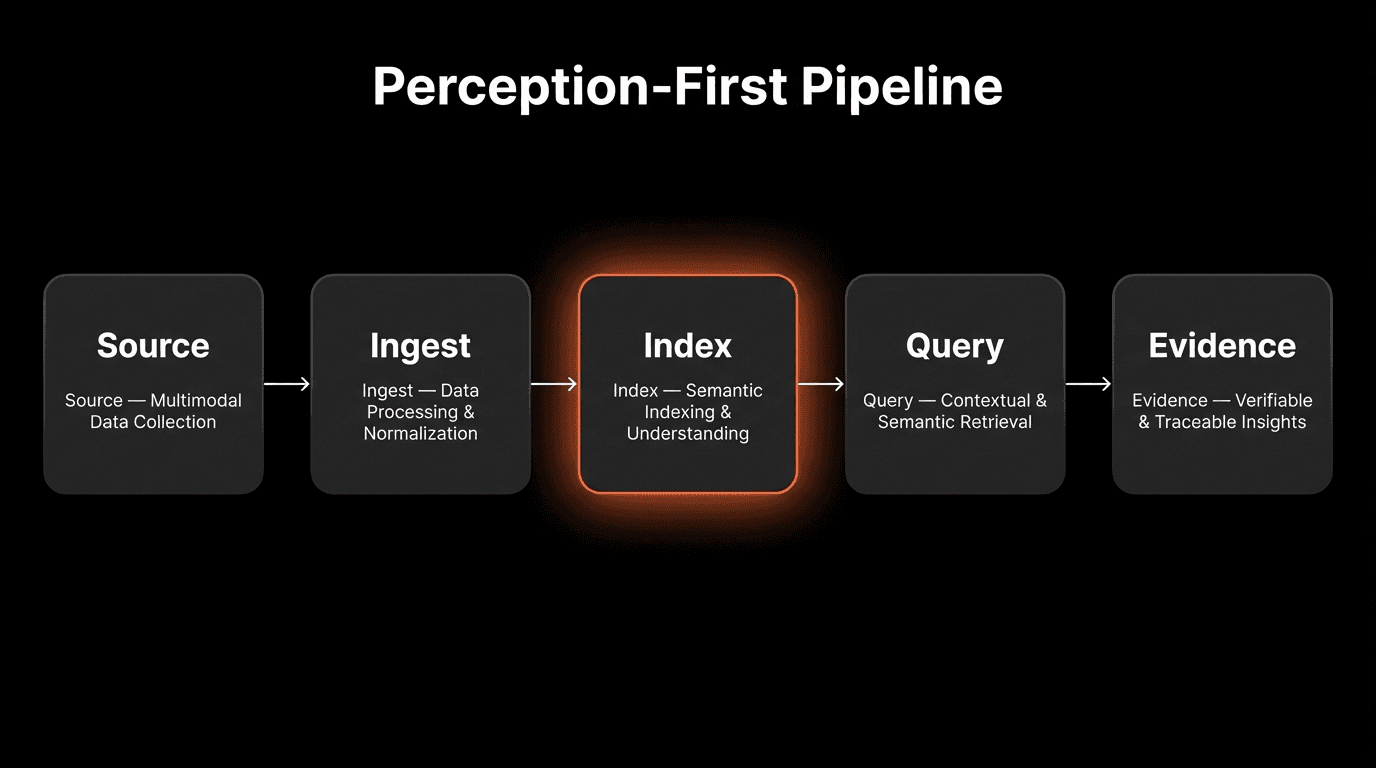

The New Pipeline

Every component optimizes for understanding, not just playback:

1. Ingest - Unified Input

Normalize media from any source (files, streams, screens)

Handle multiple formats and protocols

Real-time or batch processing

Goal: Make all video accessible to indexing

2. Index - Semantic Layer

Extract meaning using prompt-defined understanding

Build searchable scene-level representations

Maintain temporal relationships

Goal: Convert video to queryable knowledge

3. Query - Natural Language Search

Semantic search across indexed content

Return timestamped relevant moments

<200ms latency at scale

Goal: Instant answers to natural language questions

4. Evidence - Verifiable Results

Link results to exact video timestamps

Provide playable verification

Include confidence scores

Goal: Grounded, verifiable answers
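The four stages can be sketched end to end in a few lines. This is a toy under stated assumptions: scene descriptions are supplied by hand rather than produced by a vision model, and word overlap stands in for real semantic matching. All names (`Scene`, `ingest`, `build_index`, `query`) are hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Scene:
    video_id: str
    start_s: float
    end_s: float
    description: str  # in a real system, produced by a model at index time


def tokens(text: str) -> set[str]:
    """Lowercase word set used as a crude searchable representation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def ingest(raw: list[tuple[str, float, float, str]]) -> list[Scene]:
    """Ingest: normalize heterogeneous input into one Scene shape."""
    return [Scene(v, float(s), float(e), d.strip()) for v, s, e, d in raw]


def build_index(scenes: list[Scene]) -> list[tuple[set[str], Scene]]:
    """Index: precompute a searchable representation per scene."""
    return [(tokens(s.description), s) for s in scenes]


def query(index: list[tuple[set[str], Scene]], question: str,
          top_k: int = 3) -> list[tuple[float, Scene]]:
    """Query + Evidence: return (score, scene) pairs, best match first.

    The score is the fraction of question words found in the scene
    description, a stand-in for semantic similarity.
    """
    q = tokens(question)
    scored = []
    for words, scene in index:
        overlap = len(q & words) / len(q) if q else 0.0
        if overlap > 0:
            scored.append((overlap, scene))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


index = build_index(ingest([
    ("call-01", 0, 90, "Introductions and agenda"),
    ("call-01", 90, 400, "Product demo of the reporting dashboard"),
    ("call-02", 30, 210, "Budget discussion for Q4"),
]))
results = query(index, "product demo")
```

The design point is that the expensive work happens once, at index time; each query then touches only the precomputed representations and returns scenes with their timestamps attached.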

From “Play” to “Answer”

The paradigm shift:

| Playback Command | Perception Query |
|---|---|
| “Play the recording” | “What happened at 2pm?” |
| “Skip to 10:00” | “Find the product demo” |
| “Watch this video” | “Search across all videos” |
| “Download the file” | “Give me relevant clips only” |
| “Scrub the timeline” | “Show me every mention of X” |

Perception turns video from a thing you watch into a thing you query.

Real-Time Perception: Beyond Batch Processing

The playback model assumes recordings. You capture first, then watch later.

Perception works in real-time for live streams:

Live Stream Perception Example
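One way to picture live perception is a loop that inspects each observation as it arrives instead of waiting for a finished recording. In this hedged sketch, a generator of (timestamp, description) pairs stands in for a live feed whose frames have already been described by a model. The feed contents, the `detect_events` name, and the keyword rules are all invented for illustration.

```python
from typing import Iterable, Iterator


def detect_events(
    feed: Iterable[tuple[float, str]],
    rules: dict[str, tuple[str, ...]],
) -> Iterator[tuple[float, str]]:
    """Yield (timestamp, rule_name) as soon as an observation matches a rule.

    `rules` maps a rule name to keywords that must all appear in the
    observation text. Nothing is buffered: each item is checked on arrival.
    """
    for timestamp, description in feed:
        text = description.lower()
        for name, keywords in rules.items():
            if all(word in text for word in keywords):
                yield timestamp, name


def simulated_feed() -> Iterator[tuple[float, str]]:
    """Stand-in for a camera stream with per-second scene descriptions."""
    yield 0.0, "Empty loading dock"
    yield 1.0, "Forklift enters loading dock"
    yield 2.0, "Worker without helmet near forklift"
    yield 3.0, "Worker leaves, forklift parked"


RULES = {
    "missing_helmet": ("without helmet",),
    "forklift_active": ("forklift", "enters"),
}

# list() is only for demonstration; a live consumer would iterate lazily.
events = list(detect_events(simulated_feed(), RULES))
```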

Real-time Alert Structure
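An alert emitted by a real-time perception system might carry the fields below. The schema is an assumption made for illustration (field names, stream ID, and values are invented). It simply combines the properties this article asks of perception results: a timestamp, a playable clip range, and a confidence score.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Alert:
    stream_id: str
    event: str
    detected_at: str     # ISO 8601 timestamp, UTC
    clip_start_s: float  # offset into the stream where the event begins
    clip_end_s: float    # offset where it ends, for playable verification
    confidence: float    # 0.0 to 1.0

    def to_json(self) -> str:
        """Serialize with sorted keys so the payload is stable across runs."""
        return json.dumps(asdict(self), sort_keys=True)


alert = Alert(
    stream_id="dock-cam-3",
    event="missing_helmet",
    detected_at=datetime(2026, 2, 19, 14, 0, 2, tzinfo=timezone.utc).isoformat(),
    clip_start_s=7202.0,
    clip_end_s=7210.0,
    confidence=0.88,
)
payload = alert.to_json()
```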

Key Difference from Batch

No recording delay — events detected as they occur

<1 second latency from event to alert

No waiting for processing to complete

Continuous awareness, not retrospective analysis

According to MIT’s Real-Time Systems Lab (2025), perception-enabled monitoring reduces mean time to detection by 99.97% compared to manual video review (hours/days → <1 second).
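The 99.97% figure can be sanity-checked with simple arithmetic. The calculation below is not from the cited source; it only shows which manual-review baselines are consistent with a one-second detection time.

```python
def reduction_percent(baseline_seconds: float, new_seconds: float) -> float:
    """Percentage reduction in time to detection relative to the baseline."""
    if baseline_seconds <= 0:
        raise ValueError("baseline_seconds must be positive")
    return (1 - new_seconds / baseline_seconds) * 100


ONE_HOUR = 3600
ONE_DAY = 24 * ONE_HOUR

vs_one_hour = reduction_percent(ONE_HOUR, 1)  # about 99.97
vs_one_day = reduction_percent(ONE_DAY, 1)    # about 99.999
```

A one-hour baseline reproduces 99.97%. Longer baselines, such as the multi-day review workflow described earlier, would give an even higher percentage.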

The Infrastructure Shift

For 70 years, video infrastructure optimized for:

Human-Centric Goals:

High visual fidelity (4K, 8K, HDR)

Low latency playback (<2 second buffering)

Global distribution (CDNs worldwide)

Human consumption (entertainment, communication)

The next era optimizes for:

AI-Centric Goals:

Semantic understanding (what’s happening, not just pixels)

Instant queryability (<200ms across millions of hours)

Real-time event detection (<1 second latency)

Machine consumption (queries, not viewers)

Performance Comparison

| Metric | Playback Infra | Perception Infra |
|---|---|---|
| Query latency | N/A (must watch) | <200ms |
| Event detection | N/A (manual review) | <1 second |
| Search scope | Titles/descriptions | Inside video content |
| Scale | Viewers | Queries per second |
| Primary user | Human eyes | AI agents |

FAQs

Q: Why was video infrastructure built for playback instead of perception?

A: Video infrastructure evolved from 1940s television broadcasting through 2000s internet streaming, optimized for one goal: delivering frames to human viewers. The technology stack (codecs, CDNs, players) was designed when the only use case was humans watching content sequentially. AI perception wasn’t a conceivable requirement.

Q: Can existing platforms like YouTube add perception capabilities?

A: Theoretically yes, but it requires fundamental architectural changes. YouTube’s infrastructure is optimized for playback delivery (CDN caching, streaming protocols), not semantic indexing. Adding perception would mean building a parallel index layer across 800 million videos, representing billions of dollars in infrastructure investment.

Q: What’s the main difference between playback and perception architecture?

A: Playback architecture moves pixels from source to screen (encode→distribute→decode→display). Perception architecture extracts meaning from video (ingest→index→query→evidence). Playback answers “show me video”; perception answers “what happened when?”

Q: Why can’t Zoom search inside meeting recordings?

A: Zoom provides playback files and audio transcription but no visual understanding layer. Searching inside recordings requires indexing screen shares, slides, whiteboard content, and participant actions, none of which Zoom’s playback-focused infrastructure captures or indexes.

Q: How much enterprise video footage goes unsearched?

A: According to Gartner’s 2025 survey, enterprises can effectively search only 3% of captured video. The remaining 97% exists as files requiring manual review. Organizations generate 2.5 million hours of video daily with no semantic access layer.

Q: What enables real-time perception vs batch processing?

A: Real-time perception uses stream-based indexing that processes video incrementally as it flows, detecting events with <1 second latency. Batch processing waits for complete files, then processes frame-by-frame, requiring hours before content becomes queryable.

Q: Can perception infrastructure still support playback?

A: Yes. Perception-first architecture maintains original video for playback verification. The difference is adding an index layer for queries while preserving playback capability. You can both query semantically AND play back results for verification.

Q: What’s the cost difference between playback and perception infrastructure?

A: Playback infrastructure costs are dominated by CDN bandwidth and storage. Perception adds indexing compute (typically $5-10 per hour of video) but reduces operational costs by 80-90% through automated search versus manual review.
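As a worked example, applying the $5-10 per hour range quoted above to the 10,000-hour training library mentioned in the quote earlier in this article gives a rough one-time indexing budget. This is illustrative arithmetic only; real pricing will vary by provider and workload.

```python
def indexing_cost_range(
    hours: float, low_rate: float = 5.0, high_rate: float = 10.0
) -> tuple[float, float]:
    """Return (low, high) indexing cost in dollars for `hours` of video."""
    if hours < 0:
        raise ValueError("hours must be non-negative")
    return hours * low_rate, hours * high_rate


low, high = indexing_cost_range(10_000)  # (50000.0, 100000.0)
```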

Q: Why does perception matter for enterprise video?

A: Enterprises capture security footage, training videos, customer calls, and manufacturing feeds but cannot query them. Perception enables queries such as “Show safety violations,” “Find product mentions,” and “When was this discussed?”, transforming captured video from a storage liability into a queryable asset.

Q: What industries benefit most from perception-first video?

A: Industries with large video archives and real-time monitoring needs: security (threat detection), manufacturing (quality control), customer service (support analysis), healthcare (procedure review), legal (deposition search), and media (content search/editing).

Key Takeaways

The Playback Legacy:

• 70 years of infrastructure optimized for sequential playback to human viewers

• YouTube, Netflix, and Zoom excel at buffering and rendering but fail at content queries

• Entire stack (codecs, CDNs, players, protocols) designed for delivery, not understanding

• Result: Billions watch billions of hours, but AI can’t query what’s inside

The Platform Gaps:

• YouTube: 800M videos, searchable by titles, descriptions, and auto-generated subtitles, not by visual content

• Zoom: 3.3T meeting minutes annually, recordings are opaque blobs requiring manual review

• Enterprise: 2.5M hours daily, only 3% effectively searchable, 97% requires human review

• Manual review doesn’t scale: 7-day incident response, 24 hours to review 24 hours of footage

Perception-First Architecture:

• New pipeline: Source → Ingest → Index → Query → Evidence

• Index layer extracts semantic meaning, enables <200ms natural language queries

• Real-time capable: events detected in <1 second, not hours/days later

• Transforms video from “thing you watch” to “thing you query”

The Infrastructure Shift:

• Old goal: High fidelity playback for human viewers (4K, low latency, global CDN)

• New goal: Semantic understanding for AI agents (<200ms queries, real-time events)

• 99.97% lower mean time to detection (hours/days → <1 second with real-time perception)

• 80-90% cost reduction through automation vs manual review

Why It Matters:

• AI agents interrogate video, they don’t watch it

• Playback infrastructure has no answers for “What happened at 2pm?”

• Perception infrastructure is being built from scratch for an AI-first future

• Video is being rebuilt, not for human eyes but for machine understanding